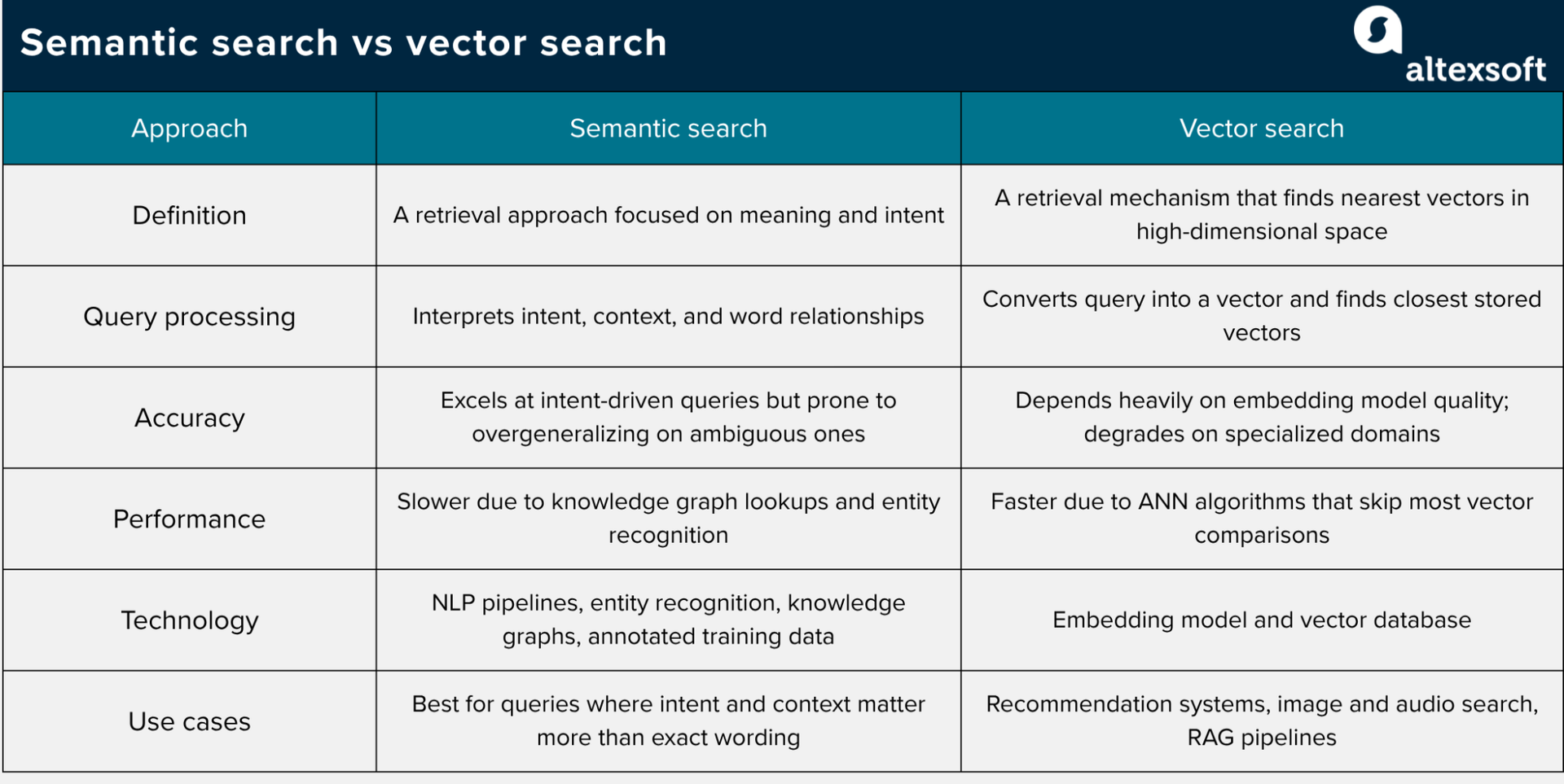

It's easy to come across the terms "semantic search" and "vector search" and assume they mean the same thing. They are related but distinct: semantic search is a retrieval approach that focuses on user intent, while vector search is one common mathematical indexing mechanism used to implement it.

In this piece, we take a closer look at semantic and vector search and see how they compare across various factors. The table below summarizes the main differences and overlaps.

What are semantic search and vector search? TL;DR

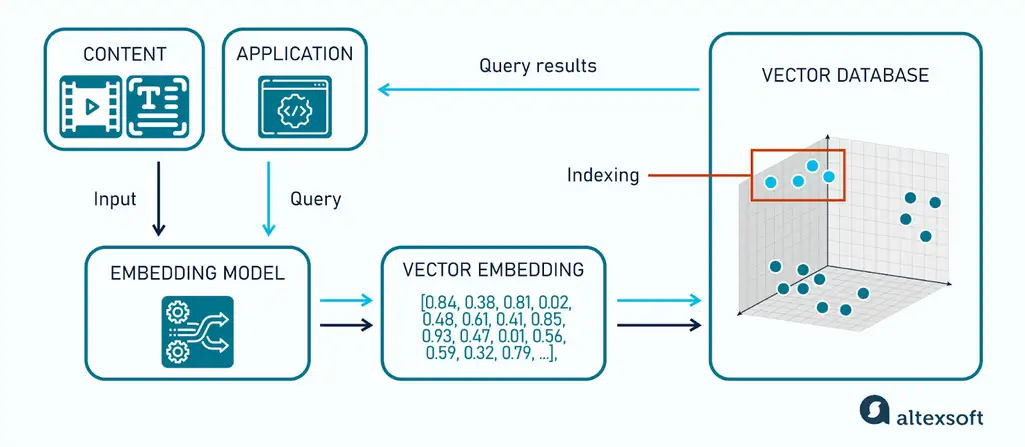

What is vector search? Vector search is a retrieval method that converts data into numerical vectors (points in a high-dimensional space) and finds the closest matches to a query.

How does vector search work? It begins by transforming unstructured data into high-dimensional dense vectors using an embedding model, typically a transformer-based encoder pre-trained on massive datasets. The vectors are stored in vector databases like Pinecone and ChromaDB. When a query comes in, the same embedding model converts it into a vector. The vector database or search index compares the query vector with stored vectors using a similarity or distance metric such as cosine similarity, dot product, or Euclidean distance. To maintain sub-second latency at scale, systems use approximate nearest neighbor (ANN) algorithms, with HNSW (Hierarchical Navigable Small World) being one of the most widely used options for balancing recall and speed.
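To make the three metrics concrete, here is a minimal sketch in plain Python. The vectors are made-up three-dimensional toys, not output from a real embedding model, and the function names are our own rather than any vector database's API.

```python
import math

def dot(a, b):
    """Dot product: larger when vectors point the same way and are long."""
    return sum(x * y for x, y in zip(a, b))

def cosine_similarity(a, b):
    """Cosine of the angle between a and b; ignores vector length."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))

def euclidean_distance(a, b):
    """Straight-line distance; smaller means closer."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Toy "embeddings" (assumed values for illustration only).
query = [1.0, 0.0, 1.0]
doc_same_direction = [2.0, 0.0, 2.0]   # same direction, twice the length
doc_orthogonal = [0.0, 3.0, 0.0]       # shares nothing with the query

for name, doc in [("same direction", doc_same_direction),
                  ("orthogonal", doc_orthogonal)]:
    print(name,
          round(cosine_similarity(query, doc), 3),
          round(dot(query, doc), 3),
          round(euclidean_distance(query, doc), 3))
```

Note how the first document has a cosine similarity of about 1.0 even though its Euclidean distance from the query is not zero: cosine similarity only looks at direction, which is one reason the choice of metric should match how the embedding model was trained.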

What are the limitations of vector search? Its accuracy depends heavily on the embedding model. General-purpose models degrade on specialized domains, and HNSW indexes are memory-intensive at scale.

What are the use cases of vector search? Multimodal retrieval (image/audio), cross-selling engines in recommendation systems, RAG pipelines for unstructured enterprise data, and any application that needs to find similar content across large datasets, regardless of data type.

What is semantic search? Semantic search is a retrieval approach that understands user intent rather than matching exact words. It returns relevant results even when the query and the document don't share the same terms.

How can semantic search use vector search? In many modern implementations, semantic search uses embeddings and vector retrieval: the system converts the query and the indexed content into vector representations, then retrieves the nearest matches. Some semantic search systems add NLP pipelines, entity recognition, reranking, or knowledge graphs to improve intent interpretation and result quality.

Why does semantic search matter? Traditional lexical systems like BM25 and TF-IDF rank documents based on term overlap, so they are strongest when query terms appear in the document, but they usually struggle with synonyms and paraphrases unless expanded with additional techniques. A search for "affordable laptops" would miss a document about "budget notebooks" entirely. Semantic search closes that gap by understanding the relationship between terms, not just matching them.
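The vocabulary gap is easy to see with a deliberately simplified stand-in for lexical matching. The sketch below only checks which whitespace-separated terms two strings share; real BM25 or TF-IDF scoring also weighs term frequency and rarity, so treat `term_overlap` as a hypothetical helper for illustration.

```python
def term_overlap(query: str, document: str) -> set[str]:
    """Return the lowercase terms that appear in both query and document."""
    return set(query.lower().split()) & set(document.lower().split())

query = "affordable laptops"
doc_a = "budget notebooks for students"
doc_b = "affordable laptops for students"

print(term_overlap(query, doc_a))  # empty set: no shared terms
print(term_overlap(query, doc_b))  # both query terms match
```

With no shared terms, a purely overlap-based ranker has nothing to score `doc_a` on, even though it answers the same need as `doc_b`.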

What are the limitations of semantic search? The extra layers add implementation complexity and more points of failure. It also struggles with ambiguous queries—when a term has multiple meanings, the system can overgeneralize and return loosely related results. A search for "python" without sufficient context can lead to polysemic ambiguity, where the system may prioritize the biological organism over the programming language if the underlying embedding space is not fine-tuned for tech-specific applications.

What are the use cases of semantic search? It works best for queries where intent and context matter more than exact wording, such as customer support tools and enterprise knowledge bases.

Semantic search vs vector search: how they compare

While semantic and vector search are not direct alternatives, understanding how they differ across specific factors still matters, especially when deciding how to architect your search system.

Query processing

Semantic search interprets a query by analyzing intent, context, and word relationships. A query like “best running shoes for bad knees” is understood as a health and comfort problem.

Vector search is more mechanical. The query is converted into a vector by the same embedding model that indexed the data. The vector database then finds the stored vectors closest to the query vector.
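That mechanical step can be sketched as an exhaustive nearest-neighbor lookup. The document IDs and vector values below are invented for illustration; in a real system they would come from the same embedding model that encodes the query, and a vector database would replace this brute-force loop with an index.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query_vec, index, k=2):
    """Score every stored vector against the query and return the k best IDs."""
    scored = [(cosine(query_vec, vec), doc_id) for doc_id, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]

# Hypothetical stored vectors (toy values, not real embeddings).
index = {
    "knee-support-shoes": [0.9, 0.8, 0.1],
    "trail-running-shoes": [0.8, 0.2, 0.3],
    "office-chairs": [0.1, 0.3, 0.9],
}

# Hypothetical vector for the query "best running shoes for bad knees".
query_vec = [0.9, 0.7, 0.1]

print(top_k(query_vec, index))  # ['knee-support-shoes', 'trail-running-shoes']
```

Nothing in `top_k` knows what the query means. Any understanding of intent lives in how the vectors were produced, which is the distinction this section draws.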

Accuracy

Semantic search can go deeper on intent by layering in knowledge graphs and entity recognition. That depth comes at a cost because each additional layer adds implementation complexity and more points of failure. Semantic search also struggles with ambiguous queries. When a term has multiple meanings depending on context, the system can overgeneralize and return results that are loosely related but miss what the user actually needed.

Vector search accuracy depends heavily on the embedding model. When the model is strong and the domain matches what it was trained on, it handles synonyms and paraphrasing well. However, general-purpose models tend to degrade in specialized domains. A 2025 study in MedTech found that "vanilla" embedding models often suffer from "semantic drift" compared to domain-specific lexical models (such as BM25 tuned for ICD-10 codes) when handling highly technical, structured clinical documentation.

Performance

For semantic search, the additional components, such as knowledge graph lookups, entity recognition, reranking, or query expansion, can improve relevance but may also add latency and operational complexity. Each layer adds processing on top of the base embedding inference. The costs can quickly add up, especially when you factor in scale, because compute and infrastructure requirements increase as your dataset grows.

Vector search can be fast at scale when it uses approximate nearest neighbor indexes such as HNSW, which avoid exhaustive comparison with every stored vector. These algorithms navigate a multi-layer graph structure to skip most comparisons and zero in on the nearest results, typically returning near-exact results in milliseconds.

However, that speed comes with its own infrastructure cost. Embedding inference adds roughly 20–100ms per query, depending on model size, and HNSW indexes live in memory. A collection of 100 million 768-dimension vectors stored as FP32 requires approximately 307 GB of raw memory (100 million × 768 × 4 bytes), and the final memory usage is higher once you factor in the HNSW graph overhead. Teams working at that scale usually apply quantization, a technique that converts vector values from 32-bit floats to smaller representations like int8 or binary, to cut memory at a small accuracy cost.
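The memory figure is simple arithmetic, and the effect of quantization can be sketched the same way. The code below is a rough illustration under stated assumptions: it counts raw vector bytes only (no graph overhead), uses decimal gigabytes, and implements a basic symmetric int8 scheme that we chose for clarity, not the method any particular vector database uses.

```python
def raw_vector_memory_gb(num_vectors: int, dims: int, bytes_per_value: int) -> float:
    """Raw storage for the vectors alone, in decimal gigabytes."""
    return num_vectors * dims * bytes_per_value / 1e9

fp32_gb = raw_vector_memory_gb(100_000_000, 768, 4)  # 32-bit floats
int8_gb = raw_vector_memory_gb(100_000_000, 768, 1)  # 8-bit integers
print(fp32_gb, int8_gb)  # 307.2 76.8

def quantize_int8(vector):
    """Symmetric scalar quantization: map floats to integers in [-127, 127]."""
    scale = max(abs(x) for x in vector) / 127 or 1.0  # avoid a zero scale
    return [round(x / scale) for x in vector], scale

def dequantize(codes, scale):
    """Approximate reconstruction of the original floats."""
    return [c * scale for c in codes]

vector = [0.6, -1.0, 0.2]  # toy values for illustration
codes, scale = quantize_int8(vector)
restored = dequantize(codes, scale)
print(codes)     # [76, -127, 25]
print(restored)  # close to the original, but not identical
```

The small gap between `vector` and `restored` is the "small accuracy cost": each value can be off by up to half a quantization step, in exchange for a 4x reduction in raw storage.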

Technology

Many modern semantic and vector search systems use transformer-based embedding models, but vector search can work with any embedding model and any data type that can be represented as vectors. Where they differ is in the additional components they need to function.

Semantic search combines NLP pipelines and entity recognition, and more sophisticated implementations may include knowledge graphs that map relationships between concepts. Building this well requires high-quality, well-annotated training data, which can be hard to source if the domain is specialized or the dataset is small. The result is a more complex system with more moving parts to maintain.

Vector search can be simpler to start with: you need a way to generate vectors, store them, index them, and query them. However, it can become complex in production, as you’ll need to handle chunking, metadata, filtering, index tuning, evaluation, and updates.

Use cases

Semantic search is best suited when intent and context drive the query. For example, customer support tools can use it to match a user's question to the right answer even when the phrasing doesn't match the documentation, while enterprise knowledge bases can pull up relevant internal content without employees needing to know the exact terms a document uses.

Vector search has a wider range of applications because it works on any data that can be embedded, including text, images, audio, and code. Recommendation systems use it to find items similar to what a user interacted with, comparing feature vectors rather than descriptions. Image and audio search use it to find visually or acoustically similar content without relying on tags or filenames. RAG pipelines depend on it to pull the most relevant chunks from a document store before passing them to an LLM.

Where semantic search and vector search overlap

In practice, most semantic search implementations run on vector search under the hood. In embedding-based semantic search, the embedding model represents meaning, while the vector index retrieves nearby vectors. Other components, such as lexical search, metadata filters, and rerankers, may also shape the final results.
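One way these components might fit together is sketched below: a metadata filter narrows the candidates, then a weighted blend of vector similarity and term overlap ranks what remains. Everything here is an assumption made for illustration, including the document fields, the toy two-dimensional vectors, the simple overlap score standing in for a lexical ranker, and the `alpha` weight.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def search(query_vec, query_terms, docs, category, alpha=0.7, k=2):
    """Filter by metadata, then rank by a blend of vector and lexical scores."""
    results = []
    for doc in docs:
        if doc["category"] != category:  # metadata filter
            continue
        vector_score = cosine(query_vec, doc["vector"])
        doc_terms = set(doc["text"].lower().split())
        lexical_score = len(query_terms & doc_terms) / len(query_terms)
        # alpha is a hypothetical weight: 1.0 means vectors only, 0.0 lexical only.
        blended = alpha * vector_score + (1 - alpha) * lexical_score
        results.append((blended, doc["id"]))
    results.sort(reverse=True)
    return [doc_id for _, doc_id in results[:k]]

# Hypothetical documents with toy vectors.
docs = [
    {"id": "a", "text": "budget notebooks under 500", "category": "laptops", "vector": [0.9, 0.1]},
    {"id": "b", "text": "affordable laptops for gaming", "category": "laptops", "vector": [0.8, 0.3]},
    {"id": "c", "text": "affordable laptop bags", "category": "accessories", "vector": [0.85, 0.2]},
]

print(search([0.9, 0.2], {"affordable", "laptops"}, docs, category="laptops"))
# ['b', 'a']
```

Document `a` shares no words with the query and still makes the list through its vector score, while `c` is excluded by the filter before any scoring happens. Production systems often use more robust fusion and reranking methods than a single weighted sum.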

That said, semantic and vector search aren't interchangeable. A vector search pipeline is not necessarily semantic just because it uses vectors; it becomes semantic when the vectors are designed to represent meaning, and the retrieval task depends on that meaning. What makes it semantic is the intent behind the retrieval, not the mechanism itself.

With a software engineering background, Nefe demystifies technology-specific topics—such as web development, cloud computing, and data science—for readers of all levels.
