Vector databases have become an invaluable part of many modern AI systems, powering everything from AI chatbots and recommendation engines to fraud detection systems and intelligent document search.

In this article, we will explore some of the most popular and widely adopted solutions in the market, namely Chroma, Pinecone, Qdrant, Milvus, Weaviate, pgvector, MongoDB, and FAISS, to see how they compare so you can find the right fit for your stack.

The table below summarizes the key insights we’ll cover in detail.

Vector databases compared

A quick primer on vector databases

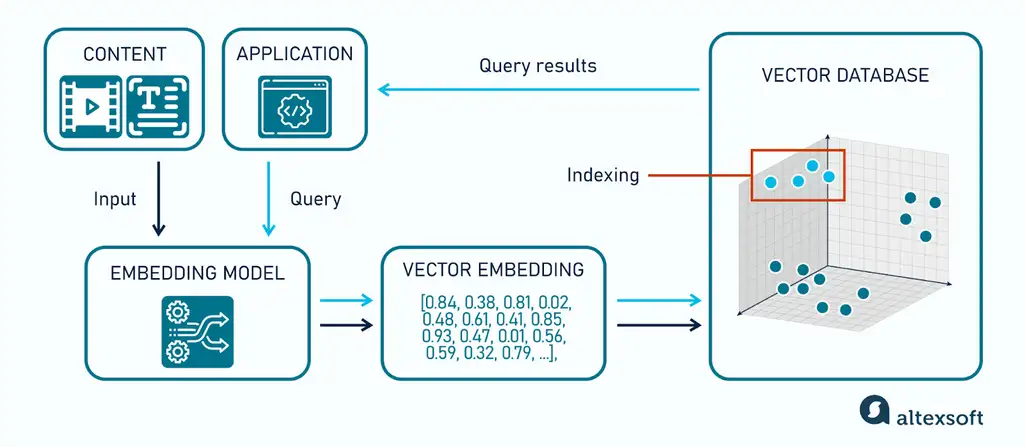

Vector databases store, manage, and query mathematical representations of unstructured data—such as text, images, and audio. These representations, known as vector embeddings, are sequences of numbers that capture the meaning and characteristics of a data point in relation to others. As a result, similar items end up close together in a multidimensional space, while dissimilar ones are placed further apart.

Representations in the database are generated by an embedding model. When a query comes in, the same model converts it into a vector, and the database then performs a similarity search—using distance metrics like cosine similarity or Euclidean distance—to find the closest matches. To keep this efficient at scale, vector databases rely on approximate nearest neighbor (ANN) indexing techniques that avoid comparing the query against every stored vector.
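To make the similarity step concrete, here is a minimal sketch of exact (non-ANN) search in plain Python. The three-dimensional vectors and the `documents` dictionary are made-up toy data, not output from any real embedding model, and the helper names are illustrative.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def euclidean_distance(a, b):
    """Straight-line distance between two vectors (0.0 means identical)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Toy "embeddings" (assumption): real models emit hundreds or thousands of dimensions.
documents = {
    "cat": [0.9, 0.1, 0.0],
    "kitten": [0.8, 0.2, 0.1],
    "car": [0.1, 0.9, 0.3],
}

def nearest(query, k=2):
    """Exact search: score every stored vector and keep the k most similar."""
    scored = [(cosine_similarity(query, vec), name) for name, vec in documents.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]

query = [0.85, 0.15, 0.05]
top_matches = nearest(query)
print(top_matches)
```

This loop compares the query against every stored vector, which is exactly the cost that ANN indexes are designed to avoid on large collections.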

That’s how a vector database works at a high level. Now, let’s look at the key factors to consider when choosing the right platform for your business.

Factors to consider when choosing a vector database

Before we explore the vector solutions in detail, here are some factors to keep in mind; they’ll help you narrow down the right fit for your project.

Retrieval capabilities

How a vector database retrieves results has a direct impact on the quality of what your application returns. The main approaches include

- dense vector search, which finds the closest matches to your query based on semantic similarity;

- sparse search, which relies on keyword-based representations (e.g., BM25) and works well for exact terms, proper nouns, product codes, and domain-specific queries;

- hybrid search, which combines dense and sparse signals in a single query, balancing semantic understanding with keyword precision; and

- metadata filtering, which narrows the search space using structured attributes such as date, category, or location.

While not a retrieval mode itself, reranking is also important. It introduces a second stage after initial retrieval, where a more sophisticated model re-scores and reorders the top results to improve relevance.
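As a rough illustration of how hybrid search can merge dense and sparse results, the sketch below uses reciprocal rank fusion (RRF), one common fusion method. It is not necessarily what any particular database does internally, and the document IDs, rankings, and metadata are hypothetical.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists of IDs; each list contributes 1 / (k + rank) per document."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

# Hypothetical results for the same query from two retrievers.
dense_ranking = ["doc_a", "doc_b", "doc_c"]   # semantic (vector) search order
sparse_ranking = ["doc_c", "doc_a", "doc_d"]  # keyword (e.g., BM25) search order

fused = reciprocal_rank_fusion([dense_ranking, sparse_ranking])
print(fused)  # ['doc_a', 'doc_c', 'doc_b', 'doc_d']

# Metadata filtering on a structured attribute (made-up "year" field).
metadata = {
    "doc_a": {"year": 2024},
    "doc_b": {"year": 2021},
    "doc_c": {"year": 2023},
    "doc_d": {"year": 2024},
}
recent = [doc_id for doc_id in fused if metadata[doc_id]["year"] >= 2023]
print(recent)  # ['doc_a', 'doc_c', 'doc_d']
```

Documents that rank well in both lists rise to the top. The filter is applied after fusion here only to keep the sketch short; databases typically apply it before or during the search to narrow the search space.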

Deployment options

Most vector databases can be used in two main ways: self-hosted or managed.

Self-hosted deployment means you run the database yourself—on your own cloud account or on-premise infrastructure. This gives you full control over configuration, scaling, and data, but also means your team is responsible for setup, maintenance, and reliability.

Managed services are hosted by the vendor or a cloud provider. They handle provisioning, scaling, updates, and uptime, so your team can focus on building the application. The tradeoff is higher cost and less control over the underlying infrastructure.

Some tools, like Pinecone or Weaviate, offer fully managed services out of the box. Others, such as pgvector, don’t have a native managed offering and are typically deployed either self-hosted or through third-party platforms like managed PostgreSQL services.

Scalability

How many vectors do you need to store and query—both today and over the next 6 to 12 months?

Most modern vector databases can handle millions to tens of millions of vectors with good performance under typical workloads. As you move into the tens of millions, performance increasingly depends on indexing strategies (e.g., HNSW, IVF), hardware resources, and query patterns.

At hundreds of millions and beyond, differences between databases become more pronounced. Some systems are optimized for distributed storage and large-scale workloads, while others are better suited for smaller or mid-sized deployments.
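A quick back-of-the-envelope calculation helps show why scale changes the picture. The sketch below estimates the raw size of uncompressed float32 vectors, assuming 768 dimensions (an arbitrary but common embedding size). Index structures, metadata, and replicas add overhead on top of this figure.

```python
def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Raw storage for dense vectors; float32 uses 4 bytes per value."""
    return num_vectors * dimensions * bytes_per_value

DIMENSIONS = 768  # assumption: depends on your embedding model

for count in (1_000_000, 10_000_000, 100_000_000):
    gib = raw_vector_bytes(count, DIMENSIONS) / 1024**3
    print(f"{count:>11,} vectors x {DIMENSIONS} dims = {gib:,.1f} GiB")
```

Running the same estimate with your own vector count and dimensionality gives a first indication of whether an in-memory index is realistic on a single machine.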

The key is to choose a database that can scale with your needs—but avoid over-engineering for a scale you don’t expect to reach in the near term.

Performance

When evaluating performance, speed is often the first metric people consider—but it’s only part of the picture. Other factors matter just as much.

Retrieval accuracy: A fast database isn’t useful if it returns irrelevant results. In a RAG pipeline, poor retrieval means the LLM works with incomplete or misleading context, which directly impacts output quality.

Concurrent throughput: A system that feels fast in testing can degrade under real traffic. Always benchmark under realistic load, not just single-query scenarios.

Write performance: If your use case involves frequent updates or streaming data, ensure the database can handle sustained write throughput without negatively affecting query latency or index quality.

Public benchmarks like ANN Benchmarks and VectorDBBench are useful starting points. However, results vary depending on datasets, index configurations, and hardware, and vendor-reported benchmarks are often optimized for favorable conditions. Always validate performance using your own data and workload.
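When validating on your own data, retrieval accuracy for an ANN index is often expressed as recall@k: the share of the true nearest neighbors that the approximate search returned. A minimal sketch follows, with hypothetical ID lists standing in for real query results.

```python
def recall_at_k(approx_ids, exact_ids, k):
    """Fraction of the true top-k neighbors that the ANN search also returned."""
    truth = set(exact_ids[:k])
    found = set(approx_ids[:k])
    return len(truth & found) / len(truth)

exact = [7, 2, 9, 4, 1]   # ground truth from a brute-force search
approx = [7, 9, 3, 2, 8]  # hypothetical ANN result for the same query

print(recall_at_k(approx, exact, k=5))  # 0.6
```

In practice you would average this value over many queries and track it alongside latency and throughput.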

Available index types and flexibility

The index type a database uses determines how it organizes vectors internally, which directly affects query speed, memory usage, and retrieval accuracy.

There are dozens of indexing approaches, and new variants continue to emerge. Some of the most widely used include:

- HNSW (Hierarchical Navigable Small World): The most commonly used ANN algorithm, offering fast queries and high recall. It typically keeps the index in memory, which can become expensive at scale.

- IVF (Inverted File Index): Partitions vectors into clusters and searches only a subset of them. This reduces memory and compute requirements but may require careful tuning (e.g., number of clusters) to maintain recall.

- DiskANN: Designed for datasets that exceed available RAM, storing most of the index on disk while still delivering low-latency search.

- FLAT (brute-force): Performs an exact search across all vectors, guaranteeing perfect recall, but is only practical for small datasets or offline evaluation due to its computational cost.
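The IVF idea can be sketched in a few lines of plain Python. This toy version assumes the centroids are already known (real systems learn them, typically with k-means), and the class name `TinyIVF` and the `nprobe` parameter name are illustrative choices rather than any specific database's API.

```python
import math

class TinyIVF:
    """Toy inverted-file index: vectors are bucketed by their nearest centroid."""

    def __init__(self, centroids):
        self.centroids = centroids
        self.lists = {i: [] for i in range(len(centroids))}

    def _closest_centroids(self, vec, n):
        order = sorted(
            range(len(self.centroids)),
            key=lambda i: math.dist(vec, self.centroids[i]),
        )
        return order[:n]

    def add(self, vec_id, vec):
        cluster = self._closest_centroids(vec, 1)[0]
        self.lists[cluster].append((vec_id, vec))

    def search(self, query, k=1, nprobe=1):
        """Scan only the nprobe clusters whose centroids are closest to the query."""
        candidates = []
        for cluster in self._closest_centroids(query, nprobe):
            candidates.extend(self.lists[cluster])
        candidates.sort(key=lambda item: math.dist(query, item[1]))
        return [vec_id for vec_id, _ in candidates[:k]]

index = TinyIVF(centroids=[(0.0, 0.0), (10.0, 10.0)])
for vec_id, vec in {"a": (1, 1), "b": (2, 0), "c": (9, 9), "d": (6, 6)}.items():
    index.add(vec_id, vec)

query = (4.5, 4.5)
print(index.search(query, k=1, nprobe=1))  # ['a']  (misses the true neighbor)
print(index.search(query, k=1, nprobe=2))  # ['d']  (scans both clusters)
```

The example shows the tuning tradeoff: probing fewer clusters is cheaper but can miss the true nearest neighbor, which here sits just across a cluster boundary.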

Resources like ANN Benchmarks provide a useful starting point for comparing how these algorithms perform across real-world datasets. However, results vary depending on configuration and hardware, so testing in your own environment is essential.

Not all workloads are the same, and no single index fits every case. Databases that support multiple index types give you the flexibility to

- scale from in-memory setups to larger, disk-based ones;

- balance speed and accuracy based on your needs; and

- adapt to different workloads and filtering requirements.

In practice, this means you’re not locked into a single performance tradeoff as your system evolves.

Ease of integration and existing infrastructure fit

Even the best database isn’t worth choosing if integrating it creates friction with your current system. Before committing to a solution, make sure it:

- supports your embedding provider or lets you easily bring your own embeddings;

- offers pre-built integrations with popular embedding models, AI frameworks, and orchestration tools—reducing the need for custom glue code;

- integrates with the AI frameworks your team already uses (e.g., RAG or agent frameworks);

- provides well-designed APIs and SDKs in your preferred languages;

- has clear, comprehensive documentation; and

- offers reliable support—either through an active community or vendor-backed channels.

Working through this list upfront will help you avoid integration gaps and costly rework later.

Price structure and total cost of ownership

Comparing costs across vector databases is rarely straightforward, as providers use different pricing models.

Usage-based pricing, used by platforms such as Pinecone, charges based on factors like storage, queries, and compute usage. This makes it easy to get started, but costs can grow quickly as usage scales.

Hybrid pricing (subscription + usage), used by platforms like Chroma Cloud, combines a base monthly plan with usage-based charges on top. This provides predictable baseline costs while still scaling with usage.

Resource-based pricing, used by platforms like Qdrant Cloud and Weaviate Cloud, ties cost to allocated resources such as CPU, memory, and disk (i.e., cluster size). This is often more predictable for steady workloads but can be less efficient when resources are underutilized or traffic is highly variable.

Self-hosted setups (e.g., with Milvus or pgvector) don’t have licensing fees, but incur infrastructure and engineering costs that can become significant as systems grow.

When estimating cost, don’t focus only on your current dataset. Model how expenses change as your data volume, query load, and replication needs increase. A solution that is inexpensive at the prototype stage may not remain cost-effective in production.
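One simple way to do this modeling is a small projection function. In the sketch below, every rate is a made-up placeholder and not any vendor's actual pricing. Substitute figures from the pricing page of the platform you are evaluating.

```python
def monthly_cost(vectors, queries_per_month, replicas=1,
                 storage_rate_per_million=1.0, query_rate_per_million=5.0):
    """Projected monthly cost. All rates are hypothetical placeholders."""
    storage = (vectors / 1_000_000) * storage_rate_per_million * replicas
    queries = (queries_per_month / 1_000_000) * query_rate_per_million
    return storage + queries

prototype = monthly_cost(vectors=1_000_000, queries_per_month=1_000_000)
production = monthly_cost(vectors=50_000_000, queries_per_month=100_000_000, replicas=3)

print(f"prototype:  {prototype:,.2f} per month")
print(f"production: {production:,.2f} per month")
```

Even with placeholder rates, the exercise shows how storage, query volume, and replication multiply together as a system moves from prototype to production.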

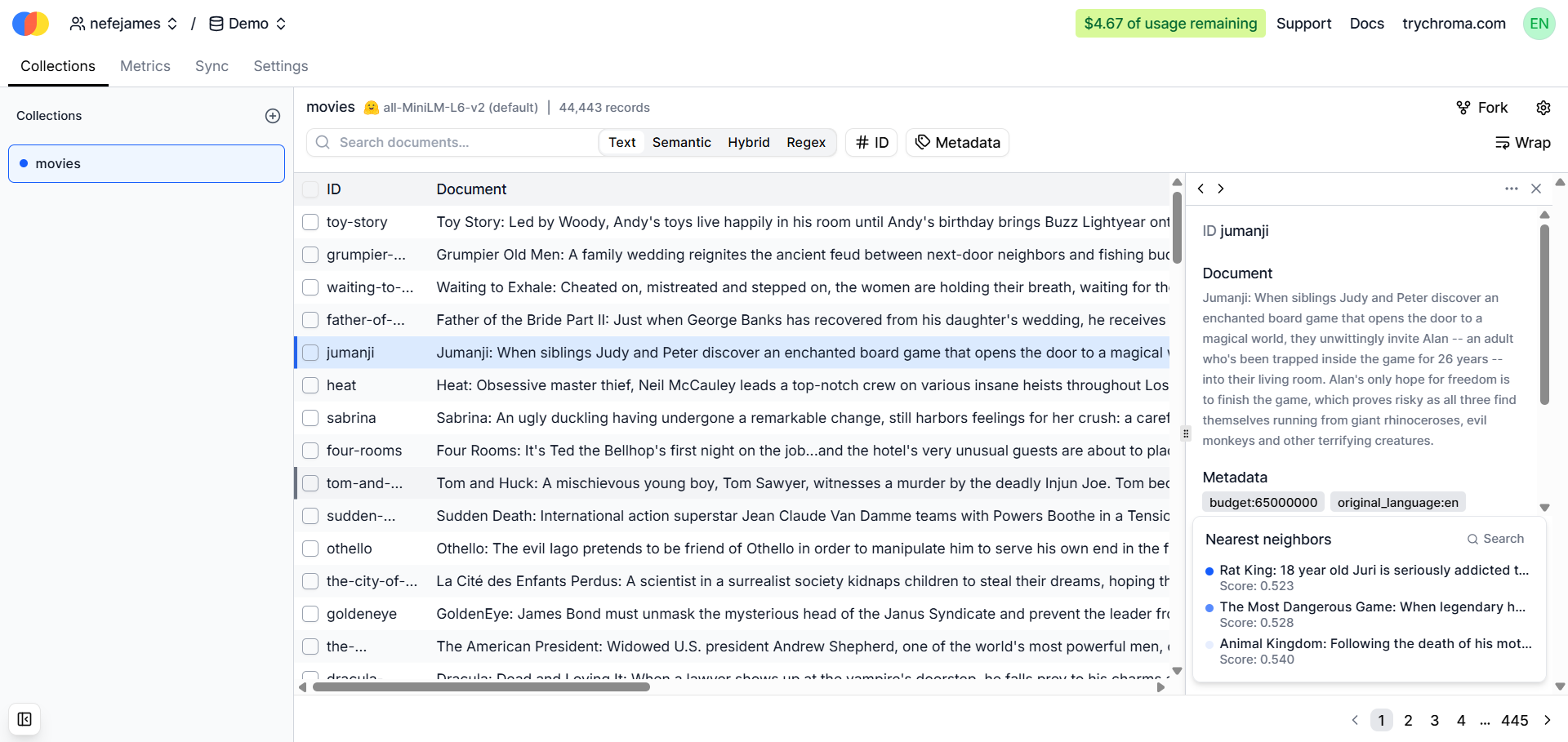

Chroma: Best for prototyping and RAG experimentation

ChromaDB is an open-source vector database built for AI applications, particularly those powered by large language models (LLMs). It’s designed to be lightweight and easy to get started with—you can run it locally in minutes, making it a strong choice for prototyping and early-stage development.

Chroma uses HNSW as its primary index in single-node (self-hosted) deployments. In distributed environments like Chroma Cloud, it relies on SPANN, a scalable ANN indexing approach optimized for large datasets and disk-based storage. In practice, index selection is tied to the deployment model and largely abstracted away from the user.

Chroma Cloud introduces a Search API that centralizes retrieval operations—combining vector search, filtering, and ranking logic—so you don’t have to orchestrate these steps manually in your application.

Deployment options

Since Chroma is open source, you can deploy it in several ways depending on your needs.

In-process (embedded mode): Run Chroma directly inside your application with no separate server. This is the fastest way to get started and is ideal for local development and prototyping.

Persistent local storage: Store data on disk so it survives restarts. This works well for development and small-scale or single-node production use cases.

Client–server mode (HTTP): Run Chroma as a standalone server and connect to it over HTTP, enabling multi-user access and separation between application and database.

For teams that don’t want to manage infrastructure, Chroma Cloud is the managed option. It abstracts deployment and scaling, so you don’t need to provision or operate servers.

If you’re deploying on Azure, there’s no native managed Chroma service, so you typically run it via containers (e.g., Docker or Kubernetes) or host it on virtual machines.

Scalability

ChromaDB is primarily designed as a single-node database, which works well for prototyping and small-to-medium workloads. As data volume and query traffic grow, resource constraints—such as CPU, memory, and disk I/O—can become limiting factors, especially compared to databases built for distributed scaling from the ground up.

Chroma Cloud abstracts the underlying infrastructure and handles scaling for you, reducing operational overhead. The tradeoff is reduced control: infrastructure details like sharding, replication, and resource allocation are managed internally and are not exposed for fine-grained configuration. For most teams, this is a benefit, but it may be limiting if you need precise control over performance tuning or data distribution.

Performance

ChromaDB performs well for single queries and light workloads, making it a good fit for low-traffic applications or proofs of concept (POCs).

Recent improvements—including a rewritten core with Rust components—have significantly improved performance, especially for concurrent operations and write throughput. However, exact gains depend on workload and configuration, so results can vary.

Under heavier concurrent traffic, performance can become less predictable, particularly compared to systems designed for distributed scaling. As load increases, query latency may vary more depending on resource contention and indexing behavior.

ChromaDB relies on in-memory indexing (e.g., HNSW), which helps keep individual queries fast. However, as datasets grow and approach memory limits, performance can degrade due to memory pressure and increased disk I/O.

If your application expects high concurrency or datasets that approach or exceed available memory, it’s important to benchmark ChromaDB under realistic conditions before using it in production.

Integrations

ChromaDB integrates with 27 popular embedding providers and AI frameworks, including OpenAI, Google Gemini, LangChain, and LlamaIndex, making it easy to plug into existing LLM pipelines. It supports Python natively, with additional support for JavaScript/TypeScript in some integrations.

Extensibility

As an open-source system, ChromaDB is extensible at both the application and system levels. You can plug in any embedding model via a simple interface and customize how the database is deployed or integrated into your pipeline.

In practice, Chroma prioritizes flexibility and developer control, making it well-suited for experimentation and custom RAG workflows.

Pricing

Chroma is open source and free to self-host, with costs limited to your infrastructure and engineering time.

For teams that prefer a managed option, Chroma Cloud offers paid plans that combine a base subscription with usage-based pricing. It includes a free Starter plan, while paid tiers typically begin with a Team plan (starting at $250 per month), with additional costs based on storage, queries, and compute usage.

What actually differentiates ChromaDB

Local-first design: Extremely easy to run and iterate locally without infrastructure setup

Tight Python ecosystem integration: Works seamlessly in notebooks and LLM pipelines

Low operational overhead: No need to think about clusters, sharding, or scaling early on

Fast prototyping: Ideal for experimentation before moving to more scalable systems

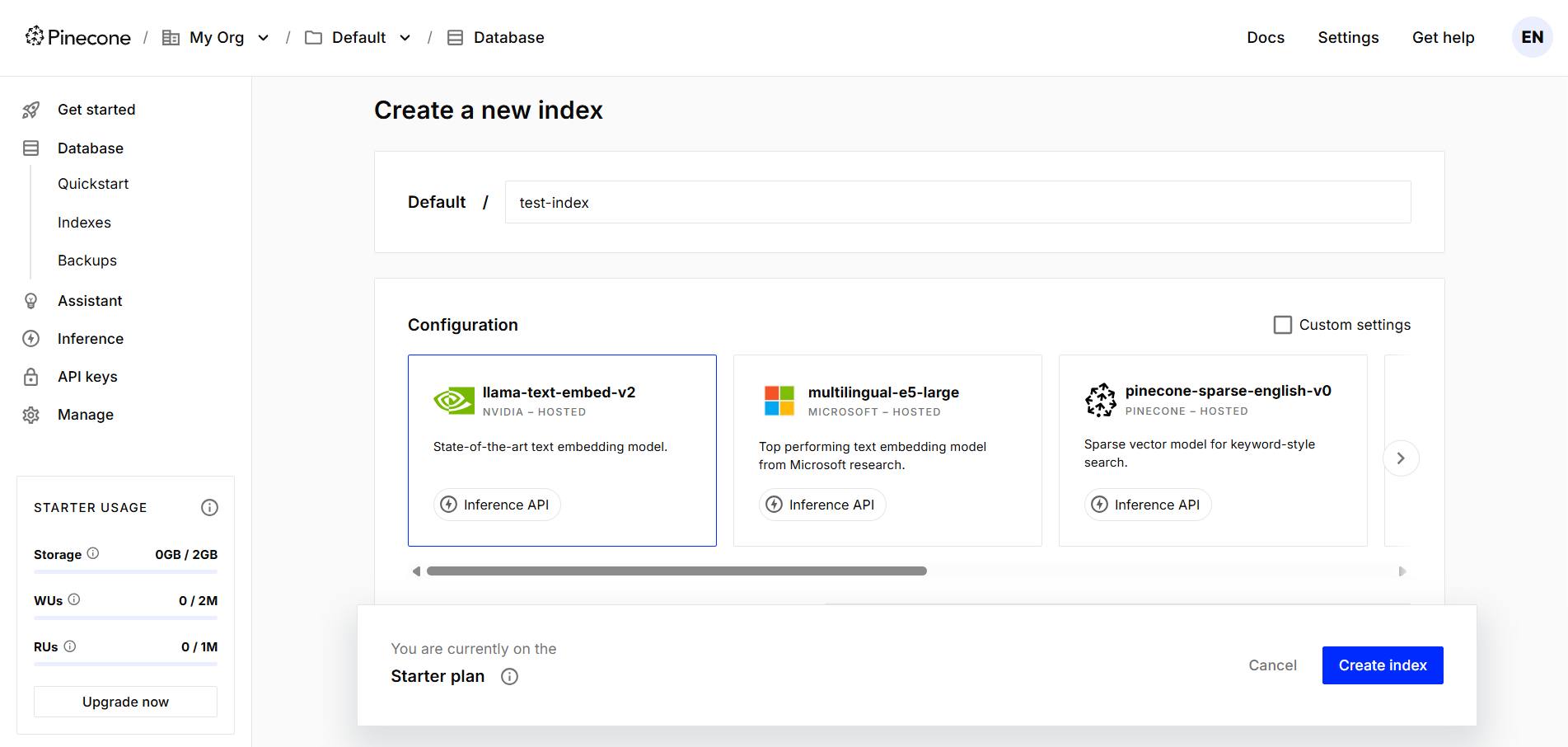

Pinecone: Best for production-scale AI apps

Pinecone is a fully managed vector database designed for production-scale AI applications. It abstracts infrastructure, scaling, and indexing decisions, so you don’t need to configure index types or manage low-level performance tuning.

It comes with built-in reranking capabilities, allowing you to improve result relevance after initial retrieval without integrating separate services. While reranking is typically invoked as a separate step or endpoint, it remains part of the same ecosystem, reducing pipeline complexity.

In addition, Pinecone offers an Inference API that enables you to generate embeddings and rerank results directly within the platform. This reduces the need to rely on external model providers and helps streamline the overall workflow, even though these capabilities are exposed through dedicated endpoints rather than a single unified interface.

For building end-user applications, Pinecone Assistant provides a managed way to create retrieval-based chat experiences on top of your data. It handles document ingestion, embedding, and indexing, simplifying the setup of a basic RAG pipeline. However, for more advanced use cases—such as agent-based workflows or custom orchestration—it is typically combined with external tools.

Deployment options

Since Pinecone is fully managed, you don’t have to provision or maintain infrastructure—it runs in the cloud and handles scaling and operational concerns for you.

For organizations with stricter data control or compliance requirements, Pinecone offers a Bring Your Own Cloud (BYOC) deployment model, allowing you to run Pinecone within your own cloud environment while still benefiting from its managed capabilities.

For local development and testing, Pinecone provides Pinecone Local, a Docker-based, in-memory emulator that lets you build and test applications without connecting to your Pinecone account or incurring usage costs. However, it is not intended for production use, as data is not persisted after shutdown, and it does not replicate full production behavior.

Scalability

Pinecone is designed to scale from small datasets to billions of vectors without requiring users to manage infrastructure directly. Its architecture handles resource allocation and scaling, supporting both early-stage prototypes and production workloads.

For more predictable, high-throughput performance, Pinecone offers Dedicated Read Nodes (DRN) with provisioned resources for read operations. These reduce cold starts and improve latency consistency. Scaling can be achieved by increasing replicas for throughput and availability, or expanding capacity as datasets grow.

As with most managed systems, costs can increase significantly at large scale, so efficient data modeling and query design become more important as your dataset and traffic grow.

Performance

Pinecone is optimized for low-latency vector search at scale, often delivering results in the tens of milliseconds range under typical workloads. Actual latency depends on factors such as dataset size, index configuration, query complexity, and concurrency.

Performance is generally consistent as workloads grow, particularly when using provisioned resources (e.g., dedicated read nodes). However, at larger scales, architecture choices and workload characteristics—such as filtering, query patterns, and data distribution—still have a meaningful impact on latency and throughput.

Integrations

Pinecone offers 50+ integrations spanning five categories: agent frameworks (Haystack and Genkit), data sources (Snowflake and Databricks), cloud infrastructure (Terraform and Vercel), AI model providers (Voyage AI and Jina AI), and observability tools (Datadog and TruLens).

Extensibility

Pinecone is a closed-source platform, so you cannot modify or extend its internal behavior (e.g., indexing algorithms or system architecture). However, it remains extensible at the application level: you can integrate it with different embedding models, orchestration frameworks, and custom pipelines.

In practice, this means Pinecone prioritizes ease of use and abstraction over low-level control—you work within the platform’s capabilities rather than customizing its internal operations.

Pricing

Pinecone uses a usage-based pricing model with a required monthly minimum (starting at $50), effectively combining subscription and pay-as-you-go billing. It also offers a free Starter plan for small-scale applications and testing.

What actually differentiates Pinecone

Fully managed, production-first design: No need to manage infrastructure, scaling, or index tuning—Pinecone handles it all, making it easier to move from prototype to production

Predictable performance at scale: Designed for high-concurrency, real-time workloads with stable latency, even under heavy traffic

Integrated ecosystem (database + inference): Combines vector storage with embedding and reranking APIs, reducing the need to integrate multiple external services

Low operational complexity: Minimal setup and maintenance required, making it suitable for teams without deep infrastructure or ML engineering expertise

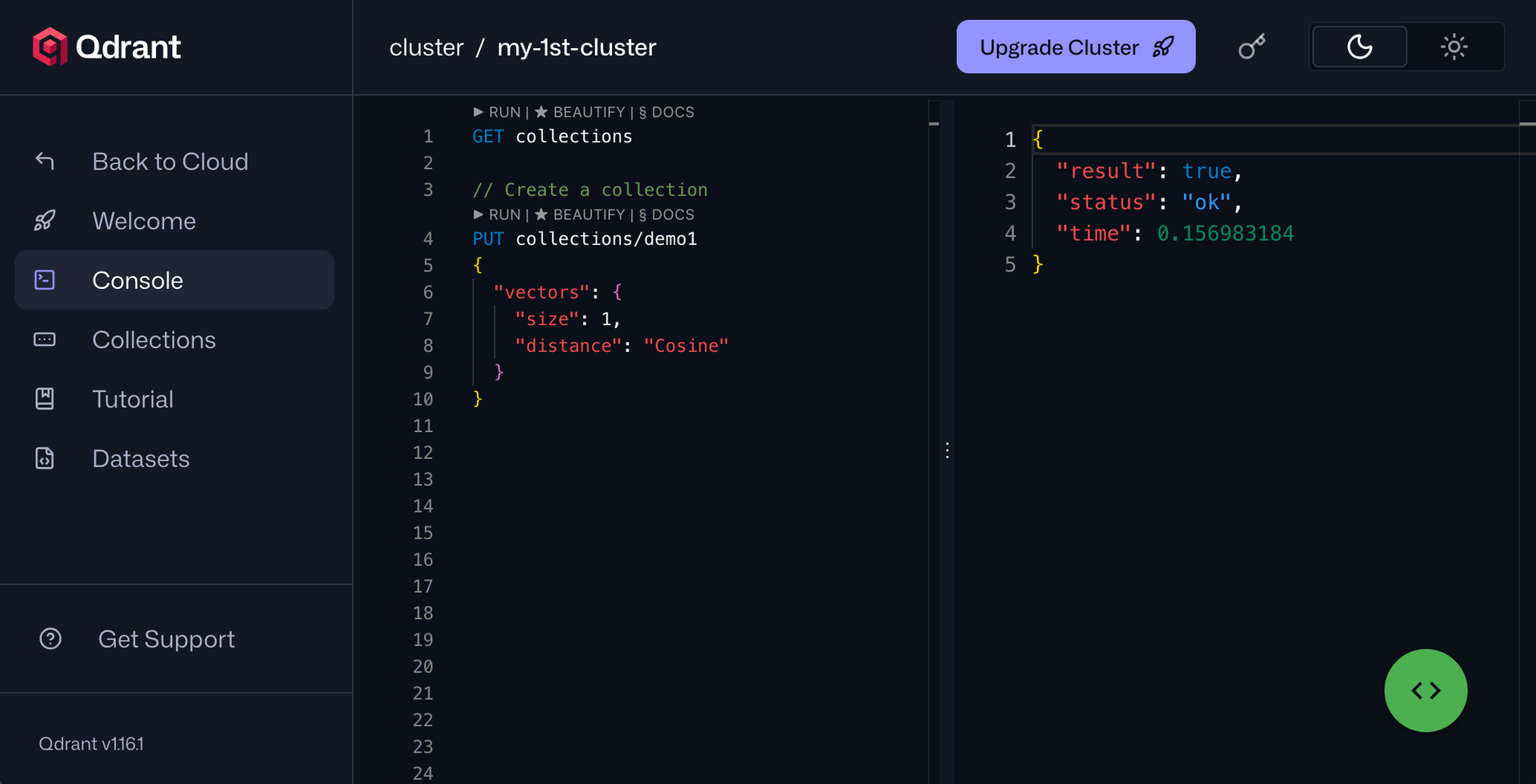

Qdrant: Best for teams that need multiple deployment options

Qdrant is an open-source vector database and similarity search engine written in Rust. Rather than offering multiple index types, it focuses on optimizing a single approach—HNSW—making it highly performant and tunable through custom enhancements and configuration options.

A key strength of Qdrant is payload filtering. Each vector can store a JSON payload with metadata like categories, user IDs, timestamps, or geo-locations. These filters are deeply integrated into the search process, allowing Qdrant to combine semantic similarity with structured conditions (AND, OR, range, geo) in a single, efficient query.
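To illustrate the idea of combining similarity with structured conditions, here is a conceptual sketch in plain Python. The condition format and helper names are hypothetical and do not reproduce Qdrant's API, and the linear scan is only for clarity: it does not reflect how Qdrant integrates filters into its index.

```python
import math

# Hypothetical points: each has a vector and a JSON-like payload.
points = [
    {"id": 1, "vector": [0.90, 0.10], "payload": {"category": "shoes", "price": 80}},
    {"id": 2, "vector": [0.80, 0.20], "payload": {"category": "shoes", "price": 250}},
    {"id": 3, "vector": [0.85, 0.15], "payload": {"category": "bags", "price": 60}},
]

def matches(payload, must):
    """Return True only if every condition holds (logical AND)."""
    for cond in must:
        value = payload.get(cond["key"])
        if "match" in cond and value != cond["match"]:
            return False
        if "range" in cond:
            bounds = cond["range"]
            if value is None:
                return False
            if value < bounds.get("gte", -math.inf) or value > bounds.get("lte", math.inf):
                return False
    return True

def filtered_search(query, must, k=1):
    """Rank only the points whose payload satisfies the filter."""
    candidates = [p for p in points if matches(p["payload"], must)]
    candidates.sort(key=lambda p: math.dist(query, p["vector"]))
    return [p["id"] for p in candidates[:k]]

conditions = [
    {"key": "category", "match": "shoes"},
    {"key": "price", "range": {"lte": 100}},
]
result = filtered_search([0.85, 0.15], conditions)
print(result)  # [1]
```

Without the filter, point 3 would be the closest match. With it, only point 1 satisfies both the category and the price condition.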

Deployment options

Unlike many vector databases that offer only self-hosted or managed setups, Qdrant provides a broader range of deployment models, giving teams more flexibility in balancing control, compliance, and operational overhead.

Local / self-hosted. Run Qdrant via Docker or a binary for development, testing, or full production on your own infrastructure.

Qdrant Edge. A lightweight mode designed to run directly on devices (e.g., IoT gateways or offline systems) without requiring a full server setup.

Qdrant Cloud. A fully managed service that handles scaling, updates, monitoring, backups, and availability across major cloud providers (AWS, GCP, Azure).

Qdrant Hybrid Cloud. Lets you deploy Qdrant in your own cloud account or infrastructure while Qdrant manages the cluster. This keeps data within your environment while offloading operations.

Qdrant Private Cloud. Runs entirely within your own Kubernetes infrastructure, with no dependency on Qdrant Cloud. You retain full control over deployment, security, and operations, but also take on full responsibility for maintenance and observability.

This range makes Qdrant suitable for everything from local experimentation to fully isolated, enterprise-grade deployments.

Scalability

Qdrant scales horizontally through sharding and replication, distributing data across multiple nodes while maintaining availability and fault tolerance.

It supports resharding, allowing you to change the number of shards in an existing collection without recreating it. The process is designed to keep the collection available, though it can introduce temporary performance overhead depending on data size and cluster configuration.

In Qdrant Cloud, scaling operations like shard balancing are handled automatically. In self-hosted setups, the same capabilities exist but require manual configuration and operational management.

For multi-tenant workloads, Qdrant supports custom sharding, enabling you to route data to specific shards using a shard key. This provides more control over data distribution, performance isolation, and tenant separation.
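The routing idea behind shard keys can be sketched as follows. This is a conceptual toy, not Qdrant's implementation or client API: the `ShardedCollection` class and tenant names are hypothetical.

```python
import math

class ShardedCollection:
    """Toy collection where each shard key owns its own list of points."""

    def __init__(self):
        self.shards = {}  # shard key -> list of (point_id, vector)

    def upsert(self, shard_key, point_id, vector):
        self.shards.setdefault(shard_key, []).append((point_id, vector))

    def search(self, shard_key, query, k=1):
        """Search only the shard that belongs to the given key."""
        points = self.shards.get(shard_key, [])
        ranked = sorted(points, key=lambda p: math.dist(query, p[1]))
        return [point_id for point_id, _ in ranked[:k]]

collection = ShardedCollection()
collection.upsert("tenant_a", "a1", [0.0, 0.0])
collection.upsert("tenant_a", "a2", [1.0, 1.0])
collection.upsert("tenant_b", "b1", [0.1, 0.1])

# b1 is the closest vector overall, but it lives in another tenant's shard.
print(collection.search("tenant_a", [0.1, 0.1]))  # ['a1']
```

Because each query touches only one tenant's shard, tenants cannot see each other's data and a busy tenant's workload stays confined to its own shard.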

Performance

Qdrant is designed for low-latency vector search, using a Rust-based implementation and the HNSW algorithm as its core indexing structure. This enables efficient in-memory operations and fast nearest neighbor retrieval. As with most HNSW-based systems, performance depends on dataset size, hardware, and configuration parameters such as recall and graph settings.

Qdrant performs well for real-time workloads, but in high-concurrency scenarios, some distributed systems, such as Milvus or PostgreSQL with pgvector and additional scaling mechanisms, can achieve 10x higher throughput (queries per second) under heavy load.

For teams that need more control, Qdrant provides tuning options to balance latency, throughput, and accuracy, allowing you to adjust parameters that influence search performance and memory usage.

Integrations

Qdrant offers 70+ integrations across five categories: embedding providers such as OpenAI and Cohere; frameworks including LangChain and LlamaIndex; big data tools such as Apache Airflow and Apache Spark; observability platforms like Datadog and OpenLIT; and automation platforms such as n8n and Make.

Extensibility

Qdrant exposes a REST API with an OpenAPI specification, making it easy to generate clients for most languages and integrate with different frameworks.

However, it does not provide a pluggable architecture for replacing core components like indexing algorithms or storage backends. Customization is primarily done through configuration, API usage, and query-time parameters, rather than extending or modifying the engine itself.

Pricing

Qdrant is free to self-host, while Qdrant Cloud offers a free tier and scales with resource-based pricing (CPU, memory, and storage). Detailed pricing is typically provided upon request.

What actually differentiates Qdrant

Flexible deployment model: Supports everything from local and self-hosted setups to managed, hybrid, private cloud, and edge deployments, giving teams control over infrastructure and data placement

Strong metadata filtering: Payload filtering is deeply integrated into the search process, allowing you to combine vector similarity with rich, structured conditions in a single query

Balanced performance and control: Built in Rust with HNSW at its core, Qdrant offers solid low-latency performance with tunable parameters to optimize for accuracy, speed, or memory usage

Milvus: Best for GPU acceleration and greater index-type flexibility

Milvus is a cloud-native vector database written in Go and C++. It is one of the most popular open-source vector databases, with 42k+ GitHub stars. It supports a wide range of index types for dense, binary, and sparse vectors, including FLAT, IVF, HNSW, and DiskANN.

Milvus also supports GPU-accelerated indexing and search via NVIDIA technologies such as CAGRA, a GPU-native graph-based index algorithm that speeds up both index building and query performance when GPU hardware is available.

Among commonly used vector databases, GPU acceleration is relatively uncommon: FAISS also supports it natively, while most others are primarily CPU-based. This makes Milvus particularly well-suited for compute-intensive workloads that benefit from GPU parallelism at scale.

Deployment options

Milvus offers multiple self-hosted deployment modes tailored to different needs.

Milvus Lite is a lightweight version available as a Python package, designed for quick prototyping in local environments such as Jupyter Notebooks or for resource-constrained setups.

Milvus Standalone runs all components on a single machine (typically via Docker), making it suitable for development and small-scale production workloads.

Milvus Distributed is a Kubernetes-based, cloud-native deployment designed for large-scale applications, supporting horizontal scaling to billions of vectors.

For teams that prefer a managed option, Zilliz Cloud—built by the original creators of Milvus—provides a fully managed service with multiple deployment options, including serverless, dedicated clusters, and BYOC (bring your own cloud).

Scalability

Milvus is built for horizontal scaling, with fully separated compute and storage layers that can scale independently based on demand. Query nodes and data nodes scale separately, too, so read-heavy and write-heavy workloads each get the resources they need.

On Zilliz Cloud, scaling is largely handled for you. Compute and storage adjust in response to real-time demand, replicas are synchronized across availability zones for resilience, and teams can configure scheduled scaling policies for predictable workload patterns.

Performance

Milvus is designed for high-performance vector search and can achieve low query latency, often in the single-digit millisecond range for million-scale datasets, depending on configuration and hardware. Its architecture separates storage and compute, using disk-based storage with in-memory caching to handle datasets that exceed available RAM, which helps control costs as data grows.

Milvus is particularly strong in high-throughput scenarios. Its distributed design allows it to handle large volumes of concurrent queries, making it well-suited for applications that need to serve many users simultaneously.

On Zilliz Cloud, performance is further optimized through managed infrastructure and features like AutoIndex, which automatically selects the most appropriate index type and tunes query parameters based on the dataset. Latency can remain low even at large scales, though exact performance depends on deployment configuration and query patterns.

Integrations

Milvus doesn’t ship with built-in integrations; instead, it provides an extensive library of tutorials covering how to connect Milvus with different third-party tools. However, Zilliz Cloud offers 54 integrations across five categories: AI models, data sources, client libraries, observability, and orchestration.

Extensibility

Being fully open source under the Apache 2.0 license, Milvus can be modified at the source level by teams with specific requirements. Since it supports multiple indexing strategies (including HNSW, IVF, and DiskANN), you can choose the most suitable approach based on your workload’s accuracy, speed, and memory trade-offs.

That said, getting the most out of Milvus in distributed mode requires solid Kubernetes and distributed systems expertise, as operating and tuning a cluster involves managing multiple components and infrastructure dependencies.

Pricing

Milvus itself is free and open-source, so when self-hosted, you only pay for the infrastructure you run it on.

For teams that want a managed experience, Zilliz Cloud offers a free tier for exploration and development. Pricing follows a hybrid model: serverless deployments rely on usage-based billing (compute, storage, and operations), while dedicated clusters typically start from around $99/month, with additional costs depending on scale and configuration.

A BYOC (Bring Your Own Cloud) option is also available, allowing you to deploy Zilliz Cloud within your own AWS, GCP, or Azure account while still benefiting from managed services.

What actually differentiates Milvus

Cloud-native, distributed architecture: Designed for horizontal scaling from the ground up, with Kubernetes-based deployment supporting billion-scale datasets and high-throughput workloads

Broad index and data type support: Supports multiple index types (e.g., IVF, HNSW, DiskANN) across dense, sparse, and binary vectors, giving flexibility to optimize for different use cases

GPU acceleration capabilities: Supports GPU-accelerated indexing and search (e.g., via NVIDIA CAGRA), making it well-suited for compute-intensive workloads at scale

Ecosystem and maturity: Backed by Zilliz and widely adopted, with a strong ecosystem and a clear path from open-source Milvus to fully managed Zilliz Cloud

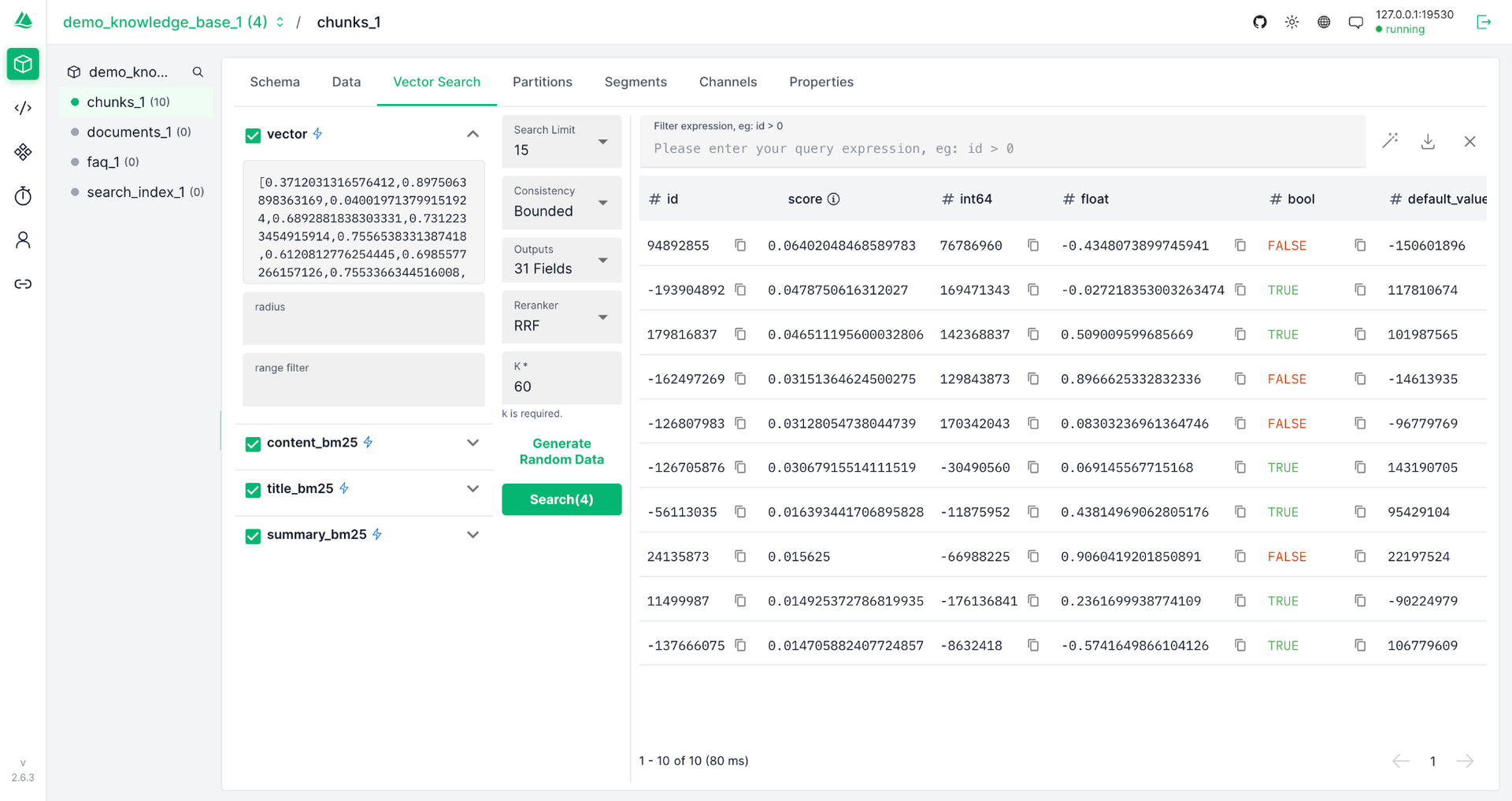

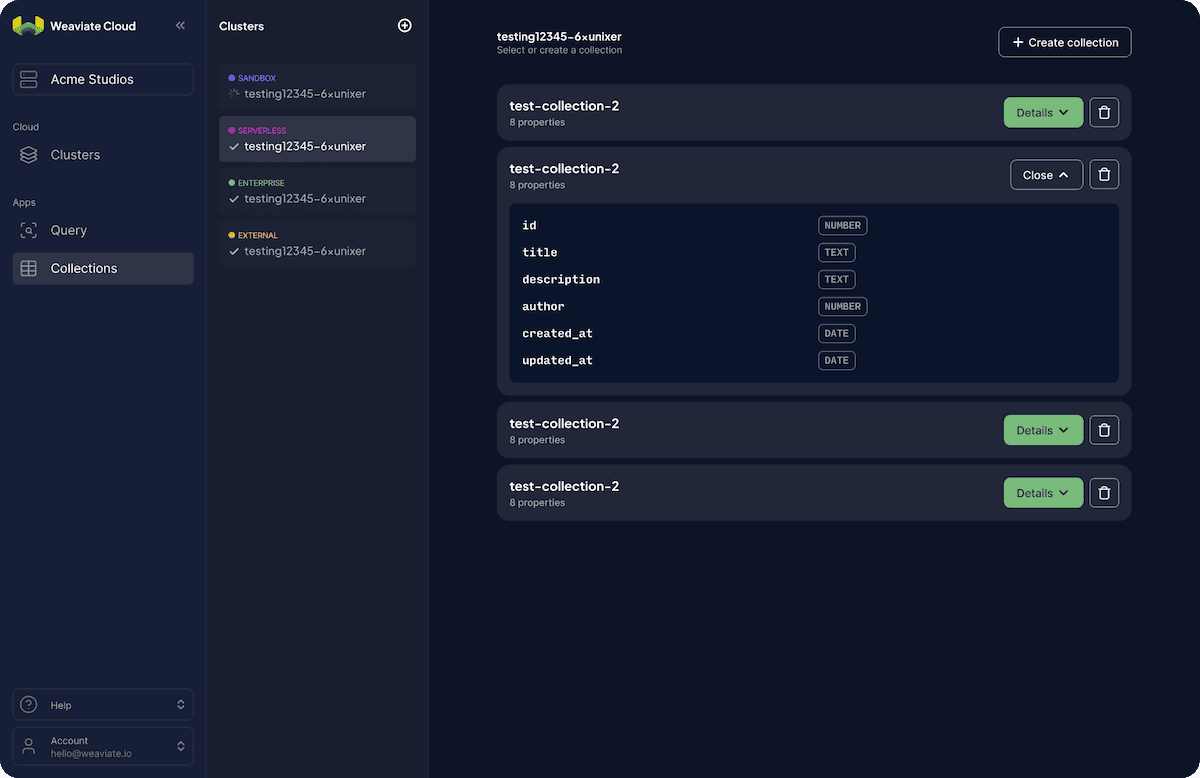

Weaviate: Best for teams that want modularity and built-in AI capabilities

Weaviate, also open-source, is a cloud-native vector database written in Go. It stores both objects and vectors together, allowing you to combine semantic search with structured filtering in a single query.

It supports multiple index types, including HNSW, Flat, Dynamic, and HNSW-based variants. The Dynamic index is particularly useful: it starts as a flat index for small datasets and automatically transitions to HNSW as data grows, reducing the need for manual reconfiguration.

It offers flexible vectorization, letting you configure embedding models at the collection level and update them through configuration rather than code. You can also attach multiple named vectors to a single object, each using a different model, index, and distance metric, enabling you to query the same data from different semantic perspectives.

Weaviate also includes lifecycle management features such as Time-to-Live (TTL), allowing objects to expire and be automatically removed without building custom cleanup logic.

In its managed offering, it provides built-in AI agents for common tasks:

- a query agent for natural language interaction with data,

- a transformation agent for modifying and enriching data, and

- a personalization agent for tailoring outputs based on user-specific context.

Additionally, it offers agent “skills” that help AI coding tools like Claude Code and Cursor better understand and work with Weaviate-specific operations.

Deployment options

For teams that prefer to manage their own infrastructure, Weaviate can be self-hosted using Docker or Docker Compose for local development and testing, or deployed on Kubernetes (e.g., via Helm) for scalable production environments. If you prefer a managed option, Weaviate Cloud handles operations such as scaling, updates, and monitoring, and is also available via AWS Marketplace.

Scalability

Weaviate is built for horizontal scaling from the ground up. Its sharding and replication strategies give teams granular control over how their deployments grow.

For multi-tenant workloads, each tenant is assigned its own shard within a collection, which provides strong logical and physical isolation at the storage layer and enables fast deletes and independent scaling per tenant.

Weaviate supports lazy shard loading and, in its multi-tenant architecture, lazy segment loading within shards, so not all data has to be loaded into memory upfront. This reduces startup overhead and memory usage in large multi-tenant deployments.

Performance

Weaviate’s performance depends heavily on how well you configure it for your workload. Its default HNSW index delivers fast, accurate vector search, but as datasets grow, it can become memory-intensive, making resource planning important. Tuning quantization and index settings can significantly reduce memory overhead while preserving high recall.

Weaviate offers several built-in quantization options, ranging from lighter compression with minimal recall impact to more aggressive approaches that prioritize memory efficiency. Because latency and throughput vary with dataset size, query shape, compression settings, and concurrency, teams with strict performance targets should benchmark Weaviate against their own workloads.
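To make the memory tradeoff concrete, here is a rough back-of-envelope sketch. It assumes uncompressed vectors are stored as 32-bit floats and compares them with generic 8-bit scalar and 1-bit binary compression. The dataset size and dimensionality are made-up values, and the estimate covers only the raw vector payload: it ignores the HNSW graph itself and any uncompressed copies kept for rescoring, so real usage will differ.

```python
def vector_memory_gib(num_vectors: int, dims: int, bits_per_dim: int) -> float:
    """Approximate memory for the raw vector payload only, in GiB."""
    total_bytes = num_vectors * dims * bits_per_dim / 8
    return total_bytes / 2**30


if __name__ == "__main__":
    # Hypothetical dataset: 10 million vectors with 768 dimensions each.
    n, d = 10_000_000, 768
    schemes = [
        ("float32 (uncompressed)", 32),
        ("8-bit scalar", 8),
        ("1-bit binary", 1),
    ]
    for name, bits in schemes:
        print(f"{name:>24}: {vector_memory_gib(n, d, bits):6.2f} GiB")
```

The point of the exercise is the ratio between the rows rather than the absolute numbers, which is why benchmarking recall at each compression level on your own data still matters.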

Integrations

Weaviate offers 60+ integrations across six categories: cloud hyperscalers (AWS, GCP, and Azure), model providers such as Anthropic and Hugging Face, LLM and agent frameworks like LangChain and DSPy, data platforms such as Databricks and Aryn for AI-powered ETL, compute infrastructure tools like Modal and Replicate, and operations and observability platforms such as LangWatch and Ragas.

Extensibility

Weaviate is designed to be extensible through modules—add-ons that integrate into how the database processes data at import and query time. A common approach is to configure existing modules to use different underlying models (e.g., swapping embedding providers) without changing application code, which is useful for rapid prototyping.

For deeper customization, you can build custom modules in Go that integrate with Weaviate’s architecture, for example, by defining how data is vectorized at import or how queries are handled. However, this requires working within Weaviate’s module framework rather than freely modifying core components.

Pricing

Weaviate is free to self-host as an open-source tool, with costs limited to the infrastructure you run it on.

Weaviate Cloud pricing combines subscription-based tiers with usage-based components, depending on the plan and features used. An entry-level Flex plan starts at $45/month, and a free trial of around 14 days is typically available.

Some advanced features, such as AI-powered agents (e.g., the Query Agent), are offered as paid add-ons, usually with a base monthly fee plus usage-based pricing.

What actually differentiates Weaviate

Unified object + vector model: Stores structured data and vectors together, enabling semantic search with filtering and hybrid (keyword + vector) queries in a single request

Flexible vectorization: Supports multiple embedding models and multiple named vectors per object, allowing you to query the same data from different semantic perspectives without changing application logic

Modular extensibility: Uses a module system to integrate vectorization, reranking, and external AI services, allowing customization without modifying the core engine

Managed AI features: In its cloud offering, it includes built-in AI agents and tools for querying, transforming, and personalizing data, reducing the need for an external orchestration layer

pgvector: Best for teams already running PostgreSQL

pgvector is a PostgreSQL extension that adds vector search capabilities directly to Postgres, effectively enabling it to function as a vector database. It supports multiple index types, including HNSW and IVFFlat, and falls back to exact nearest neighbor search (a full scan) when no index is used, guaranteeing perfect recall at the cost of performance.

While most vector databases rely primarily on approximate nearest neighbor (ANN) search for speed, pgvector supports both approximate (via indexes) and exact search, giving you flexibility depending on accuracy and latency requirements. Exact search is not unique to pgvector, but here it needs no special mode: when no vector index is used, the query simply runs as a full scan ordered by distance.
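As an illustration of what exact search means, the sketch below scores every stored vector against the query and keeps the closest `k`. It is plain Python rather than anything pgvector runs internally, and the function names and sample rows are made up for the example.

```python
import math


def cosine_distance(a, b):
    """1 - cosine similarity; smaller means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def exact_nearest(query, rows, k):
    """Full scan: score every row, then keep the k closest ids."""
    scored = [(cosine_distance(query, vec), row_id) for row_id, vec in rows.items()]
    scored.sort()
    return [row_id for _, row_id in scored[:k]]


if __name__ == "__main__":
    # Hypothetical two-dimensional embeddings keyed by row id.
    rows = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.9, 0.1]}
    print(exact_nearest([1.0, 0.0], rows, k=2))
```

Because nothing is skipped, recall is perfect, but the cost grows linearly with the number of rows, which is the tradeoff an ANN index is meant to avoid.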

A key advantage of pgvector is that it enables combining vector similarity with standard SQL queries. You can apply filters such as category, date range, or user permissions directly in a WHERE clause, enabling tight integration with existing relational data.
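A minimal sketch of what such a query could look like is shown below. It only builds the SQL string and parameters, so it runs without a database. The `items` table and its `title`, `category`, `created_at`, and `embedding` columns are hypothetical, `<=>` is pgvector's cosine distance operator, and the `%s` placeholders assume a psycopg-style driver.

```python
def to_vector_literal(values):
    """Format a Python list as a pgvector text literal, e.g. '[0.1,0.2]'."""
    return "[" + ",".join(str(float(v)) for v in values) + "]"


def build_filtered_search(query_embedding, category, since, limit=5):
    """Return (sql, params) for a similarity search with relational filters."""
    sql = (
        "SELECT id, title, embedding <=> %s::vector AS distance "
        "FROM items "                                   # hypothetical table
        "WHERE category = %s AND created_at >= %s "     # ordinary SQL filters
        "ORDER BY embedding <=> %s::vector "            # nearest first
        "LIMIT %s"
    )
    vec = to_vector_literal(query_embedding)
    params = (vec, category, since, vec, limit)
    return sql, params


if __name__ == "__main__":
    sql, params = build_filtered_search([0.1, 0.2, 0.3], "shoes", "2024-01-01")
    print(sql)
    print(params)
```

With a live connection, the pair would be passed to something like `cursor.execute(sql, params)`. Ordering by the distance expression itself, rather than by the alias, follows the form used in pgvector's own examples.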

Recent versions (e.g., 0.8.0) introduced improvements such as iterative index scans, which can expand the candidate set during query execution to improve result quality when filters would otherwise reduce the number of matches.

Deployment options

Since pgvector is a PostgreSQL extension, you can deploy it anywhere you run Postgres—on-premises, in containers, or on managed services. It is supported by most major managed PostgreSQL providers, including Supabase, Neon, AWS RDS, Google Cloud SQL, and Azure Database for PostgreSQL.

Scalability

You scale pgvector the same way you scale PostgreSQL: vertically by increasing memory, CPU, and storage, or horizontally with read replicas or sharding solutions such as Citus. For many teams with tens of millions of vectors and moderate query traffic, this approach is sufficient.

Beyond that point, the system can start to show limitations. pgvector relies on CPU-based indexing and does not natively support GPU acceleration or disk-optimized ANN indexes such as DiskANN, which some purpose-built vector databases use to handle very large datasets more efficiently. At billion scale, workloads typically require more advanced sharding strategies or a move to a dedicated vector database.

Performance

For small- to mid-scale workloads, pgvector performs well, with low query latency at moderate dataset sizes when properly configured.

Where pgvector can fall short compared to purpose-built vector databases is in latency consistency under load. Tail latency—the slowest queries in a distribution—can become more noticeable as concurrency increases, whereas dedicated vector databases are often optimized to keep latency more predictable.
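To see why tail latency is tracked separately from the average, consider this small sketch. The latency samples are invented for illustration, and the percentile function uses the simple nearest-rank definition, which is only one of several conventions.

```python
def percentile(samples, pct):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    ordered = sorted(samples)
    # Ceiling division without floats: smallest rank covering pct% of samples.
    rank = max(1, -(-pct * len(ordered) // 100))
    return ordered[rank - 1]


if __name__ == "__main__":
    # Made-up query latencies in milliseconds: mostly fast, a few slow outliers.
    latencies_ms = [12] * 90 + [45] * 8 + [300] * 2
    mean = sum(latencies_ms) / len(latencies_ms)
    print(f"mean={mean:.1f} ms")
    for pct in (50, 95, 99):
        print(f"p{pct}={percentile(latencies_ms, pct)} ms")
```

In this made-up distribution the median and mean look healthy while the 99th percentile is more than an order of magnitude slower, which is the pattern to watch for when load-testing under concurrency.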

On throughput, pgvector can be competitive at moderate scale, especially when paired with extensions like pgvectorscale that improve parallelism and query performance. The caveat is that such extensions are not available on all managed platforms, so the deployment setup plays an important role.

Integrations

Because pgvector is a PostgreSQL extension, most frameworks that support PostgreSQL can work with it. Object-relational mapping (ORM) tools, query builders, and connection poolers continue to function as usual, although ORMs may require raw SQL or extensions to fully support vector operations. In practice, if your stack already talks to Postgres, it can work with pgvector.

Beyond that, frameworks like LangChain and LlamaIndex support pgvector as a vector store backend, allowing you to plug it into retrieval pipelines without building the retrieval layer from scratch. pgvector is also embedding-model agnostic: you can use vectors generated by providers like OpenAI, Cohere, or Hugging Face, since embeddings are simply stored as vector columns in Postgres.

Extensibility

Since pgvector runs inside PostgreSQL, you can take advantage of the broader Postgres ecosystem, including replication, backups, RBAC, full-text search, and a wide range of extensions. These capabilities are available out of the box as part of Postgres, and pgvector can be combined with other extensions.

Where pgvector is more constrained is in vector-specific behavior at scale. When combining filters with approximate nearest neighbor search, additional tuning is often needed to maintain recall and performance. Likewise, high-ingestion workloads and index performance depend on how well Postgres and index parameters are configured. As a result, while pgvector is highly extensible within the Postgres ecosystem, it offers fewer built-in optimizations for vector-specific workloads than purpose-built vector databases.

Pricing

pgvector is free and open source, so the only costs are your Postgres hosting and infrastructure. For teams that need a managed setup, pgvector is included on virtually every major managed Postgres provider, including AWS RDS, Google Cloud SQL, Azure Database for PostgreSQL, Supabase, and Neon. You pay standard Postgres instance pricing, as opposed to a separate vector database fee.

What actually differentiates pgvector

Native SQL integration: Runs inside PostgreSQL, allowing you to combine vector similarity search with joins, filters, and aggregations in a single SQL query

Exact + approximate search flexibility: Supports both exact nearest neighbor search (full scan for perfect recall) and approximate search via indexes like HNSW and IVFFlat

Seamless use with existing data: Works directly with relational data, so vectors, metadata, and business logic live in the same database without additional infrastructure

Mature ecosystem: Benefits from PostgreSQL’s reliability, tooling, and extensions, making it easier to integrate into existing systems without introducing a new database

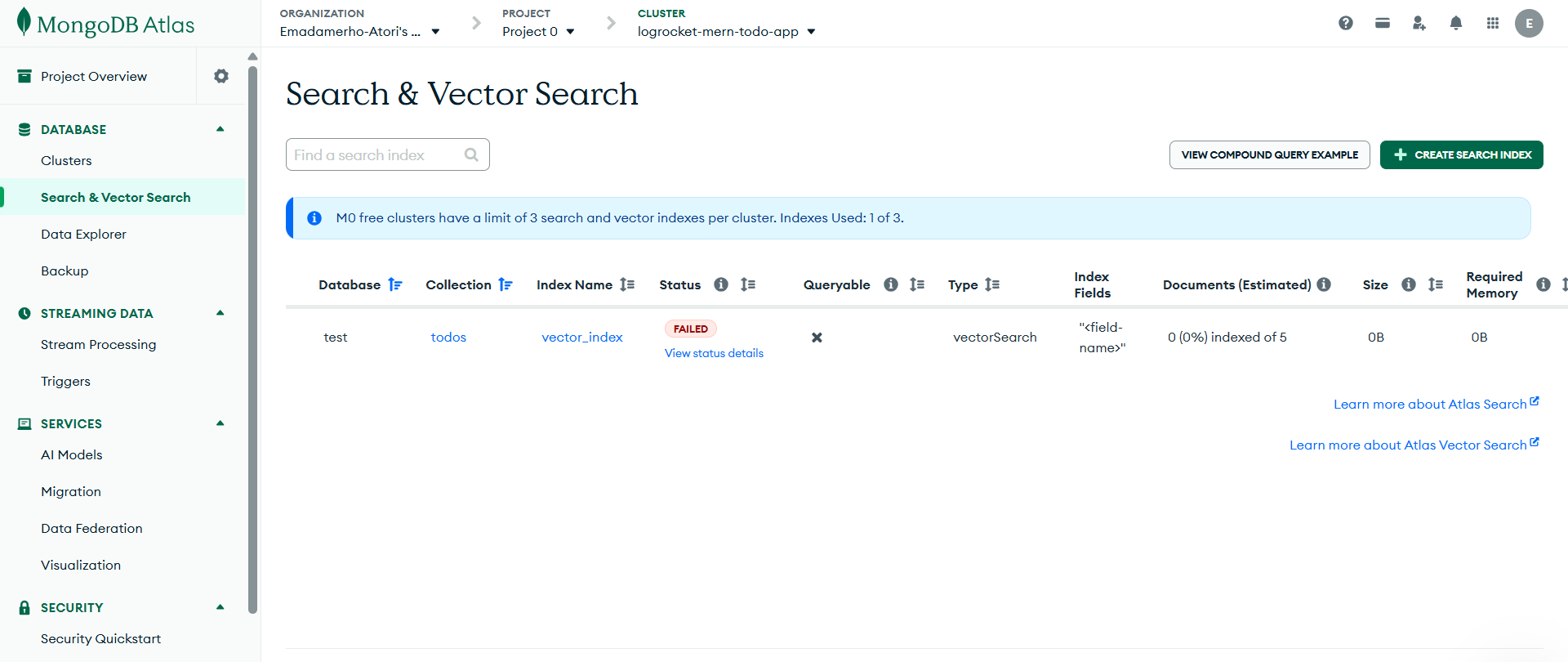

MongoDB Atlas Vector Search: Best for teams already on MongoDB

MongoDB is a NoSQL database that extends its core offering with the Atlas Vector Search service. Because vector data lives alongside your MongoDB documents, you get the same enterprise-grade security, backups, and access controls across your vectors and operational data without managing a separate system.

Atlas Vector Search is based on the HNSW algorithm for approximate nearest neighbor search. It supports both ANN (approximate) queries for speed and exact nearest neighbor (ENN) queries, which provide full recall but with higher latency.

Vector search is integrated directly into MongoDB’s aggregation pipeline via the $vectorSearch stage. This allows you to combine vector similarity search with metadata filtering, full-text search, geospatial queries, and other aggregation operations in a single query, without needing separate systems or data synchronization.
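The sketch below shows roughly what such a pipeline looks like when written as a Python data structure. It only builds the pipeline, so it runs without a cluster. The index name `vector_index` and the `embedding`, `genre`, and `title` fields are hypothetical, and any field used in `filter` is assumed to be declared as a filter field in the vector index definition.

```python
def build_vector_search_pipeline(query_vector, genre, limit=5, num_candidates=100):
    """Build an aggregation pipeline: vector search with a filter, then a projection."""
    return [
        {
            "$vectorSearch": {
                "index": "vector_index",          # hypothetical index name
                "path": "embedding",              # hypothetical vector field
                "queryVector": query_vector,
                "numCandidates": num_candidates,  # ANN candidates considered
                "limit": limit,                   # documents returned
                "filter": {"genre": {"$eq": genre}},
            }
        },
        {
            "$project": {
                "_id": 0,
                "title": 1,
                "score": {"$meta": "vectorSearchScore"},
            }
        },
    ]


if __name__ == "__main__":
    pipeline = build_vector_search_pipeline([0.1, 0.2, 0.3], genre="drama")
    print(pipeline)
```

With PyMongo, the result would typically be passed to `collection.aggregate(pipeline)`, and further stages can be appended after the `$vectorSearch` stage.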

Deployment options

For teams that want a fully managed option, MongoDB Atlas is the company’s cloud platform that handles infrastructure, scaling, backups, and failover.

If you prefer to manage your own infrastructure, vector search capabilities are available in MongoDB Community Edition (free) and MongoDB Enterprise Advanced (paid). However, support for vector search in self-managed deployments has historically lagged behind Atlas and may be more limited depending on the version and configuration.

As a result, Atlas is generally the recommended option for production use, as it provides the most complete, stable, and fully supported implementation of vector search.

Scalability

Atlas Vector Search allows you to scale vector search workloads independently from your transactional database by using dedicated Search Nodes. This separation helps ensure that vector queries do not compete with operational workloads for resources. You can scale throughput by adding more Search Nodes or upgrading to higher-tier nodes with greater processing capacity. According to MongoDB, moving to dedicated Search Nodes reduced query time by 40 to 60 percent for many complex queries.

Atlas clusters also support sharding, which distributes data across multiple nodes. However, it is primarily designed for managing operational workloads rather than serving as the main scaling mechanism.

Performance

Based on MongoDB's own internal benchmarks, Atlas Vector Search retains 90 to 95 percent accuracy with under 50ms query latency at large scale when quantization is configured—a strong result that puts it in the same range as purpose-built vector databases for mid-scale workloads.

At a very large scale, especially for pure vector workloads, purpose-built vector databases like Milvus or Pinecone are typically better optimized for high throughput and distributed retrieval. However, Atlas Vector Search remains a strong option for mid-scale workloads where tight integration with operational data is more important than achieving maximum vector search performance.

Integrations

Since Atlas Vector Search is part of MongoDB Atlas, it works with a broad ecosystem of AI frameworks. These include open-source tools like LangChain4j, LlamaIndex, Haystack, Spring AI, and Microsoft Semantic Kernel, as well as enterprise platforms such as Amazon Bedrock and Google Vertex AI, which can be used alongside MongoDB for embedding generation and model inference.

For teams working with real-time data, Atlas Vector Search integrates with Confluent Cloud via the MongoDB Kafka Connector. This allows data streams from across your systems to flow continuously into MongoDB and remain searchable as your data changes. Embeddings can then be generated as part of your application or Atlas workflows, enabling use cases such as live product recommendations or real-time Q&A systems.

Extensibility

Atlas Vector Search is a closed-source, fully managed platform, meaning you cannot modify the underlying search engine or introduce custom indexing algorithms. While MongoDB provides configuration options and rich filtering capabilities through its query language, the core search behavior and available features are limited to what the platform supports out of the box.

Pricing

MongoDB Atlas Vector Search is not priced as a standalone product and is included as part of the Atlas platform, so costs depend on the cluster tier you deploy.

Atlas offers three tiers: a free forever tier at $0/hour suitable for testing the platform, a Flex tier starting at $0.011/hour for prototyping and development, and dedicated clusters starting at $0.08/hour, ideal for production workloads and scaling up.

What actually differentiates Atlas Vector Search

Native integration with MongoDB: Runs directly within MongoDB Atlas, allowing you to combine vector search with document queries, filters, aggregations, and other database operations in a single pipeline

Unified data model: Stores vectors alongside JSON documents, so embeddings, metadata, and operational data live in the same system without additional infrastructure

Flexible query composition: Uses the aggregation pipeline to combine semantic search with full-text search, geospatial queries, and graph lookups in one request

Managed, production-ready platform: Fully managed in Atlas with built-in scaling, security, backups, and high availability, making it easy to deploy and operate at scale

Ecosystem and tooling: Benefits from MongoDB’s mature ecosystem, including drivers, integrations, and developer tooling, reducing friction for teams already using MongoDB

Facebook AI Similarity Search (FAISS): Best for research and custom pipelines

FAISS, written in C++ with Python bindings, is an open-source vector-search library developed by Meta’s FAIR team for efficient similarity search and clustering of dense vectors. Unlike the other options we’ve reviewed, FAISS is not a database: it does not provide a server, API layer, authentication, or built-in data management.

Because FAISS is a library, you import it into your application, build and query indexes directly (typically in memory), and handle everything else yourself, including persistence, updates, and serving queries over a network.

We included it because of its popularity, especially in research and performance-critical systems, and because it has influenced or is used within vector engines like Milvus. It’s a strong option if you want to skip the database layer entirely and work directly with a high-performance indexing library. The tradeoff is additional engineering effort in exchange for speed and full control.

Rather than relying on a single indexing approach, FAISS is a full similarity search toolkit with over 20 index types across four main families: flat, graph-based, IVF, and quantization-based. This gives you a level of low-level control that’s hard to find or match.

What makes FAISS particularly powerful is that these index types can be mixed and chained. For example, you can combine IVF clustering with PQ compression to get fast approximate search at a fraction of the memory cost, or use HNSW as a coarse quantizer inside an IVF index for better cluster assignment at scale. You choose exactly how your vectors are indexed, queried, and compressed, and tune every parameter yourself.
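To give a feel for the IVF half of that combination, here is a toy pure-Python sketch. It is not FAISS's API or implementation and it omits PQ compression entirely: it clusters vectors with a few rounds of k-means, stores each vector's id in the inverted list of its nearest centroid, and at query time scans only the `nprobe` closest lists. All names and parameters are made up for illustration.

```python
import random


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest_centroid(vec, centroids):
    return min(range(len(centroids)), key=lambda i: sq_dist(vec, centroids[i]))


def train_centroids(vectors, nlist, iterations=5, seed=0):
    """A few rounds of Lloyd's k-means to pick nlist coarse centroids."""
    rng = random.Random(seed)
    centroids = [list(v) for v in rng.sample(vectors, nlist)]
    for _ in range(iterations):
        buckets = [[] for _ in range(nlist)]
        for v in vectors:
            buckets[nearest_centroid(v, centroids)].append(v)
        for i, bucket in enumerate(buckets):
            if bucket:  # keep the old centroid if a cluster emptied out
                centroids[i] = [sum(col) / len(bucket) for col in zip(*bucket)]
    return centroids


def build_inverted_lists(vectors, centroids):
    """Assign each vector id to the list of its nearest centroid."""
    lists = [[] for _ in centroids]
    for idx, v in enumerate(vectors):
        lists[nearest_centroid(v, centroids)].append(idx)
    return lists


def ivf_search(query, vectors, centroids, lists, k, nprobe):
    """Scan only the nprobe inverted lists whose centroids are closest to the query."""
    order = sorted(range(len(centroids)), key=lambda i: sq_dist(query, centroids[i]))
    candidates = [idx for i in order[:nprobe] for idx in lists[i]]
    candidates.sort(key=lambda idx: sq_dist(query, vectors[idx]))
    return candidates[:k]


if __name__ == "__main__":
    rng = random.Random(1)
    vectors = [[rng.random() for _ in range(4)] for _ in range(200)]
    centroids = train_centroids(vectors, nlist=8)
    lists = build_inverted_lists(vectors, centroids)
    print(ivf_search([0.5] * 4, vectors, centroids, lists, k=5, nprobe=2))
```

Raising `nprobe` scans more lists and moves results toward the exact answer at the cost of speed. Setting it to the number of lists degenerates into a full scan.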

The tradeoff is that FAISS has no native metadata filtering, no hybrid search, and no support for storing or querying structured data alongside vectors, meaning anything beyond similarity search must be built on top of it.

Deployment options

FAISS is a library, not a server or service, so “deployment” means something different here than it does for the databases in this article. There is no standalone system to configure or host—instead, you install the library and use it directly within your application.

You import it into your code, build and query indexes (typically in memory), and run everything within your application process. This means FAISS runs wherever your application runs: on a laptop, a cloud VM, an on-premises server, or a GPU-enabled cluster.

Scalability

FAISS can handle billion-scale workloads as it was designed for large-scale similarity search at Meta. However, scaling it is very different from scaling a full database. FAISS does not provide built-in clustering, replication, or automatic sharding, so once your dataset outgrows a single machine, you need to build that infrastructure yourself.

The most common approach is manual sharding:

- splitting the dataset across multiple machines,

- running a FAISS index on each shard,

- issuing queries in parallel, and

- merging the results at the application level.
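The steps above can be sketched as a scatter-gather pattern. In this illustration each shard is an in-memory list searched by brute force, standing in for a separate machine running its own FAISS index, and a thread pool stands in for parallel network calls. The function names are hypothetical.

```python
import heapq
from concurrent.futures import ThreadPoolExecutor


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def split_into_shards(vectors, num_shards):
    """Round-robin split; each entry keeps its global id."""
    shards = [[] for _ in range(num_shards)]
    for gid, vec in enumerate(vectors):
        shards[gid % num_shards].append((gid, vec))
    return shards


def search_shard(shard, query, k):
    """Stand-in for a per-shard index search: returns (distance, global_id) pairs."""
    scored = ((sq_dist(query, vec), gid) for gid, vec in shard)
    return heapq.nsmallest(k, scored)


def sharded_search(shards, query, k):
    """Query every shard in parallel, then merge the partial top-k lists."""
    with ThreadPoolExecutor(max_workers=len(shards)) as pool:
        partials = list(pool.map(lambda s: search_shard(s, query, k), shards))
    merged = heapq.nsmallest(k, (hit for part in partials for hit in part))
    return [gid for _, gid in merged]


if __name__ == "__main__":
    vectors = [[float(i), 0.0] for i in range(20)]
    shards = split_into_shards(vectors, num_shards=3)
    print(sharded_search(shards, [4.2, 0.0], k=3))
```

Each shard must return its own top `k` for the merged result to be correct, because the global top `k` could in principle all come from a single shard. Retries, timeouts, and shard failures are left out here and are exactly the parts you would have to engineer yourself.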

FAISS also provides tools and examples for multi-node and distributed setups, but these are closer to research utilities than production-ready systems. They lack features such as coordination, fault tolerance, and system-level guarantees that are typically expected from a distributed database.

Performance

FAISS is one of the fastest options available for raw vector search, and in many benchmarks, it can outperform full vector databases like Milvus on pure similarity search tasks, especially when GPU acceleration is used. Because it does not include layers such as storage management, replication, or query orchestration, more of the system’s resources can be dedicated directly to search.

At a larger scale, however, things become more complex. Building indexes on very large datasets can take significant time—sometimes hours or days, depending on the data size and hardware—and as datasets grow, tuning search parameters becomes an ongoing tradeoff between speed and accuracy.

Unlike full vector databases such as Qdrant or Pinecone, which automate many of these optimizations, FAISS requires continuous manual tuning and engineering effort to maintain performance.

Integrations

FAISS does not provide its own ecosystem of connectors. Instead, it is typically used through higher-level frameworks built on top of it. For example, LangChain includes a FAISS integration that wraps it as a vector store, allowing it to be used in retrieval chains, RAG pipelines, and agent workflows. LlamaIndex also supports FAISS as a vector store backend, making it easy to use within existing pipelines built on that framework.

Extensibility

You could argue that FAISS is one of the most extensible options, since, as a library, it has no server layer or abstraction hiding its internals. You can modify its codebase and embed it directly into your own systems to suit your needs.

The core library is implemented in C++ and can be used as a building block for larger systems, although it does rely on supporting libraries such as BLAS and optional GPU dependencies. This is how some vector database systems have incorporated FAISS components into their architectures, building additional infrastructure around it. With sufficient C++ expertise, you can extend FAISS by modifying existing indexes or implementing new algorithms, but this requires working directly with its internal code rather than simply configuring it.

Pricing

FAISS is completely free and open-source under the MIT license, with Meta developing and maintaining it. There are no paid tiers or enterprise plans. However, using FAISS is not cost-free: you still need to account for the engineering time and infrastructure required to run it in production.

With a software engineering background, Nefe demystifies technology-specific topics—such as web development, cloud computing, and data science—for readers of all levels.
