Testing and evaluating AI models is one of the most critical steps in building reliable AI systems.

A poorly tested model can block legitimate transactions, discriminate against certain groups of users, make flawed medical recommendations, or quietly degrade for weeks before anyone notices. The consequences range from displeased customers to serious legal and reputational risk.

In this article, we draw on our experience to walk you through what that process looks like in practice, from validating your data before training begins, to choosing metrics that reflect real business value, to monitoring models once they are live in production.

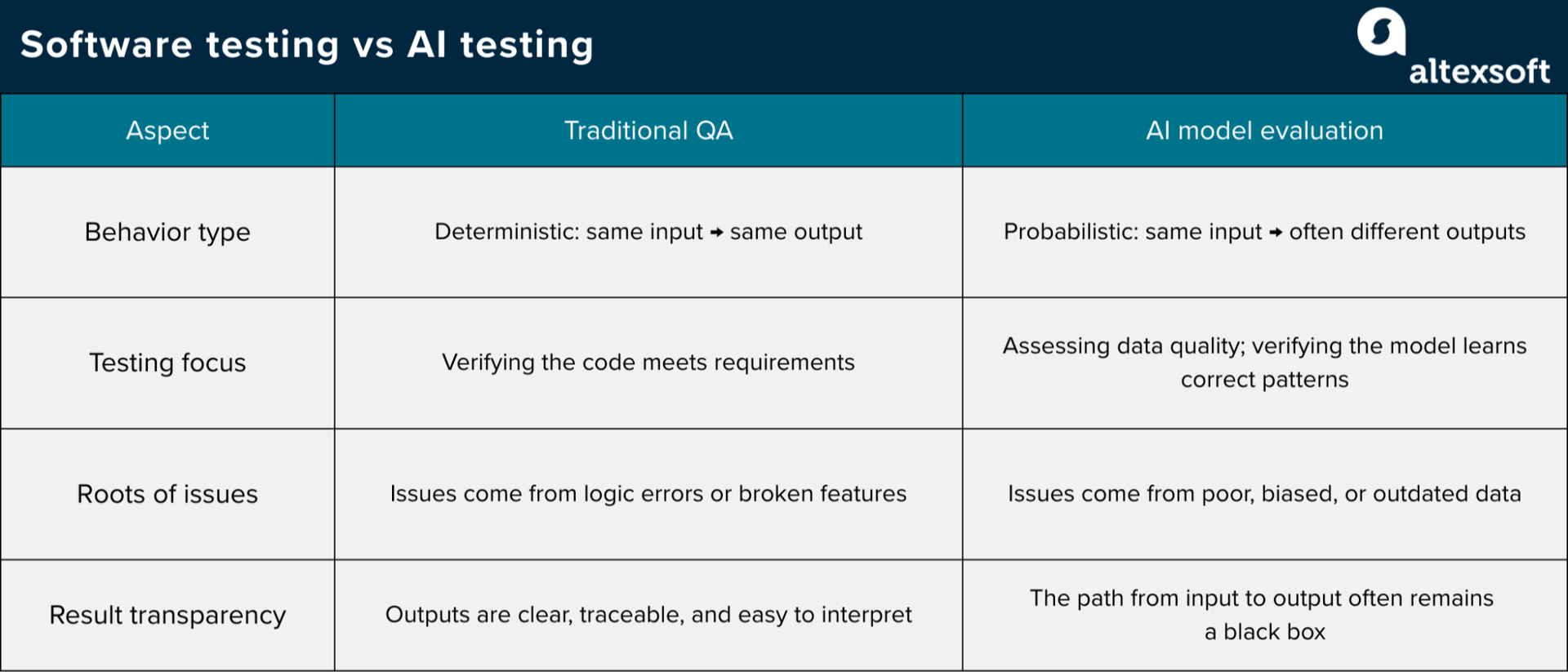

How testing and evaluating AI models differ from traditional QA

While traditional QA engineering deals with deterministic software, where the system follows fixed rules written by developers, AI models are probabilistic, meaning their behavior is learned from data rather than defined by hard-coded rules.

Mariia Khlistyk, AI Engineer and Data Scientist at AltexSoft, explains how this changes the approach to testing: “With traditional software, you write code that says ‘if X happens, do Y’—it's predictable, repeatable. Give it the same input and you'll get the same output every time. But with AI models, you might get slightly different outputs even with identical inputs.”

The focus also changes. “In traditional QA, you're verifying that the code does what the requirements say. With AI, you’re constantly asking if the data quality is holding up and if the model is still learning the right patterns,” says Mariia. “You end up caring a lot more about statistical metrics like precision, recall, and F1 scores, which measure different aspects of how well a model identifies the right outcomes, rather than relying on simple pass/fail tests.”

Another key difference is that AI model quality depends heavily on data. In traditional QA, most issues come from logic errors or broken features, while many failures in AI systems come from missing cases, biased samples, or data that no longer reflects reality.

Also, while traditional software testing is straightforward and traceable, AI models can develop a black-box problem where it's unclear how they arrive at their outputs. Mariia notes, “Many AI models, especially deep learning ones, aren’t transparent about how they make decisions. You need specialized testing for explainability and fairness. You're essentially testing whether the model has learned biases or problematic patterns from the data.”

Validating data quality before model training

AI models absorb the characteristics of their training data—including its flaws. Poor data quality compounds through every stage of the machine learning pipeline and doesn't just affect one feature or function.

“Think of QA's role here as being the guardian of data quality because everything downstream depends on it. A small labeling error in training data can become a systematic blind spot in production. The model is learning patterns from whatever you feed it, including the garbage,” says Mariia.

Data QA goes beyond simply checking for missing values or formatting errors. The goal is to verify that datasets are accurate, complete, consistent, and reflect the real-world conditions the model will face in production.

Effective machine learning all begins with proper data preparation

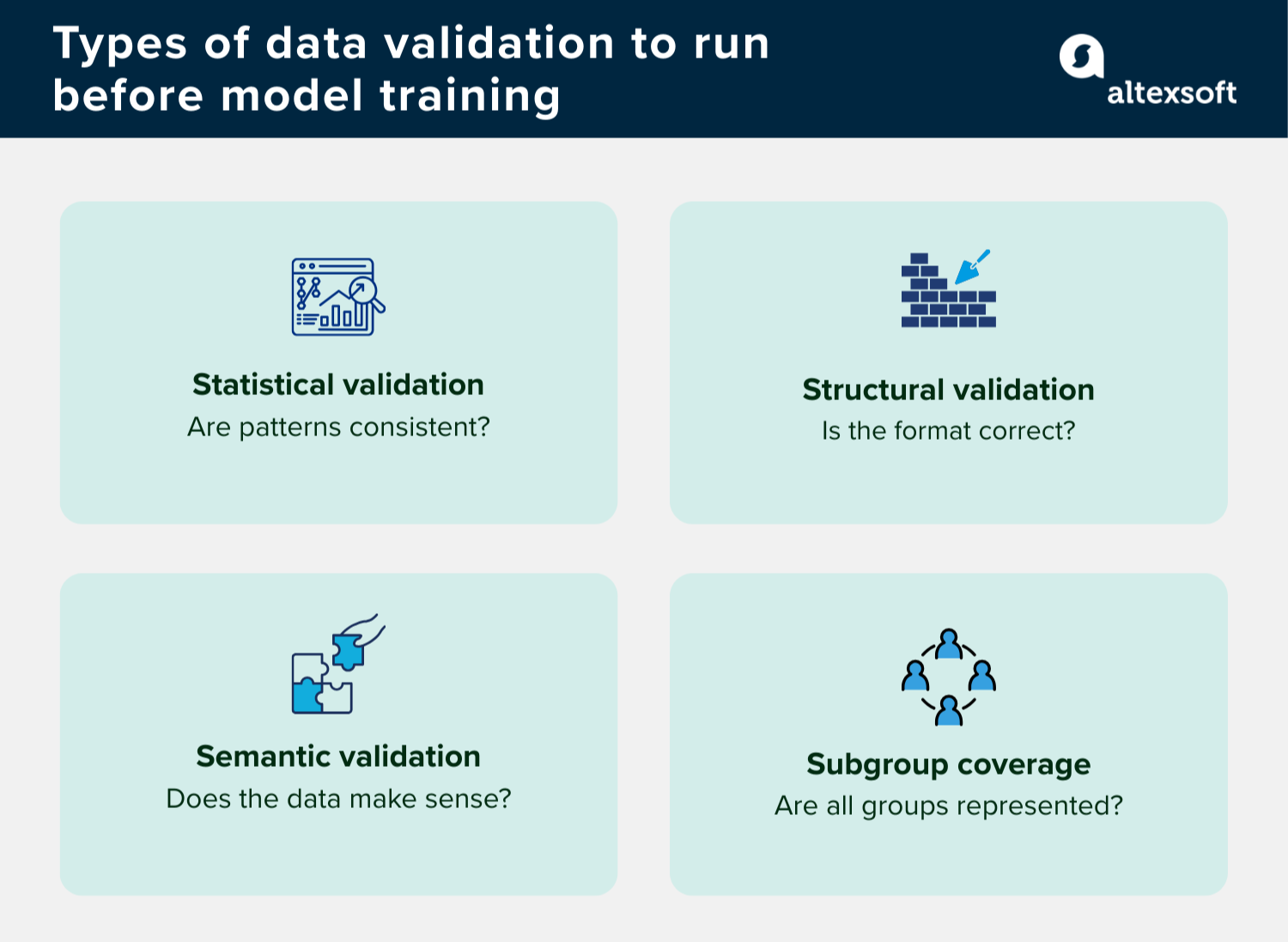

Data validation

Validating data integrity before training can take several forms.

Statistical validation checks whether your data aligns with expected patterns. If a feature that typically ranges between 0 and 100 suddenly jumps into the thousands, that is a clear signal to investigate.

Structural validation ensures the data is properly formatted. It confirms that the correct data types appear in the correct fields, that required columns are not empty, and that there are no duplicate records.

Semantic validation verifies that the data follows logical rules. For example, a purchase cannot be timestamped before the order is placed and a person’s age cannot be negative.
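The three kinds of checks above can be sketched as plain Python rules applied to each record. This is a minimal illustration under stated assumptions, not how any particular validation tool works: the field names (`order_id`, `age`, `score`, `ordered_at`, `purchased_at`), the 0 to 100 range, and the `validate` function are all hypothetical.

```python
from datetime import datetime

def validate(rows):
    """Return (row_index, problem) pairs for every rule a record breaks."""
    problems = []
    seen_ids = set()
    for i, row in enumerate(rows):
        age = row.get("age")
        # Structural: required field present and of the expected type.
        if not isinstance(age, int):
            problems.append((i, "structural: age missing or not an integer"))
        # Structural: no duplicate records (checked by primary key).
        if row["order_id"] in seen_ids:
            problems.append((i, "structural: duplicate order_id"))
        seen_ids.add(row["order_id"])
        # Statistical: value far outside the range normally observed.
        if not 0 <= row["score"] <= 100:
            problems.append((i, "statistical: score outside expected 0-100 range"))
        # Semantic: logical rules the data must obey.
        if isinstance(age, int) and age < 0:
            problems.append((i, "semantic: negative age"))
        if row["purchased_at"] < row["ordered_at"]:
            problems.append((i, "semantic: purchase timestamped before order"))
    return problems

# Hypothetical records: the first is clean, the other two break several rules.
records = [
    {"order_id": 1, "age": 34, "score": 72,
     "ordered_at": datetime(2024, 1, 5), "purchased_at": datetime(2024, 1, 6)},
    {"order_id": 2, "age": -3, "score": 4500,
     "ordered_at": datetime(2024, 1, 7), "purchased_at": datetime(2024, 1, 6)},
    {"order_id": 2, "age": None, "score": 50,
     "ordered_at": datetime(2024, 1, 8), "purchased_at": datetime(2024, 1, 9)},
]

for index, problem in validate(records):
    print(index, problem)
```

A real statistical check would usually compare against a distribution learned from historical data rather than a fixed range; the hard-coded bounds just keep the example short.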

Coverage across subgroups—segments within your data, such as age ranges and geographic regions—is another area you have to pay attention to. If a model is being built to serve all users, but the training data is heavily concentrated on one segment, the model will reflect that imbalance.
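A quick way to spot this kind of imbalance is to compute each segment's share of the training data. In the sketch below, the region labels, the `coverage_report` helper, and the 10% minimum share are assumptions for illustration; in practice you would compare shares against the population the model is actually meant to serve.

```python
from collections import Counter

def coverage_report(segments, min_share=0.10):
    """Map each segment to (share of rows, whether that share is below min_share)."""
    counts = Counter(segments)
    total = sum(counts.values())
    return {seg: (count / total, count / total < min_share) for seg, count in counts.items()}

# Hypothetical region labels for 1,000 training rows.
regions = ["north"] * 820 + ["south"] * 150 + ["west"] * 30

for region, (share, underrepresented) in coverage_report(regions).items():
    flag = "UNDERREPRESENTED" if underrepresented else "ok"
    print(f"{region}: {share:.1%} {flag}")
```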

Much of this process can be automated. As Mariia explains, “Tools like Great Expectations, TensorFlow Data Validation, or Pandera let you define expectations once and then automatically validate every new batch of data. You create what I call data quality scorecards that run at different levels—individual features, whole records, and the entire dataset.”
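The idea of defining expectations once and scoring every new batch at several levels can be sketched without any library. To be clear, this is not the API of Great Expectations, TensorFlow Data Validation, or Pandera; the `scorecard` function, the expectations, and the dataset-level checks are hypothetical stand-ins for what those tools provide.

```python
def scorecard(rows, expectations):
    """Score a batch at three levels: per feature, per record, and whole dataset.

    expectations maps a feature name to a predicate its value must satisfy.
    """
    n = len(rows)
    # Feature level: share of rows in which each feature meets its expectation.
    feature_scores = {
        name: sum(check(row.get(name)) for row in rows) / n
        for name, check in expectations.items()
    }
    # Record level: a record passes only if every expectation holds.
    record_passes = [
        all(check(row.get(name)) for name, check in expectations.items())
        for row in rows
    ]
    record_score = sum(record_passes) / n
    # Dataset level: batch-wide properties, here a minimum size and no duplicate rows.
    dataset_checks = {
        "min_rows": n >= 3,
        "no_duplicate_rows": len({tuple(sorted(r.items())) for r in rows}) == n,
    }
    return feature_scores, record_score, dataset_checks

# Expectations are defined once and reused for every incoming batch.
expectations = {
    "age": lambda v: isinstance(v, int) and 0 <= v <= 120,
    "country": lambda v: v in {"US", "DE", "UA"},
}
batch = [
    {"age": 30, "country": "US"},
    {"age": None, "country": "DE"},
    {"age": 45, "country": "FR"},
    {"age": 52, "country": "UA"},
]
features, records_ok, dataset = scorecard(batch, expectations)
print(features, records_ok, dataset)
```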

Data pipeline testing

Raw data doesn't arrive ready for model training. Instead, it travels through a sequence of transformations in a data pipeline—getting cleaned, formatted, and enriched. Each stage is a potential point of failure and needs to be tested.

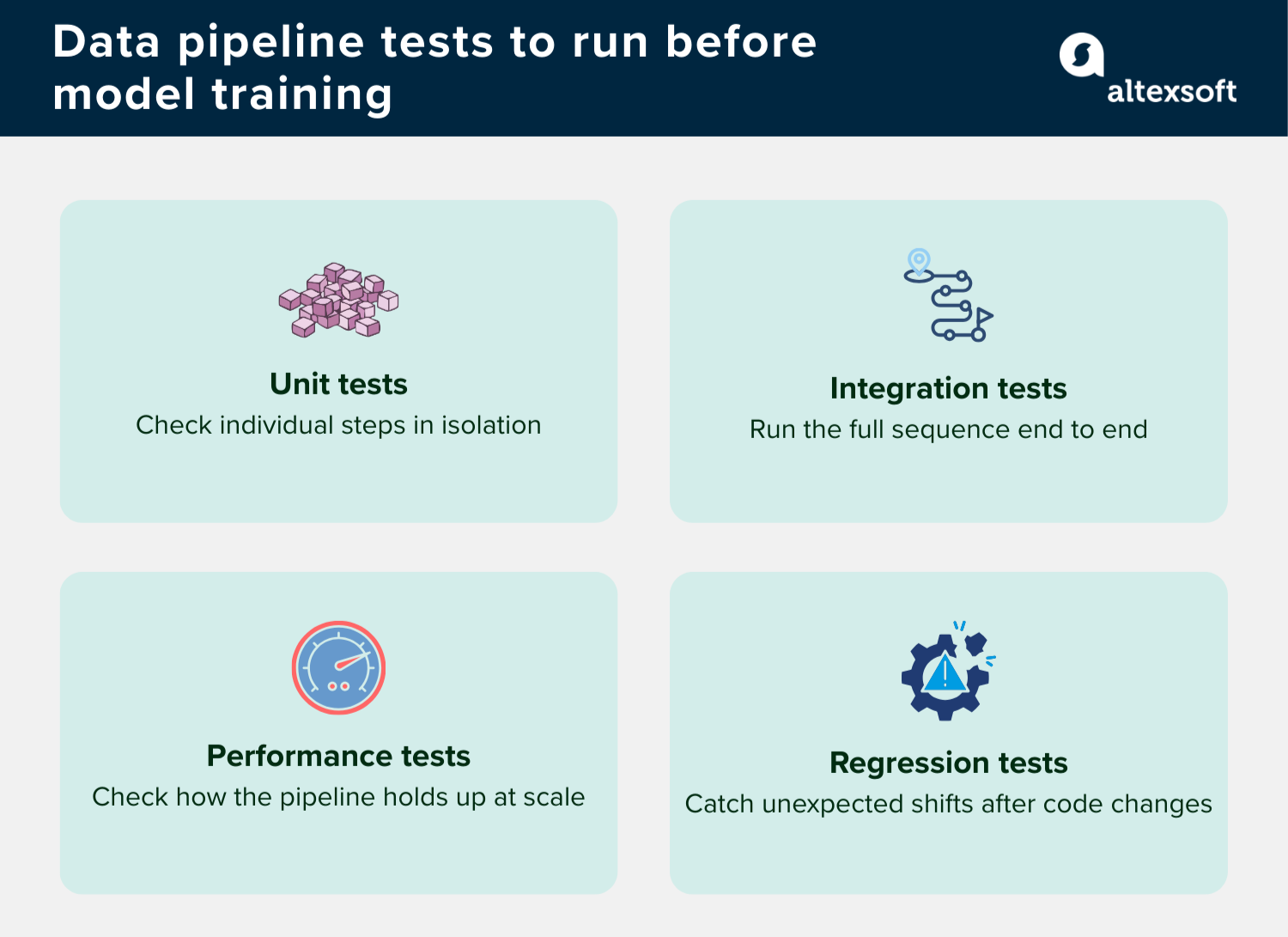

The types of data pipeline tests you can perform include

- unit tests that check individual steps in isolation, for example, verifying that a function designed to clean text data is actually removing what it should;

- integration tests that run the entire sequence from start to finish, catching issues that only appear when all the steps run together;

- performance tests that examine how the pipeline holds up when data volumes spike, for example, whether it slows down significantly or starts consuming more computing resources than expected; and

- regression tests that verify that changes to the pipeline do not cause unexpected shifts in the output data, catching silent errors before they affect model training.
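As an example of the first kind, here is a unit test for a single cleaning step, written with Python's built-in `unittest` module. The `clean_text` function and its rules (strip HTML tags, lowercase, collapse whitespace) are hypothetical; the point is that each step gets small, fast tests of exactly what it is supposed to remove.

```python
import re
import unittest

def clean_text(text):
    """Hypothetical cleaning step: strip HTML tags, lowercase, collapse whitespace."""
    text = re.sub(r"<[^>]+>", " ", text)
    text = text.lower()
    return re.sub(r"\s+", " ", text).strip()

class TestCleanText(unittest.TestCase):
    def test_removes_html_tags(self):
        self.assertEqual(clean_text("<p>Hello</p>"), "hello")

    def test_collapses_whitespace(self):
        self.assertEqual(clean_text("  Too   many\n spaces "), "too many spaces")

    def test_empty_input_stays_empty(self):
        self.assertEqual(clean_text(""), "")
```

Saved in a test module, these run with `python -m unittest`, so they can be part of every pipeline change in CI.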

Beyond these tests, Mariia points out that data quality gates are equally important. “These are automated checks that actually fail the pipeline if quality thresholds are breached. For example, you may set a rule that says ‘Fail if more than 5% of values are null’ or ‘Fail if the data starts drifting significantly from what the model was trained on.’ You can configure these tests with different severity levels—warnings for minor issues and blocking errors for serious ones.”
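A quality gate like the one Mariia describes can be sketched as a function that raises an exception (stopping the pipeline) on a blocking breach and returns warnings for minor ones. The 5% null limit comes from her example; the 1% warning level, `QualityGateError`, and the column data are assumptions for illustration.

```python
class QualityGateError(Exception):
    """Raised to stop the pipeline when a blocking threshold is breached."""

def null_rate(values):
    return sum(v is None for v in values) / len(values)

def quality_gate(columns, warn_at=0.01, fail_at=0.05):
    """Warn when a column's null rate exceeds warn_at; block when it exceeds fail_at."""
    warnings = []
    for name, values in columns.items():
        rate = null_rate(values)
        if rate > fail_at:
            raise QualityGateError(f"{name}: {rate:.1%} nulls exceeds {fail_at:.0%} limit")
        if rate > warn_at:
            warnings.append(f"{name}: {rate:.1%} nulls (warning)")
    return warnings

batch = {
    "amount": [10.0] * 97 + [None] * 3,  # 3% nulls: warning only
    "country": ["US"] * 100,             # no nulls: passes
}
print(quality_gate(batch))

bad_batch = {"amount": [10.0] * 90 + [None] * 10}  # 10% nulls: blocks the pipeline
try:
    quality_gate(bad_batch)
except QualityGateError as err:
    print("Pipeline stopped:", err)
```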

Good monitoring is also crucial. At each stage of the data pipeline, track health metrics like success rate, processing time per step, and data quality scores such as null rates and distribution consistency. Set up alerts for failures or anomalies, and log everything so you can debug issues when they occur.
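One lightweight way to collect these health metrics is to wrap every step in a function that times it, records success or failure, and measures the null rate of its output. The sketch below uses Python's standard `logging` module; `run_step`, `parse_prices`, and the in-memory `metrics` list are hypothetical, and a production setup would send the metrics to a monitoring system instead.

```python
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("pipeline")

def run_step(name, func, rows, metrics):
    """Run one pipeline step and record success, duration, and output null rate."""
    start = time.perf_counter()
    try:
        out = func(rows)
    except Exception:
        metrics.append({"step": name, "ok": False})
        log.exception("step %s failed", name)
        raise
    seconds = time.perf_counter() - start
    cells = sum(len(row) for row in out)
    nulls = sum(value is None for row in out for value in row.values())
    null_rate = nulls / cells if cells else 0.0
    metrics.append({"step": name, "ok": True, "seconds": seconds, "null_rate": null_rate})
    log.info("step %s ok in %.4fs, null rate %.1f%%", name, seconds, 100 * null_rate)
    return out

def parse_prices(rows):
    # Hypothetical step: convert price strings to floats, keeping missing values as None.
    return [{"price": float(r["price"]) if r["price"] is not None else None} for r in rows]

metrics = []
clean = run_step("parse_prices", parse_prices, [{"price": "10.5"}, {"price": None}], metrics)
success_rate = sum(m["ok"] for m in metrics) / len(metrics)
print(clean, success_rate)
```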

How to test and evaluate models during training

Once your data is validated and your pipeline is tested, the focus shifts to the model itself. Training a model is an iterative process that requires ongoing evaluation to ensure it's actually learning useful patterns rather than just memorizing the data it's seen.

Let’s explore the activities involved at this stage.

Watch out for data leakage

Data leakage happens when information that should not be available to the model during training accidentally enters the dataset. As a result, the model learns signals that make it appear highly accurate during evaluation, even though those signals would not exist in real-world use.

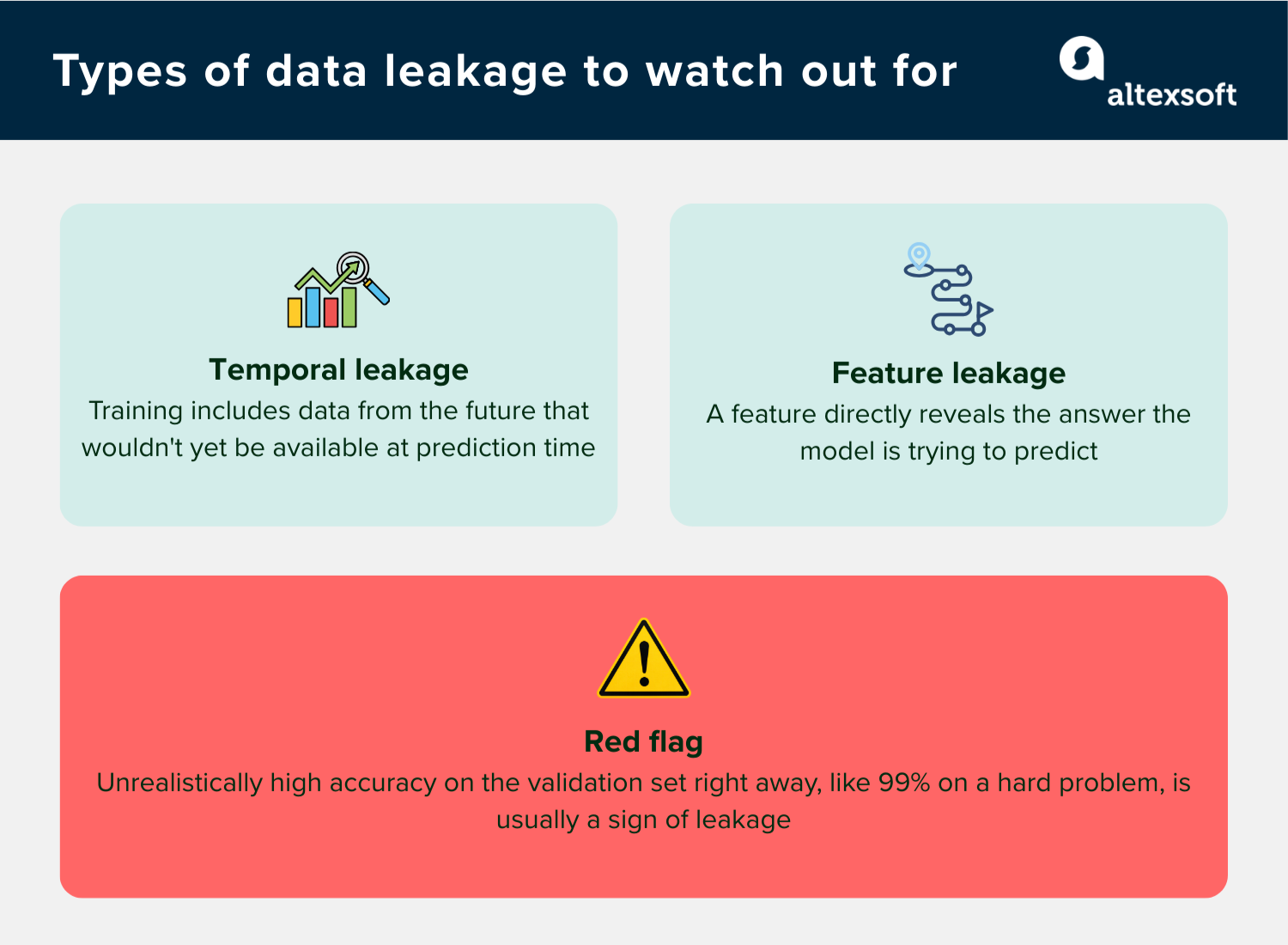

The types of data leakage to watch out for include

- temporal leakage, when the training dataset includes information from after the prediction point. For example, if you're forecasting customer churn in January and the dataset contains user activity from February or later, the model is learning from patterns that wouldn’t be available at prediction time; and

- feature leakage, when a feature directly or indirectly reveals the answer the model is trying to predict. If you want a model to assess whether a student will pass a course and include their final exam score as a feature, the model can effectively “cheat,” since that score already determines the outcome.
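For temporal leakage, a simple safeguard is to build every feature with an explicit cutoff date. The sketch below follows the churn example: with a cutoff of February 1, it counts each user's activity strictly before that date, so February events cannot leak into the features. The event log and the `build_features` function are hypothetical.

```python
from datetime import date

# Hypothetical activity log: (user_id, event_date, event_type)
events = [
    ("u1", date(2024, 1, 3), "login"),
    ("u1", date(2024, 1, 20), "purchase"),
    ("u1", date(2024, 2, 10), "cancel"),  # after the prediction point
    ("u2", date(2024, 1, 15), "login"),
]

def build_features(events, prediction_date):
    """Count each user's events strictly before prediction_date.

    Anything on or after prediction_date would not exist when the prediction
    is made, so including it would leak future information into training.
    """
    features = {}
    for user, day, _ in events:
        if day < prediction_date:
            features[user] = features.get(user, 0) + 1
    return features

print(build_features(events, date(2024, 2, 1)))  # {'u1': 2, 'u2': 1}
```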

One red flag to watch out for is a model that achieves unrealistically high accuracy on the validation set from the start. As Mariia puts it, “If it’s like 99% accuracy on a hard problem, you must investigate because that's usually a sign of leakage.”

Running correlation analysis, which measures how closely each input feature relates to the outcome you want to predict, can also help detect leakage. If a feature shows an almost perfect correlation with the outcome, it usually means the model is getting information it shouldn’t have. For example, in a loan decision model, a feature like “approved loan amount” might correlate almost perfectly with the final decision because that value is determined only after the loan has already been granted.
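A correlation screen like this takes only a few lines. The sketch below computes the Pearson correlation of each feature with the outcome and flags anything above a threshold; the loan data, feature names, and the 0.95 cutoff are made up for illustration, and a flagged feature is a prompt to investigate, not proof of leakage.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        return 0.0  # a constant column carries no correlation signal
    return cov / (sx * sy)

def flag_suspicious_features(features, target, threshold=0.95):
    """Return features whose correlation with the target is close to +1 or -1."""
    flagged = {}
    for name, values in features.items():
        r = pearson(values, target)
        if abs(r) >= threshold:
            flagged[name] = round(r, 3)
    return flagged

# Hypothetical loan data: 1 = approved, 0 = rejected.
approved = [1, 0, 1, 1, 0, 0, 1, 0]
features = {
    "income_k": [52, 48, 75, 40, 38, 61, 58, 45],
    "approved_amount_k": [20, 0, 22, 21, 0, 0, 20, 0],  # only known after approval
}
print(flag_suspicious_features(features, approved))
```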

Choose metrics that reflect actual business value

Metrics are crucial when testing and evaluating models during training because they tell you how well your model is actually learning patterns that matter. Choosing the right metrics requires input from business stakeholders, not just data scientists. “The best technical metrics don't matter if they don't align with actual business value,” says Mariia.

Take fraud detection, for example: While optimizing for overall accuracy sounds reasonable, if 99% of transactions are legitimate, a model that flags nothing as fraud still achieves 99% accuracy. What actually matters here is

- precision, which tells you how often the model is right when it does flag something as fraud, and

- recall, which tells you how much of the actual fraud the model is catching.
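The fraud example is easy to reproduce in plain Python. The counts below (1,000 transactions, 10 of them fraudulent, and a model that flags 12) are invented for illustration.

```python
def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def precision_recall(y_true, y_pred):
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    # Precision is undefined when nothing is flagged; report 0.0 by convention.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# 1,000 hypothetical transactions, 10 of them fraudulent (label 1).
y_true = [1] * 10 + [0] * 990

flag_nothing = [0] * 1000
print(accuracy(y_true, flag_nothing))          # 0.99
print(precision_recall(y_true, flag_nothing))  # (0.0, 0.0)

# A model that flags 12 transactions, 8 of which are real fraud.
y_pred = [1] * 8 + [0] * 2 + [1] * 4 + [0] * 986
print(precision_recall(y_true, y_pred))        # (0.6666666666666666, 0.8)
```

The "flag nothing" model scores 99% accuracy while catching no fraud at all, which only precision and recall reveal.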

Mariia also cautions against optimizing for a single metric. “There’s this principle called Goodhart’s Law: When a measure becomes a target, it ceases to be a good measure. If you optimize only for one metric, you’ll often get unexpected bad behavior in others.”

It’s better to use multiple complementary metrics to get a full picture. Precision and recall are important, but you can also consider

- ROC-AUC (Receiver Operating Characteristic Area Under the Curve), which shows how well the model separates positive cases from negative ones across all classification thresholds, that is, across different levels of strictness in its predictions;

- PR-AUC (Precision-Recall AUC), which summarizes how well the model balances catching true positives against raising false alarms. It is particularly useful when the dataset is highly imbalanced and the positive outcome is rare, such as in fraud detection, where fraudulent transactions are far less common than legitimate ones; and

- MAE (Mean Absolute Error) and RMSE (Root Mean Squared Error), which are used when the model predicts a numeric value rather than a category, such as house prices or sales figures. Both measure how far off the model’s predictions are on average, but RMSE gives more weight to larger errors.
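Computing a few of these metrics by hand makes their behavior concrete. In the sketch below, two sets of hypothetical house-price predictions have the same MAE, but RMSE doubles for the one with a single large miss. ROC-AUC is computed through its pairwise interpretation (the probability that a randomly chosen positive is scored higher than a randomly chosen negative), which equals the area under the ROC curve but costs quadratic time, so it only suits small examples; libraries such as scikit-learn offer efficient implementations.

```python
from math import sqrt

def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    return sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def roc_auc(y_true, scores):
    """Share of (positive, negative) pairs ranked correctly; ties count as half."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical house prices in $1,000s.
prices = [300, 320, 310, 305]
small_misses = [310, 330, 300, 295]  # off by 10 every time
one_big_miss = [300, 320, 310, 345]  # off by 40 once
print(mae(prices, small_misses), rmse(prices, small_misses))  # 10.0 10.0
print(mae(prices, one_big_miss), rmse(prices, one_big_miss))  # 10.0 20.0

print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))  # 0.75
```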

Besides selecting the right metrics, it’s important to check whether improvements are actually meaningful. “A jump from 84% to 85% accuracy might look good, but is it really valuable for the business or just within normal variation? Sometimes a 1% improvement can be huge, while a 5% improvement might not matter much—it depends on the context,” says Mariia.
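One common way to check whether a gain is more than normal variation is a paired bootstrap over the test set: resample the test examples many times and look at the spread of the accuracy difference. The sketch below assumes hypothetical per-example results (1 = correct) for two models at 84% and 85% accuracy on 1,000 examples; if the 95% interval includes zero, the one-point gain is not distinguishable from noise on this test set.

```python
import random

def paired_bootstrap(correct_a, correct_b, n_resamples=1000, seed=0):
    """95% bootstrap interval for accuracy(B) - accuracy(A) on the same test set.

    Resampling example indices (not each model separately) keeps the pairing,
    so both models are always compared on the same resampled examples.
    """
    rng = random.Random(seed)
    n = len(correct_a)
    diffs = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]
        diffs.append(sum(correct_b[i] - correct_a[i] for i in idx) / n)
    diffs.sort()
    low = diffs[n_resamples * 25 // 1000]
    high = diffs[n_resamples * 975 // 1000 - 1]
    return low, high

# Model A is right on 840 of 1,000 examples; model B on 850
# (it fixes 50 of A's errors but introduces 40 new ones).
correct_a = [1] * 840 + [0] * 160
correct_b = [1] * 800 + [0] * 40 + [1] * 50 + [0] * 110

low, high = paired_bootstrap(correct_a, correct_b)
print(f"accuracy gain: {(sum(correct_b) - sum(correct_a)) / 1000:+.3f}")
print(f"95% interval: [{low:+.3f}, {high:+.3f}]")
```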

Test whether the model is actually generalizing

A model that performs well on training data but poorly on new data has likely overfit the training data, meaning it has memorized the examples it saw instead of learning patterns that generalize to unseen data. Here are some ways to check for model generalization.

- Cross-validation, where you split your data into multiple pieces, train the model on some and test it on others, then average the results. This gives you a more reliable picture of how the model will perform on data it has never seen.

- Learning curves, where you plot model performance against the size of the training data. If both training and validation performance are poor, the model is underfitting: it is too simple, or its features are not informative enough, to capture the underlying patterns. If training performance is strong but validation performance lags behind, the model is overfitting.

- Ablation studies, where you remove features or model components one at a time to measure their impact. If removing something does not hurt performance, it is not contributing anything useful.

- Error analysis, where you look closely at the examples the model gets wrong and ask whether there is a pattern. As Mariia puts it, “Maybe it fails on a specific customer segment, or when certain features have extreme values, or when data is missing.” Those patterns point directly to what needs fixing.

- Subgroup performance checks, where you break down metrics by important segments such as age groups, regions, or product categories. A model can look strong overall but perform poorly for a specific group because of bias.

- Hold-out validation, where you keep a completely separate test set that is used only for final evaluation. If performance on this set differs significantly from validation results, it may indicate overfitting, data leakage, or issues in the evaluation setup.
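Two of these checks, cross-validation and subgroup performance, can be sketched in plain Python. The helpers below (`k_fold_indices`, `cross_validate`, `accuracy_by_group`) and the majority-label "model" are hypothetical stand-ins; in practice you would shuffle the data before splitting (or use a library's splitter), and the fold indices here are contiguous only to keep the example readable.

```python
from collections import defaultdict

def k_fold_indices(n, k):
    """Yield (train_idx, test_idx) pairs so that every example is tested exactly once."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, test
        start += size

def cross_validate(xs, ys, fit, score, k=5):
    """Train on k-1 folds, score on the held-out fold, and average the k scores."""
    scores = []
    for train, test in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        scores.append(score([ys[i] for i in test], [model(xs[i]) for i in test]))
    return sum(scores) / len(scores), scores

def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def accuracy_by_group(groups, y_true, y_pred):
    """Break accuracy down by segment so a weak subgroup can't hide in the average."""
    hits, totals = defaultdict(int), defaultdict(int)
    for group, t, p in zip(groups, y_true, y_pred):
        totals[group] += 1
        hits[group] += t == p
    return {group: hits[group] / totals[group] for group in totals}

def fit_majority(xs, ys):
    # Hypothetical baseline "model": always predict the most common training label.
    label = max(set(ys), key=ys.count)
    return lambda x: label

xs, ys = list(range(10)), [0, 0, 1, 0, 1, 0, 0, 1, 0, 0]
mean_acc, per_fold = cross_validate(xs, ys, fit_majority, accuracy)
print(mean_acc, per_fold)

groups = ["18-30"] * 4 + ["60+"] * 4
by_group = accuracy_by_group(groups, [1, 0, 1, 0, 1, 0, 1, 0], [1, 0, 1, 0, 0, 0, 0, 1])
print(by_group)  # overall accuracy is 62.5%, but the 60+ group sits at 25%
```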

Beyond running these checks, you also need to know how to interpret the results. Large or erratic swings in validation performance can point to data quality issues, while steady improvement suggests that the model is learning meaningful patterns. It is also important to compare your results against a simple baseline before deciding that a complex model is justified. As Mariia explains, “If my complex neural network gets 87 percent accuracy but a simple logistic regression gets 85 percent, is the 2-point gain worth the added complexity, longer training time, and reduced interpretability? Sometimes yes, often no.”

Monitoring AI models in production

Your model has passed all in-training checks, and the evaluation looks solid, so we can call it a day, right? Not quite. A new phase of evaluation starts once you deploy a model.

The conditions a model was trained on rarely stay the same forever. User behavior shifts, data sources change, and the world the model learned from gradually stops looking like the world it is operating in. Without production monitoring, these changes go unnoticed until the model starts failing.

Types of failures to watch for in production

Just as there are specific failure patterns to watch for during training, production has its own set of issues that can undermine a model's performance. Some production failures are obvious, while others are silent and degrade the model gradually.

Here are some post-deployment errors that can compromise model performance:

- data drift, which happens when the inputs the model receives start looking different from what it was trained on;

- prediction drift, where the distribution of the model's outputs starts shifting in unexpected ways;

- concept drift, which happens when the real-world patterns the model learned during training no longer hold true;

- label drift, which occurs when the definition of what counts as a correct answer changes, making it hard to tell whether a shift in performance reflects a model problem or a change in how outcomes are being measured; and

- data pipeline failures, where something in the data supply chain breaks and the model stops receiving the data it needs to make predictions.

Not all of these will trigger an obvious alert. Some will only show up as a slow, gradual decline that's easy to miss if no one is actively watching, hence our next point on monitoring.
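Data drift in a single feature is often quantified with the Population Stability Index (PSI), which compares how training and production values are spread across bins. PSI is one widely used option rather than something this article prescribes, and the common reading (below 0.1 stable, 0.1 to 0.25 moderate shift, above 0.25 significant shift) is an industry rule of thumb, not a standard. The age data and bin edges below are hypothetical.

```python
from bisect import bisect_right
from math import log

def psi(expected, actual, edges):
    """Population Stability Index between training (expected) and production (actual) values.

    edges are sorted bin boundaries; values below the first edge or above the
    last one fall into the two outer bins.
    """
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect_right(edges, v)] += 1
        # Floor empty bins at a tiny share so log() is always defined.
        return [max(c / len(values), 1e-4) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * log(ai / ei) for ei, ai in zip(e, a))

edges = [30, 40, 50, 60]
train_ages = [25, 32, 41, 38, 29, 45, 52, 36, 30, 48]
prod_ages = [55, 61, 58, 49, 66, 70, 52, 63, 59, 68]

print(round(psi(train_ages, train_ages, edges), 3))  # 0.0: identical distributions
print(psi(train_ages, prod_ages, edges) > 0.25)      # True: production users skew older
```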

Set up a continuous monitoring infrastructure

How do you monitor for these and other types of failures? By setting up a continuous monitoring infrastructure that gives your team full visibility into how the model is behaving in the real world. As Mariia explains, “I deploy dashboards that track the model performance, data quality issues, system health, key metrics, and everything else in real-time. I set up automated alerting so we're notified immediately if something goes wrong, not three weeks later.”

One challenge that’s unique to production monitoring is that you typically can’t measure a model’s performance directly, because you don’t always know right away whether a prediction was correct. During training, every example has a label—the correct answer the model is supposed to learn. But in production, the actual results often arrive much later or not at all. For example, a fraud detection model’s prediction may not be confirmed until weeks later when chargebacks are processed. Also, with a recommendation system, you might never know if the item you didn't recommend would have been perfect.

One way to navigate this issue is by comparing online validation metrics, which are measured against live data in production, with offline metrics, which are collected during training. As Mariia advises, “If my offline performance score—the combined measure of precision and recall—was 0.85 during training but drops to 0.70 in production, something's wrong. This could be training-serving skew, data drift, or problems with my evaluation setup.”
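Because production labels arrive late, an online score can only be computed over the predictions whose outcomes are already known. The sketch below computes F1 (the combined measure of precision and recall Mariia mentions) over labeled predictions only and compares it with the offline score. The 10% relative-drop threshold, the helper names, and the prediction log are assumptions for illustration.

```python
def f1_from_labeled(predictions):
    """F1 over production predictions whose ground-truth label has already arrived.

    predictions: (predicted, actual) pairs; actual is None while the label is pending.
    """
    labeled = [(p, a) for p, a in predictions if a is not None]
    tp = sum(p == 1 and a == 1 for p, a in labeled)
    fp = sum(p == 1 and a == 0 for p, a in labeled)
    fn = sum(p == 0 and a == 1 for p, a in labeled)
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0

def degraded(offline_score, online_score, max_relative_drop=0.10):
    """True if the online score fell more than max_relative_drop below the offline one."""
    return (offline_score - online_score) / offline_score > max_relative_drop

print(degraded(0.85, 0.70))  # True: a drop of about 18%

# Hypothetical fraud predictions; the last two are still awaiting chargeback data.
production = [(1, 1), (1, 0), (0, 1), (1, 1), (0, 0), (1, None), (0, None)]
online_f1 = f1_from_labeled(production)
print(round(online_f1, 3), degraded(0.85, online_f1))  # 0.667 True
```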

Model versioning is also important because it provides a clear record of which version of the model was serving predictions at any given time. This makes it possible to trace performance changes back to specific deployments, compare versions through A/B testing, and roll back quickly if something goes wrong.

Another aspect people often forget to monitor is cost, but it can be just as critical as checking for failures or performance issues. “I've seen models that worked great technically but cost 10× more than expected, making them unsuitable for production,” Mariia notes. Setting up alerts for budget overruns helps track factors such as compute usage, storage costs for logs and features, and processing costs in data pipelines.

Build an alerting system

The moment something goes wrong in production, you want to know about it, and that’s where a well-built alerting system comes in. However, that doesn’t mean catching every single issue, even negligible ones, because according to Mariia, “Too many false alarms—and people start ignoring them, but too few—and you miss real problems. The goal is to find the right balance between sensitivity and noise.”

The most practical starting point is defining acceptable ranges for your key metrics. For example, you could decide that accuracy shouldn’t drop below 80% or precision shouldn’t fall under a certain threshold. From there, you can set up tiered alerts based on how serious the deviation is. As Mariia explains, “Use warnings for minor deviations that should be investigated but don’t require immediate action and critical alerts for severe issues that demand a rapid response. Warnings can be sent to a Slack channel that the team monitors during business hours, while critical alerts page the on-call engineer right away.”
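The tiered approach can be expressed as a small mapping from a metric value to an alert level. In the sketch below, the 80% critical floor follows the accuracy example above, while the 85% warning level, the `alert_level` function, and the routing targets are hypothetical.

```python
def alert_level(value, warn_below, critical_below):
    """Map a metric value to an alert tier; None means it is within the acceptable range."""
    if value < critical_below:
        return "critical"
    if value < warn_below:
        return "warning"
    return None

# Hypothetical routing: warnings go to a team channel, critical alerts page on-call.
ROUTES = {"warning": "slack #ml-model-alerts", "critical": "page on-call engineer"}

for accuracy in (0.91, 0.83, 0.78):
    level = alert_level(accuracy, warn_below=0.85, critical_below=0.80)
    print(accuracy, level, ROUTES.get(level, "no alert"))
```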

Beyond the alert itself, what it contains matters just as much. Each alert should include useful context, such as

- what exactly went wrong,

- which segments or features are affected,

- the likely causes,

- steps to investigate, and

- links to relevant dashboards and runbooks.

If someone receives an alert and doesn't know what to do next, the alert needs improvement. You can take things a step further by setting up automatic responses for business-critical systems. This could mean rolling back to a previous model version or shifting traffic away from a failing deployment. However, be careful: automated responses must be thoroughly tested before you trust them to act on their own.

How often should you retrain your production model?

Models will need to be retrained at some point because the data they were trained on eventually stops reflecting the reality they are operating in. There is no universal answer to how often this should happen, as the right frequency depends on how quickly the environment the model operates in changes.

- Fast-moving domains need frequent retraining, sometimes daily or weekly. Fraud detection, for instance, needs to keep up with constantly evolving tactics used by bad actors.

- Moderate domains do well with weekly to monthly retraining. A customer churn prediction model, for example, can tolerate a slightly longer cycle since customer behavior shifts gradually rather than overnight.

- Slow-moving domains can be retrained monthly to quarterly. A real estate valuation model is a good example, because, on average, property market trends tend to change slowly and predictably.

An important distinction Mariia draws is between evaluation and retraining. “You should evaluate more frequently than you retrain. Continuous monitoring means you're performing real-time, daily checks on metrics and drift. However, retraining is more expensive and disruptive, so you do it less frequently.”

Also, it's better to follow a triggered retraining pattern than a fixed schedule. Set up automatic retraining when drift crosses a threshold, performance drops below an acceptable level, or you’ve accumulated enough new labeled data. This way, the model is updated when it actually needs to be, not just because a calendar date says so.
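A triggered retraining policy boils down to a few explicit rules evaluated on every monitoring run. The sketch below checks the three triggers named above and returns the reasons a retrain should start; the `RetrainPolicy` class, its threshold values, and the drift measure (for example, a PSI score) are hypothetical and would need tuning to your domain and costs.

```python
from dataclasses import dataclass

@dataclass
class RetrainPolicy:
    # Hypothetical thresholds; tune them to your own domain and costs.
    max_drift: float = 0.25       # e.g. a PSI value on key input features
    min_f1: float = 0.75          # lowest acceptable production F1
    min_new_labels: int = 10_000  # enough fresh labeled data to justify a retrain

def retraining_reasons(policy, drift, f1, new_labels):
    """Return why a retrain should be triggered; an empty list means keep the current model."""
    reasons = []
    if drift > policy.max_drift:
        reasons.append(f"drift {drift:.2f} above {policy.max_drift:.2f}")
    if f1 < policy.min_f1:
        reasons.append(f"F1 {f1:.2f} below {policy.min_f1:.2f}")
    if new_labels >= policy.min_new_labels:
        reasons.append(f"{new_labels:,} new labeled examples available")
    return reasons

policy = RetrainPolicy()
print(retraining_reasons(policy, drift=0.08, f1=0.81, new_labels=2_500))  # []
print(retraining_reasons(policy, drift=0.31, f1=0.72, new_labels=12_000))
```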

Whatever approach you land on, it’s worth weighing the cost of retraining against the risk of leaving the model unchanged. As Mariia puts it, “Retraining requires compute resources and engineering time. Someone needs to validate the new model, compare it to the old one, test it, and deploy it.” However, leaving a degrading model in production carries its own consequences, including missed opportunities and a poorer user experience. Sometimes a monthly retrain is overkill and wastes resources; other times, weekly retraining isn’t frequent enough, and the model may already be underperforming by the time you get to it.

Common AI testing and evaluation mistakes teams make (and how to avoid them)

Even teams with strong technical skills make avoidable mistakes when testing and evaluating AI models. Here are some common ones.

Using the wrong metrics. Choosing metrics because they are standard or easy to compute, rather than because they reflect the actual problem, will lead you in the wrong direction.

Stopping evaluation at deployment. Many teams do thorough validation during development, deploy the model, and then stop, only to discover that the model has been failing silently.

Skipping baselines. Jumping straight to complex models without first testing simple ones means you have no way of knowing whether the added complexity is actually worth it. A simple model that gets 85% accuracy might perform almost as well as a complex one that gets 87%—and will be far easier to maintain and explain.

Training-serving skew. Some teams implement feature engineering one way in their training environment and another in production. The model ends up being served data that looks different from what it was trained on, causing silent performance degradation that is difficult to trace.
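Two habits help here: keep feature logic in one function that both the training job and the serving code call, and periodically recompute features offline for logged production requests to confirm they match what was actually served. In the sketch below, `make_features`, `find_skew`, and the feature names are hypothetical, and the bug (serving code using `log` where training used `log1p`) is invented for illustration.

```python
import math

def make_features(raw):
    """Single feature-engineering function shared by the training job and the serving code."""
    return {
        "amount_log": math.log1p(raw["amount"]),
        "is_foreign": int(raw["country"] != raw["card_country"]),
    }

def find_skew(raw_requests, served_features, tol=1e-6):
    """Recompute features offline for logged requests and compare them with what serving used."""
    mismatches = []
    for raw, served in zip(raw_requests, served_features):
        for name, expected in make_features(raw).items():
            if abs(expected - served[name]) > tol:
                mismatches.append((name, expected, served[name]))
    return mismatches

# Hypothetical log entry where the serving path used log() instead of log1p().
requests = [{"amount": 99.0, "country": "US", "card_country": "US"}]
served = [{"amount_log": math.log(99.0), "is_foreign": 0}]

for name, expected, actual in find_skew(requests, served):
    print(f"skew in {name}: training logic gives {expected:.4f}, serving gave {actual:.4f}")
```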

Inadequate edge case testing. Many teams tend to test on the most common scenarios and call it done. However, production environments are full of rare situations—extreme values, missing data, unusual input combinations—that never appear in the main test set, which can cause unexpected failures.

Skipping shadow mode. Running a new model in parallel with the existing one before switching traffic helps catch integration issues and edge cases that only appear with real users. Skipping this step to save time often leads to bigger problems later.

Making model evaluation understandable to stakeholders

Building and maintaining AI models requires significant investment, and for that investment to be approved and sustained, product managers, CTOs, and nontechnical stakeholders must understand what they are paying for. However, most of them aren’t data scientists, so translating model performance into language that connects to business outcomes is important.

Here are some things to keep in mind to properly communicate the value of testing and evaluating AI models to these groups.

Models degrade over time, and that is normal. A model that performed well six months ago may produce poor results today, even though nothing in the code has changed. This is why it's important to budget for the costs of continuous monitoring and retraining. As Mariia puts it, “Underinvesting in ML infrastructure is like buying a car but skipping maintenance. It might work for a while, but eventually something breaks, and fixing it costs way more than maintaining it would have.”

Performance is not a single number. There is no single score that tells you whether a model is good because different metrics capture different things, and they often pull in opposite directions. Understanding these trade-offs helps stakeholders set realistic expectations.

Translate metrics into business language. Raw metrics mean very little to most stakeholders, especially nontechnical ones. What they need is these metrics translated into business outcomes. Mariia points out, “Instead of saying F1 score improved from 0.82 to 0.87, I say that we reduced false fraud alerts by 15%, which means 1,000 fewer customers per day got their cards incorrectly blocked.”

Show trends, not just snapshots. A single performance number at a point in time does not tell the full story. Showing how performance has changed over weeks or months gives stakeholders a much clearer picture of whether the model is stable, improving, or quietly declining.

Data quality is a business investment, not a technical detail. More data does not automatically mean a better model because quality matters more than quantity. As Mariia says, “Biased data leads to biased models and fixing data problems early is way cheaper than patching model outputs later.” Investing in data quality pays off across the entire system.

Trade-offs are unavoidable in ML. Every AI model involves trade-offs, and stakeholders have to embrace this reality. For example, you typically can’t have maximum accuracy and perfect interpretability in the same model. “Understanding these trade-offs helps stakeholders make informed decisions about what they actually need versus nice-to-haves,” says Mariia.

Provide recommendations, not just data. For example, don't just report that drift was detected. Explain what it means, what the options are, and any recommendations. Stakeholders need enough context to make decisions, not just a report that leaves them with more questions than answers.

With a software engineering background, Nefe demystifies technology-specific topics—such as web development, cloud computing, and data science—for readers of all levels.
