Insurance underwriting (the process of evaluating risk and determining policy pricing) has traditionally been driven by rules and expert judgment, but is increasingly augmented by AI.

This article explores how AI is transforming core underwriting tasks, including risk assessment, pricing, and decision support. It also examines key challenges insurers face when adopting these technologies.

What is AI in underwriting?

Artificial intelligence in underwriting refers to the use of advanced algorithms and data-driven models to evaluate risk, assess applications, and support or automate decision-making in insurance. AI enables underwriters to process large volumes of data quickly and generate more precise, consistent risk assessments.

Several AI technologies work together to power underwriting platforms and tools.

Predictive analytics models. Machine learning models analyze historical data (claims history, customer profiles, credit scores, etc.) and real-time data (GPS tracking and telematics in auto insurance, wearable data in health insurance, or weather data in property insurance) to identify patterns and predict future outcomes. They can estimate claim probability, detect anomalies, and support more accurate pricing and underwriting decisions.

Natural language processing (NLP). NLP enables the transformation of raw, unstructured text into structured representations suitable for actuarial modeling. Underwriters traditionally spend a disproportionate amount of time reading and organizing documents—broker emails, medical records, financial statements, and engineering reports. Natural language processing can identify specific risk factors almost instantly in internal policy handbooks or vast medical databases.

Beyond mere data extraction, NLP enables the analysis of customer sentiment and behavioral cues embedded in communications. In health and life insurance, sentiment analysis can reveal psychological indicators or lifestyle patterns from physician notes that correlate with mortality or morbidity risks.

Computer vision (CV). In the property and casualty sector, computer vision enables insurers to assess physical risks without on-site inspections. By using high-resolution aerial imagery from satellites, drones, and fixed-wing aircraft, computer vision models can identify property characteristics and damage patterns at scales previously impossible. Vision models are trained to identify specific risk-related features, such as roof damage or proximity and density of trees.

LLMs and foundational models. Modern multimodal LLMs can perform many of the tasks of dedicated CV and NLP models, often with better results. They process text, audio, video, and images by converting these different types of unstructured data into a shared mathematical representation called embedding vectors. They can also synthesize information from multiple documents, explain actuarial rules, and generate structured justifications for decisions.

Common applications include

- automated summarization of medical, engineering, and financial records,

- generation of risk memos and regulatory notices, and

- internal knowledge assistants that retrieve and reference policy guidelines.

General-purpose models such as GPT and Gemini are increasingly giving way to specialized insurance LLMs (domain-specific language models) trained on industry-specific glossaries and regulations. Specialized AI agents can outperform flagship general-purpose models in underwriting data-extraction tasks: Roots AI claims its systems achieve 93 percent accuracy, compared to 80 percent for GPT-5.0 and 84 percent for Gemini 3.0 Pro. Using such “reasoning engines” reduces the average time to decision for standard policies to 12 minutes.

The newest trend in underwriting is deploying enterprise-grade foundational models (FMs) adapted to regulatory and operational requirements. An FM may include

- a multimodal core model,

- domain-specific fine-tuning (insurance regulations, actuarial logic),

- reasoning frameworks, and

- integration with internal data sources and decision engines.

These systems improve performance on complex legal and underwriting tasks. While a multimodal LLM alone can analyze a property image and summarize a report, a foundational model system can

- link visual evidence to underwriting rules (e.g., detect roof condition → map to risk category → adjust premium logic);

- apply consistent reasoning across modalities and documents, not just describe them; and

- operate within regulatory constraints.

To learn more about AI in insurance (not only underwriting, but also sales, customer service, and distribution), read our dedicated article.

Typical use cases: where AI helps

Here are the main use cases of AI in underwriting.

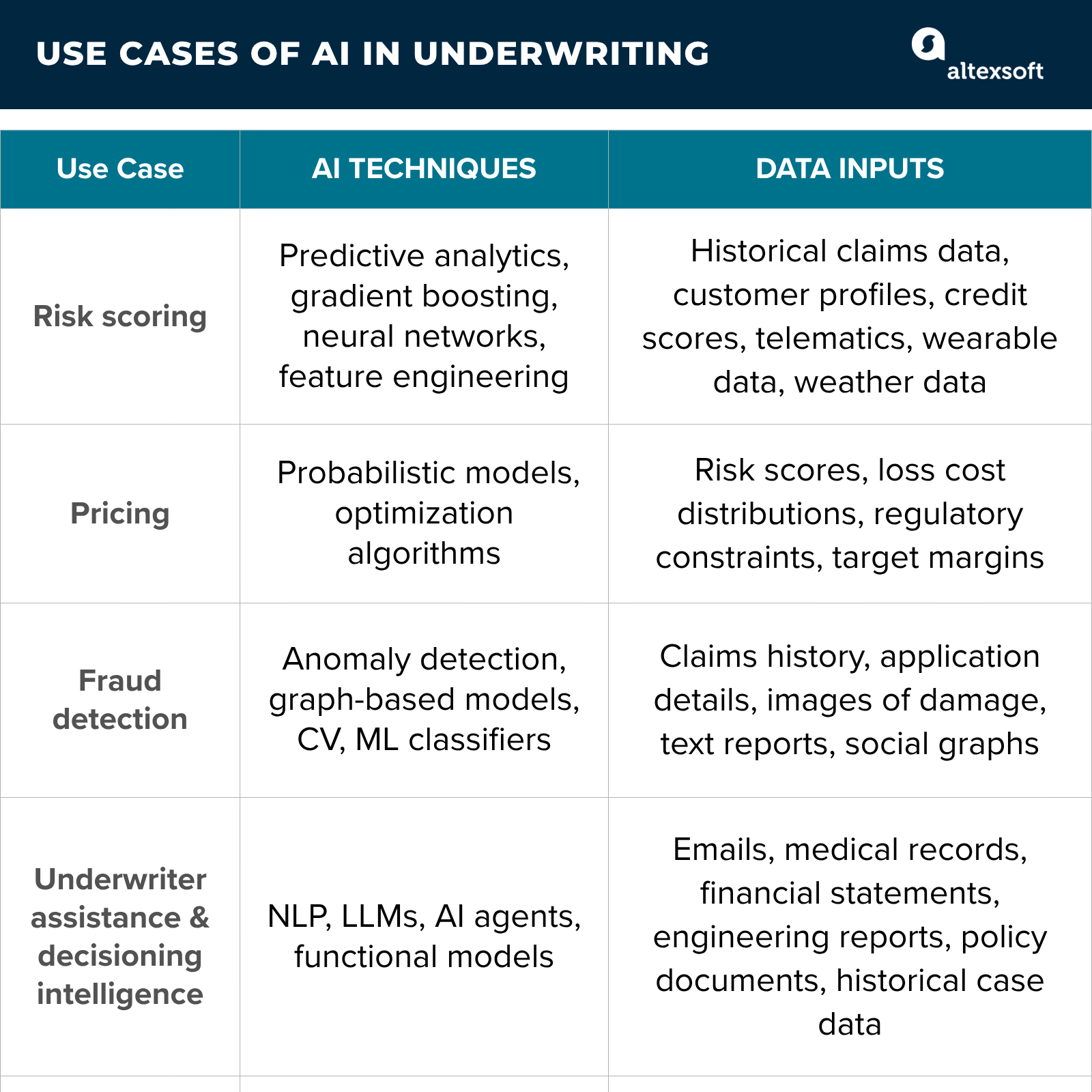

Key use cases and data inputs for AI in underwriting

Risk scoring

AI models generate risk scores for individual policies by integrating multiple data sources into a unified feature space. These scores reflect the estimated probability and severity of loss. Feature engineering and model selection (e.g., gradient boosting, neural networks) are tailored to specific lines of business, enabling segmentation beyond standard rating categories.
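To make the idea of a score that reflects both probability and severity concrete, here is a minimal Python sketch. It assumes a logistic claim-frequency model with hand-picked coefficients and a fixed average claim cost. All feature names, coefficients, and the severity figure are hypothetical; a production model (e.g., gradient boosting) would learn them from data and would usually model severity separately.

```python
import math

# Hypothetical features pulled from three separate systems (names are illustrative).
policy_admin = {"vehicle_age": 8, "prior_claims": 1}
credit_bureau = {"credit_tier": 2}  # assumed scale: 1 = best, 5 = worst
telematics = {"hard_brakes_per_100km": 3.5}

# Hypothetical coefficients of a logistic claim-frequency model; a real model learns these.
FREQ_INTERCEPT = -3.0
FREQ_WEIGHTS = {
    "vehicle_age": 0.04,
    "prior_claims": 0.6,
    "credit_tier": 0.15,
    "hard_brakes_per_100km": 0.2,
}
EXPECTED_SEVERITY = 4_200.0  # hypothetical average cost per claim for this segment


def unify_features(*sources: dict) -> dict:
    """Merge per-source feature dicts into one feature vector (later sources win on clashes)."""
    merged: dict = {}
    for source in sources:
        merged.update(source)
    return merged


def claim_probability(features: dict) -> float:
    """Logistic model: p = 1 / (1 + exp(-(b0 + sum(w_i * x_i)))). Missing features count as 0."""
    z = FREQ_INTERCEPT + sum(w * features.get(name, 0.0) for name, w in FREQ_WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def risk_score(features: dict) -> dict:
    """Expected loss = claim probability x expected severity."""
    p = claim_probability(features)
    return {"claim_probability": p, "expected_loss": p * EXPECTED_SEVERITY}


features = unify_features(policy_admin, credit_bureau, telematics)
score = risk_score(features)
print(f"p(claim) = {score['claim_probability']:.3f}, expected loss = {score['expected_loss']:.0f}")
```

The expected-loss output is the kind of figure that feeds the pricing step described next.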

Recent analysis shows that insurance companies using AI-driven underwriting have reduced loss ratios by an average of 18.5 percent compared to traditional methods. This is largely because AI can spot subtle risk patterns and correlations that human underwriters often miss.

Pricing

Models output loss cost distributions or point estimates that feed into pricing engines, alongside business constraints such as target margins, regulatory requirements, and portfolio balance.

Fraud detection

AI systems flag potentially misrepresented or suspicious applications before policy issuance. This includes detecting inconsistencies across data fields, identifying anomalous attribute combinations, and cross-referencing external data sources.
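The sketch below illustrates the first two checks in plain Python: cross-field consistency rules and a simple z-score test for an anomalous value against a reference portfolio. The fields, the 16-year minimum licensing age, and the 50 percent mileage tolerance are assumptions for illustration. Production systems typically combine many such rules with learned anomaly detectors.

```python
import statistics
from dataclasses import dataclass
from typing import List, Optional

MIN_LICENSING_AGE = 16  # assumption; the real value depends on jurisdiction


@dataclass
class Application:
    applicant_id: str
    age: int
    years_licensed: int
    declared_annual_km: int
    telematics_annual_km: Optional[int] = None  # None when no device data exists


def consistency_flags(app: Application) -> List[str]:
    """Cross-field checks: values that cannot all be true at once."""
    flags = []
    if app.years_licensed > app.age - MIN_LICENSING_AGE:
        flags.append("years licensed is impossible for stated age")
    if app.telematics_annual_km is not None and app.declared_annual_km > 0:
        gap = abs(app.telematics_annual_km - app.declared_annual_km) / app.declared_annual_km
        if gap > 0.5:  # hypothetical tolerance
            flags.append("declared mileage differs from telematics by more than 50%")
    return flags


def outlier_flags(app: Application, reference: List[Application],
                  z_limit: float = 3.0) -> List[str]:
    """Flag a declared value that sits far outside the reference portfolio (z-score test)."""
    values = [a.declared_annual_km for a in reference]
    mean, sd = statistics.mean(values), statistics.pstdev(values)
    if sd == 0:
        return []
    z = (app.declared_annual_km - mean) / sd
    return [f"declared mileage is an outlier (z = {z:.1f})"] if abs(z) > z_limit else []


def screen(app: Application, reference: List[Application]) -> List[str]:
    """Run all checks and return every flag raised."""
    return consistency_flags(app) + outlier_flags(app, reference)


portfolio = [Application(f"P{i}", 40, 20, 12_000 + 100 * i) for i in range(10)]
suspect = Application("X1", age=25, years_licensed=15, declared_annual_km=2_000,
                      telematics_annual_km=15_000)
print(screen(suspect, portfolio))
```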

Computer vision plays a critical role in maintaining the integrity of digital submissions. "Image provenance" techniques use AI to detect subtle inconsistencies in lighting, shadows, or textures that might suggest a photo has been manipulated. Mitigating fraud at the underwriting stage can reduce the eventual loss ratio.

Graph-based models may also be used to identify links between applicants, assets, and previously flagged entities.
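A minimal way to picture this is a graph where applicants connect to the identifiers they declared (phone numbers, addresses, vehicle IDs), so two applicants sharing an identifier are two hops apart. The sketch below uses a breadth-first search in plain Python to measure how many hops separate an applicant from a previously flagged entity. The data and the node naming scheme are hypothetical; real graph analytics would typically run on a dedicated graph database or library and weigh many more link types.

```python
from collections import defaultdict, deque
from typing import Dict, Optional, Set


def build_graph(applications: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Bipartite graph: applicant nodes link to the identifiers they declared."""
    graph: Dict[str, Set[str]] = defaultdict(set)
    for applicant, identifiers in applications.items():
        for ident in identifiers:
            graph[applicant].add(ident)
            graph[ident].add(applicant)
    return graph


def hops_to_flagged(graph: Dict[str, Set[str]], start: str, flagged: Set[str],
                    max_hops: int = 4) -> Optional[int]:
    """Breadth-first search: shortest number of edges from `start` to any flagged node."""
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        node, dist = queue.popleft()
        if node in flagged and node != start:
            return dist
        if dist == max_hops:
            continue  # do not expand beyond the search radius
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, dist + 1))
    return None


# Hypothetical data; identifiers are prefixed so they never collide with applicant IDs.
applications = {
    "A1": {"phone:555-0101", "addr:12 Elm St"},
    "A2": {"phone:555-0101", "vin:VIN123"},
    "A3": {"vin:VIN123"},
    "A4": {"addr:99 Oak Ave"},
}
graph = build_graph(applications)
flagged = {"A3"}  # previously flagged applicant
for applicant in applications:
    print(applicant, hops_to_flagged(graph, applicant, flagged))
```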

Underwriter assistance and augmented decisioning

AI systems support decision-making by aggregating, structuring, and prioritizing relevant information within a submission. AI recommendation engines can propose risk classes, sub-limits, or referral decisions. Examples include AI chatbots to answer underwriters’ queries or systems that surface similar past cases (case-based reasoning). With the help of AI, underwriters can focus on exception handling, complex cases, and judgment-based decisions rather than routine processing.
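Case-based reasoning often comes down to nearest-neighbor search over past submissions. The sketch below ranks historical cases by cosine similarity to a new submission. The feature vector, its [0, 1] scaling, and the cases themselves are hypothetical; in practice the vectors might come from engineered features or from text embeddings of submission documents.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def similar_cases(query: Sequence[float], past_cases: List[Dict], k: int = 2) -> List[Dict]:
    """Return the k past cases whose feature vectors point in the most similar direction."""
    return sorted(past_cases, key=lambda case: cosine(query, case["features"]),
                  reverse=True)[:k]


# Hypothetical features, pre-scaled to [0, 1]:
# [building_age, has_sprinklers, in_flood_zone, total_insured_value]
past_cases = [
    {"id": "C1", "features": [0.7, 0.0, 1.0, 0.6], "decision": "referred"},
    {"id": "C2", "features": [0.1, 1.0, 0.0, 0.2], "decision": "accepted"},
    {"id": "C3", "features": [0.9, 0.0, 1.0, 0.4], "decision": "declined"},
]
for case in similar_cases([0.8, 0.0, 1.0, 0.5], past_cases):
    print(case["id"], case["decision"])
```

Showing the underwriter the past decisions next to the matches, rather than a single recommended answer, keeps the final judgment with the human.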

Beyond direct financial savings, AI enhances the underwriting team's productivity. McKinsey reports suggest that domain-level AI rewiring can lead to a 10–20 percent improvement in sales conversion rates and a 20–40 percent reduction in the cost to onboard new customers.

AI tools for underwriting in insurance

The AI underwriting vendor landscape can be segmented by industry focus.

Insurance-focused Insurtechs

These vendors offer end-to-end solutions or modules (e.g., image analysis, portfolio optimization) tailored to insurer workflows. For example, ZestyAI’s wildfire/satellite models help reinsurers refine property insurance pricing.

Other insurtech startups targeting carriers: Federato (AI-native policy admin/underwriting), Planck (generative AI for commercial risk data), Cytora (risk digitization platform), Shift Technology (agentic AI for insurance, including risk and fraud identification), Tractable (computer vision for auto/property claims).

Decision intelligence platforms

While not underwriting tools themselves, these platforms enhance underwriting by supplying real-time, unified data-driven insights and decision support.

Cloverleaf Analytics provides a “Decision Intelligence” platform that automates data pipelines—from ingestion to cloud storage (e.g., Snowflake)—reducing data processing time from days to minutes and enabling faster underwriting decisions.

Aera Decision Cloud and Snowfire AI unify data across siloed departments into a single source of truth. Using machine learning, they deliver real-time, context-aware recommendations that support pricing and risk evaluation.

Enterprise platforms

Large platforms enable insurers to build in-house: Salesforce’s Financial Services Cloud includes AI components; AWS/Azure/GCP provide ML toolkits (SageMaker, Azure ML, Vertex AI) and prebuilt models that insurers leverage.

Custom development

Custom ML development can be a good solution when off-the-shelf tools don’t fit the insurer’s needs.

In this case, the insurer needs to build or hire a dedicated data science and engineering team capable of developing models using proprietary underwriting and claims data, as well as continuously monitoring, recalibrating, and improving model performance over time.

For example, Swiss Re (a world-leading reinsurance company) built proprietary Claims GenAI. And Daido Life, a major Japanese insurer, developed an AI-driven medical underwriting system that evaluates diverse health data—including medical history, lifestyle factors, and diagnostic records—to generate initial risk scores and propose tailored coverage recommendations.

Developing a custom model creates unique underwriting logic competitors can’t easily replicate.

Implementation roadmap

Implementing AI in underwriting requires a structured approach focused on technical readiness and human-centric design.

Foundation and data readiness

AI is only as good as its inputs, so the first step is to audit and clean siloed data from legacy systems to ensure high quality. Once the data is structured, the development team must set up secure, real-time pipelines from third-party sources—like credit bureaus or IoT devices—to move beyond limited historical datasets. Finally, building a unified customer data repository creates a "single source of truth," ensuring models operate on consistent information across the entire organization.
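The audit and unification steps can be sketched in a few lines of Python. This minimal illustration assumes records arrive as dictionaries keyed by a shared `customer_id`; the field names, the rule that earlier-listed systems take precedence, and the sample data are all hypothetical. The key design choice is that conflicting values are logged for review rather than silently overwritten.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

Record = Dict[str, str]


def audit(records: List[Record], required_fields: Iterable[str]) -> Dict:
    """Basic quality profile: row count, duplicated IDs, and missing values per field."""
    id_counts = Counter(r.get("customer_id") for r in records)
    return {
        "rows": len(records),
        "duplicate_ids": sorted(i for i, n in id_counts.items() if i and n > 1),
        "missing": {f: sum(1 for r in records if not r.get(f)) for f in required_fields},
    }


def unify(*systems: Tuple[str, List[Record]]):
    """Build one record per customer. Systems listed first take precedence,
    and disagreements are logged instead of silently overwritten."""
    golden: Dict[str, Record] = {}
    conflicts: List[Tuple[str, str, str, str, str]] = []
    for system_name, records in systems:
        for record in records:
            cid = record["customer_id"]
            target = golden.setdefault(cid, {})
            for field, value in record.items():
                if not value:
                    continue  # empty values never overwrite anything
                if field not in target:
                    target[field] = value
                elif target[field] != value:
                    conflicts.append((cid, field, target[field], value, system_name))
    return golden, conflicts


policy_system = [
    {"customer_id": "C1", "dob": "1980-04-02", "zip": "10001"},
    {"customer_id": "C2", "dob": "", "zip": "94105"},
    {"customer_id": "C2", "dob": "1975-09-30", "zip": "94105"},
]
claims_system = [{"customer_id": "C1", "dob": "1980-04-02", "zip": "10002"}]

print(audit(policy_system, ["dob", "zip"]))
golden, conflicts = unify(("policy_admin", policy_system), ("claims", claims_system))
print(golden["C2"], conflicts)
```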

Use case prioritization

Automating routine tasks can free up to 70 percent of an underwriter's time, making high-impact areas such as automated submission ingestion or risk triage ideal starting points. By using NLP to extract data from broker PDFs or flagging simple cases for straight-through processing, firms can secure "quick wins." Success should be measured by quantifiable KPIs, such as a specific percentage reduction in manual data entry or a measurable improvement in loss ratios driven by more precise pricing.
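A triage step like this can be expressed as a few ordered rules, and the straight-through processing (STP) rate then becomes a measurable KPI. The sketch below is hypothetical: the thresholds, field names, and queue labels are illustrative, and the risk score and extraction confidence are assumed to come from upstream models.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical routing thresholds; real values come from underwriting guidelines.
MIN_EXTRACTION_CONFIDENCE = 0.90
STP_MAX_RISK = 0.20
REFER_MIN_RISK = 0.60
STP_MAX_SUM_INSURED = 500_000


@dataclass
class Submission:
    submission_id: str
    risk_score: float             # 0..1, from an upstream scoring model (assumed)
    extraction_confidence: float  # 0..1, from the document-extraction step (assumed)
    sum_insured: float


def route(s: Submission) -> str:
    """Send each submission to exactly one queue; rules are checked in order."""
    if s.extraction_confidence < MIN_EXTRACTION_CONFIDENCE:
        return "manual_review"      # extracted data may be wrong; a person checks it first
    if s.risk_score >= REFER_MIN_RISK:
        return "refer_senior"       # high risk goes to a senior underwriter
    if s.risk_score <= STP_MAX_RISK and s.sum_insured <= STP_MAX_SUM_INSURED:
        return "straight_through"   # simple, low-risk, small case: no human touch
    return "underwriter_queue"


def stp_rate(submissions: List[Submission]) -> float:
    """KPI: share of submissions processed without manual handling."""
    routes = [route(s) for s in submissions]
    return routes.count("straight_through") / len(routes) if routes else 0.0


batch = [
    Submission("S1", 0.10, 0.95, 200_000),
    Submission("S2", 0.10, 0.70, 200_000),
    Submission("S3", 0.70, 0.99, 1_000_000),
    Submission("S4", 0.30, 0.95, 300_000),
]
for s in batch:
    print(s.submission_id, route(s))
print(f"STP rate: {stp_rate(batch):.0%}")
```

Checking extraction confidence first is a deliberate choice: a low-risk score computed from badly extracted data should not be trusted for automatic issuance.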

Model development

Model development requires structured processes for training, testing, and validation. This includes selecting appropriate modeling techniques, defining evaluation metrics, and ensuring models generalize beyond training data. Validation also needs to account for regulatory and business constraints.
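The sketch below shows the basic hold-out pattern on synthetic data: fit on one part, then compare the error on data the model has never seen. The "model" is deliberately simple (per-segment claim frequency, shrunk toward the overall mean in a credibility-style blend), and the Brier score serves as the metric. The segments, true frequencies, and shrinkage weight are assumptions for illustration. A hold-out score much worse than the training score would suggest the model does not generalize.

```python
import random
from collections import defaultdict
from typing import Dict, List, Tuple

Row = Dict[str, object]


def holdout_split(rows: List[Row], holdout_share: float = 0.2,
                  seed: int = 42) -> Tuple[List[Row], List[Row]]:
    """Shuffle once with a fixed seed, then cut off a hold-out set the model never sees."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - holdout_share))
    return shuffled[:cut], shuffled[cut:]


def fit_segment_frequency(train: List[Row], prior_weight: float = 5.0):
    """Claim frequency per segment, shrunk toward the overall mean so that small
    segments do not get extreme estimates."""
    overall = sum(r["claim"] for r in train) / len(train)
    totals = defaultdict(lambda: [0, 0])  # segment -> [claims, policies]
    for r in train:
        totals[r["segment"]][0] += r["claim"]
        totals[r["segment"]][1] += 1
    rates = {seg: (c + prior_weight * overall) / (n + prior_weight)
             for seg, (c, n) in totals.items()}
    return rates, overall


def brier_score(rates, overall, rows: List[Row]) -> float:
    """Mean squared error between predicted probability and the 0/1 outcome (lower is better)."""
    return sum((rates.get(r["segment"], overall) - r["claim"]) ** 2 for r in rows) / len(rows)


# Synthetic data: three segments with assumed true claim frequencies.
TRUE_FREQ = {"A": 0.05, "B": 0.10, "C": 0.20}
gen = random.Random(7)
rows = []
for _ in range(1_000):
    seg = gen.choice(sorted(TRUE_FREQ))
    rows.append({"segment": seg, "claim": int(gen.random() < TRUE_FREQ[seg])})

train, holdout = holdout_split(rows)
rates, overall = fit_segment_frequency(train)
print(f"train Brier: {brier_score(rates, overall, train):.4f}, "
      f"hold-out Brier: {brier_score(rates, overall, holdout):.4f}")
```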

MLOps for deployment and monitoring

Operationalizing AI requires infrastructure for model deployment, versioning, and monitoring. MLOps practices enable continuous tracking of model performance, detection of drift, and controlled updates. This is essential for maintaining accuracy over time.
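Drift detection is often implemented with the Population Stability Index (PSI), which compares how a feature or score is distributed in production versus the training baseline. The minimal Python sketch below computes PSI over fixed bins; the bin edges and data are hypothetical. A widely used rule of thumb (not a formal standard) treats PSI below 0.1 as stable, 0.1 to 0.25 as worth investigating, and above 0.25 as a significant shift.

```python
import bisect
import math
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float],
               floor: float = 1e-6) -> List[float]:
    """Share of values in each bin defined by sorted edges; a small floor avoids log(0)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1  # index = number of edges <= v
    return [max(c / len(values), floor) for c in counts]


def psi(baseline: Sequence[float], production: Sequence[float],
        edges: Sequence[float]) -> float:
    """Population Stability Index: sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_shares(baseline, edges)
    actual = bin_shares(production, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


baseline = list(range(100))                # e.g., a feature's values at training time
production = [v + 30 for v in baseline]    # the same feature after a shift in the portfolio
edges = [25, 50, 75]
print(f"PSI (no change): {psi(baseline, baseline, edges):.3f}")
print(f"PSI (shifted):   {psi(baseline, production, edges):.3f}")
```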

Scaling and governance

Scaling requires a complete rewire of the operating model, redefining the underwriter's role as a consultative, high-value risk advisor as administrative tasks disappear. Insurers must then establish an enterprise-wide governance framework to handle ongoing bias checks, drift detection, and formal human override protocols. Once a pilot succeeds in one line, such as personal auto, the organization can use those reusable AI components to scale vertically into more complex sectors, such as commercial property or life insurance.

Key pillars for success

Scaling AI in underwriting isn't just about better code—it’s about balancing high-speed automation with ironclad legal and ethical guardrails. Here is how to navigate the critical landscape of regulatory compliance and model integrity.

Regulatory and compliance

AI in underwriting is subject to increasing regulation. In the US, the Fair Credit Reporting Act (FCRA) applies when insurers use consumer reports (e.g., credit-based insurance scores). If coverage or pricing is negatively affected, insurers must provide actionable adverse action notices with specific reason codes. This effectively requires using explainable models whose outputs can be mapped to those reasons.
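For a linear or additive scoring model, one simple way to derive reason codes is to measure how much each feature pushed an applicant's score above a reference profile and report the largest adverse contributions. The sketch below assumes a hypothetical linear model, baseline applicant, and reason text. It illustrates the mechanics only and does not describe what FCRA compliance requires.

```python
from typing import Dict, List

# Hypothetical linear risk model: positive weights raise the risk score.
WEIGHTS = {"prior_claims": 0.6, "credit_tier": 0.15, "years_insured": -0.05}
# Hypothetical reference applicant against which contributions are measured.
BASELINE = {"prior_claims": 0, "credit_tier": 2, "years_insured": 10}
# Hypothetical mapping from model features to consumer-facing reason text.
REASON_TEXT = {
    "prior_claims": "Number of prior claims",
    "credit_tier": "Credit-based insurance score",
    "years_insured": "Length of continuous insurance history",
}


def reason_codes(features: Dict[str, float], top_k: int = 2) -> List[str]:
    """Rank features by how much they pushed this applicant's score above the baseline."""
    contributions = {f: w * (features[f] - BASELINE[f]) for f, w in WEIGHTS.items()}
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    adverse = [f for f, c in ranked if c > 0]  # only factors that worsened the outcome
    return [REASON_TEXT[f] for f in adverse[:top_k]]


print(reason_codes({"prior_claims": 2, "credit_tier": 4, "years_insured": 3}))
```

For complex non-linear models, the same idea requires attribution techniques such as Shapley-value approximations, which is one reason explainability complicates model design.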

The EU AI Act (Regulation 2024/1689) classifies AI systems used in life and health insurance underwriting as high-risk, introducing strict requirements for their development and use.

Insurers must guarantee data quality and representativeness, maintain detailed documentation and audit trails, and implement transparency mechanisms that make model outputs interpretable. The regulation also mandates ongoing risk management, bias monitoring, and human oversight, including the ability to override automated decisions.

Most provisions apply from August 2026, so insurers need to establish formal governance frameworks and compliance processes to avoid regulatory penalties.

Model interpretability

Explainable AI (XAI) is not merely a compliance requirement; it is also a tool for model debugging and trust-building. If a pricing model starts assigning high risk based on a nonsensical feature (a typical symptom of overfitting, spurious correlations, or data drift), XAI helps data scientists identify and correct the error before it impacts the bottom line.
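One simple diagnostic for this situation is to shuffle a single input column and measure how much the model's predictions change; a feature the model ignores scores exactly zero. This is a variant of permutation importance that tracks prediction movement rather than accuracy loss. In the sketch below, the pricing model, its accidental dependence on a policy number digit, and the synthetic data are all hypothetical.

```python
import random
from typing import Callable, Dict, List

Row = Dict[str, int]


def pricing_model(row: Row) -> float:
    """Hypothetical trained model that has (wrongly) picked up the policy number's last digit."""
    return 0.05 + 0.04 * row["prior_claims"] + 0.01 * row["policy_number_last_digit"]


def permutation_sensitivity(predict: Callable[[Row], float], rows: List[Row],
                            feature: str, seed: int = 0) -> float:
    """Shuffle one column and return the mean absolute change in predictions.
    A feature the model ignores scores exactly 0."""
    shuffled = [r[feature] for r in rows]
    random.Random(seed).shuffle(shuffled)
    base = [predict(r) for r in rows]
    permuted = [predict({**r, feature: v}) for r, v in zip(rows, shuffled)]
    return sum(abs(b - p) for b, p in zip(base, permuted)) / len(rows)


gen = random.Random(1)
rows = [{"prior_claims": gen.randint(0, 3),
         "policy_number_last_digit": gen.randint(0, 9),
         "vehicle_color_code": gen.randint(0, 5)} for _ in range(200)]

for feature in rows[0]:
    print(f"{feature}: {permutation_sensitivity(pricing_model, rows, feature):.4f}")
```

A clearly non-zero score for `policy_number_last_digit` is the kind of signal that should prompt a data scientist to investigate before the model reaches production.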

It’s worth noting that many high-performing models are difficult to interpret. This creates a need for additional explainability techniques, which complicates model design and deployment.

Fairness and bias mitigation

Underwriting models must be monitored for bias, as decisions can directly affect individuals. Insurers apply mitigation techniques at different stages: adjusting training data to reduce bias, adding fairness constraints during model training, or calibrating outputs to meet fairness criteria. The models should be regularly tested for disparate impact (e.g., differences in approval or pricing across different gender, age, or race groups). If issues are detected, models should be adjusted—for example, by removing proxy features or retraining with updated constraints.
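A basic disparate impact check compares approval rates across groups. The sketch below computes each group's rate relative to the best-treated group and flags ratios under 0.8, a threshold borrowed from the "four-fifths" rule of thumb in US employment selection guidelines; here it is an assumption, not an insurance-specific legal standard. Pricing disparities would need a different metric (for example, comparing average premiums for comparable risks), and the data below is synthetic.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def approval_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """decisions: (group label, approved?) pairs -> approval rate per group."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def adverse_impact_ratios(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Each group's approval rate divided by the highest group's rate."""
    rates = approval_rates(decisions)
    best = max(rates.values())
    if best == 0:
        return {g: 1.0 for g in rates}  # nobody approved: no relative disparity to measure
    return {g: r / best for g, r in rates.items()}


def flag_groups(ratios: Dict[str, float], threshold: float = 0.8) -> List[str]:
    """Groups whose ratio falls below the threshold (0.8 mirrors the four-fifths heuristic)."""
    return sorted(g for g, r in ratios.items() if r < threshold)


# Synthetic example: group A approved 80 of 100, group B approved 60 of 100.
decisions = [("A", i < 80) for i in range(100)] + [("B", i < 60) for i in range(100)]
ratios = adverse_impact_ratios(decisions)
print(ratios, flag_groups(ratios))
```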

Model validation and monitoring

Underwriting models require continuous validation before and after deployment. Initial testing uses standard metrics (e.g., accuracy, calibration) and hold-out datasets. In production, insurers monitor performance, detect data drift, and run periodic bias checks. All decisions are logged for auditability, including model version and inputs. Many insurers also use “champion–challenger” setups, where new models are tested in parallel and replace existing ones if they perform better.
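The champion–challenger pattern and decision logging can be sketched together. In this minimal example, only the champion's outputs are logged as decisions, while the challenger is scored in shadow on the same cases and is promoted only if it improves the Brier score by a margin. The models, the 0.005 promotion margin, and the log format are hypothetical; in production, claim outcomes arrive months later and the comparison would run on a much larger sample.

```python
import json
from datetime import datetime, timezone
from typing import Callable, Dict, List, NamedTuple

Row = Dict[str, float]


class VersionedModel(NamedTuple):
    version: str
    predict: Callable[[Row], float]


def log_decision(audit_log: List[str], model: VersionedModel, inputs: Row,
                 output: float) -> None:
    """Append one JSON line with timestamp, model version, inputs, and output."""
    audit_log.append(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "model_version": model.version,
        "inputs": inputs,
        "output": output,
    }, sort_keys=True))


def brier(model: VersionedModel, rows: List[Row], outcomes: List[int]) -> float:
    """Mean squared error between predicted probability and the 0/1 outcome."""
    return sum((model.predict(r) - y) ** 2 for r, y in zip(rows, outcomes)) / len(rows)


def champion_challenger(champion: VersionedModel, challenger: VersionedModel,
                        rows: List[Row], outcomes: List[int], audit_log: List[str],
                        min_gain: float = 0.005) -> str:
    """Only the champion's outputs are used (and logged) as decisions; the challenger runs
    in shadow. Returns the version that should be champion going forward."""
    for r in rows:
        log_decision(audit_log, champion, r, champion.predict(r))
    gain = brier(champion, rows, outcomes) - brier(challenger, rows, outcomes)
    return challenger.version if gain > min_gain else champion.version


champion = VersionedModel("v1", lambda r: 0.3)
challenger = VersionedModel("v2", lambda r: min(0.9, 0.1 + 0.3 * r["prior_claims"]))
rows = [{"prior_claims": float(n)} for n in range(4)]
outcomes = [0, 0, 1, 1]
audit_log: List[str] = []
winner = champion_challenger(champion, challenger, rows, outcomes, audit_log)
print(winner)
```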

Olga is a tech journalist at AltexSoft, specializing in travel technologies. With over 25 years of experience in journalism, she began her career writing travel articles for glossy magazines before advancing to editor-in-chief of a specialized media outlet focused on science and travel. Her diverse background also includes roles as a QA specialist and tech writer.
