Bias in machine learning

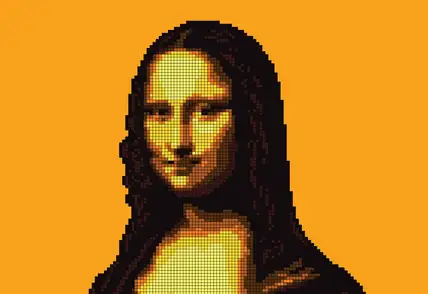

Bias in machine learning refers to systematic errors that cause a model to produce skewed or unfair results. It happens when a model consistently favors certain outcomes, groups, or patterns over others, not because of real-world differences, but due to how it was designed, trained, or evaluated.

Common types of bias include:

- data bias, where training data is incomplete, unbalanced, or unrepresentative;

- historical bias, where past social or institutional inequalities are reflected in the data;

- algorithmic bias, introduced through model design or optimization choices; and

- human bias, including unconscious assumptions made during data collection, labeling, or evaluation.
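Data bias of the unbalanced or unrepresentative kind can often be spotted before any model is trained. You compare how often each group appears in the training data with that group's share of a reference population. The sketch below assumes records stored as Python dictionaries. The helper names `group_shares` and `underrepresented`, the `tolerance` threshold, and the toy data are all hypothetical and chosen only for illustration.

```python
from collections import Counter

def group_shares(records, attribute):
    """Return the fraction of records that fall into each group of `attribute`."""
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}

def underrepresented(records, attribute, reference, tolerance=0.5):
    """List groups whose share in the data is below `tolerance` times
    their share in a reference population (hypothetical threshold)."""
    shares = group_shares(records, attribute)
    return sorted(
        group for group, ref_share in reference.items()
        if shares.get(group, 0.0) < tolerance * ref_share
    )

# Hypothetical toy training set: 8 male and 2 female past applicants.
training = [{"gender": "male"}] * 8 + [{"gender": "female"}] * 2
# Hypothetical reference population, split evenly.
reference = {"male": 0.5, "female": 0.5}

print(group_shares(training, "gender"))                 # {'male': 0.8, 'female': 0.2}
print(underrepresented(training, "gender", reference))  # ['female']
```

A check like this catches missing or under-sampled groups. It says nothing about historical or human bias, which can be present even in a perfectly balanced dataset.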

Bias can have serious impacts, especially in areas like hiring, lending, healthcare, and law enforcement, where unfair predictions may affect people’s lives. For example, if a CV screening system is trained on data where most past hires were male, the model may learn to favor male candidates and rank female applicants lower, not because they are less qualified, but because of an imbalance in the training data.
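One way to check whether a screener behaves as in this example is to compare how often it shortlists candidates from each group. The following minimal sketch computes per-group selection rates and the ratio of the lowest rate to the highest. The function names and the ten decisions are hypothetical.

```python
def selection_rates(predictions, groups):
    """Fraction of candidates shortlisted (prediction == 1) in each group."""
    totals, selected = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + pred
    return {g: selected[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    return min(rates.values()) / max(rates.values())

# Hypothetical shortlisting decisions from a CV screener (1 = shortlisted).
preds  = [1, 1, 1, 0, 1, 0, 0, 1, 0, 0]
groups = ["m", "m", "m", "m", "m", "f", "f", "f", "f", "f"]

rates = selection_rates(preds, groups)
print(rates)                          # {'m': 0.8, 'f': 0.2}
print(disparate_impact_ratio(rates))  # 0.25
```

A ratio well below 1 signals that one group is selected far less often. In US employment practice, a ratio under 0.8 (the "four-fifths rule") is a commonly cited threshold for flagging possible adverse impact. A low ratio alone does not establish why the gap exists.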

Explainable AI (XAI) plays an important role in identifying and reducing bias. By making model decisions easier to understand, XAI helps practitioners see which features or data points influence outcomes, making hidden biases more visible.
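A simple, model-agnostic explanation technique of this kind is permutation feature importance. You shuffle one feature's values across the dataset and measure how much the model's accuracy drops. A large drop for a sensitive attribute such as gender suggests the model relies on it. The sketch below is a minimal pure-Python version. The `screener` function stands in for a trained model and, like the feature names and toy data, is hypothetical. scikit-learn offers a ready-made equivalent in `sklearn.inspection.permutation_importance`.

```python
import random

FEATURES = ["years_experience", "is_male", "postcode"]

def screener(row):
    """Hypothetical biased screener: being male adds 3 'points' to the score."""
    return 1 if row["years_experience"] + 3 * row["is_male"] >= 5 else 0

def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(labels)

def permutation_importance(model, rows, labels, feature, n_repeats=20, seed=0):
    """Mean drop in accuracy when one feature's values are shuffled across rows."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    drops = []
    for _ in range(n_repeats):
        column = [r[feature] for r in rows]
        rng.shuffle(column)
        # Copy each row, replacing only the shuffled feature.
        permuted = [{**r, feature: v} for r, v in zip(rows, column)]
        drops.append(baseline - accuracy(model, permuted, labels))
    return sum(drops) / n_repeats

# Hypothetical applicants: (years_experience, is_male, postcode digit).
data = [(2, 1, 3), (3, 1, 7), (1, 1, 2), (6, 1, 5),
        (4, 0, 1), (3, 0, 8), (6, 0, 4), (2, 0, 9)]
rows = [dict(zip(FEATURES, values)) for values in data]
labels = [1, 1, 0, 1, 0, 0, 1, 0]  # hypothetical past shortlisting decisions

for feature in FEATURES:
    print(feature, round(permutation_importance(screener, rows, labels, feature), 3))
```

Because `screener` never reads `postcode`, that feature scores exactly zero. `is_male` receives a positive score, exposing the gender bonus hidden inside the model.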