When we built Interlace, our code knowledge graph platform, we deliberately chose to skip the graphical interface entirely and build an MCP server instead, one that coding assistants could connect to directly. For a tool whose entire purpose is to give AI agents structured, persistent knowledge about a codebase, putting a dashboard in the middle would have been the wrong abstraction. The agents are the interface.

In this piece, we walk through that reasoning and share what we learned along the way from Ismail, the engineer who implemented the MCP server, including a few things he wishes he had known before starting.


What is Interlace?

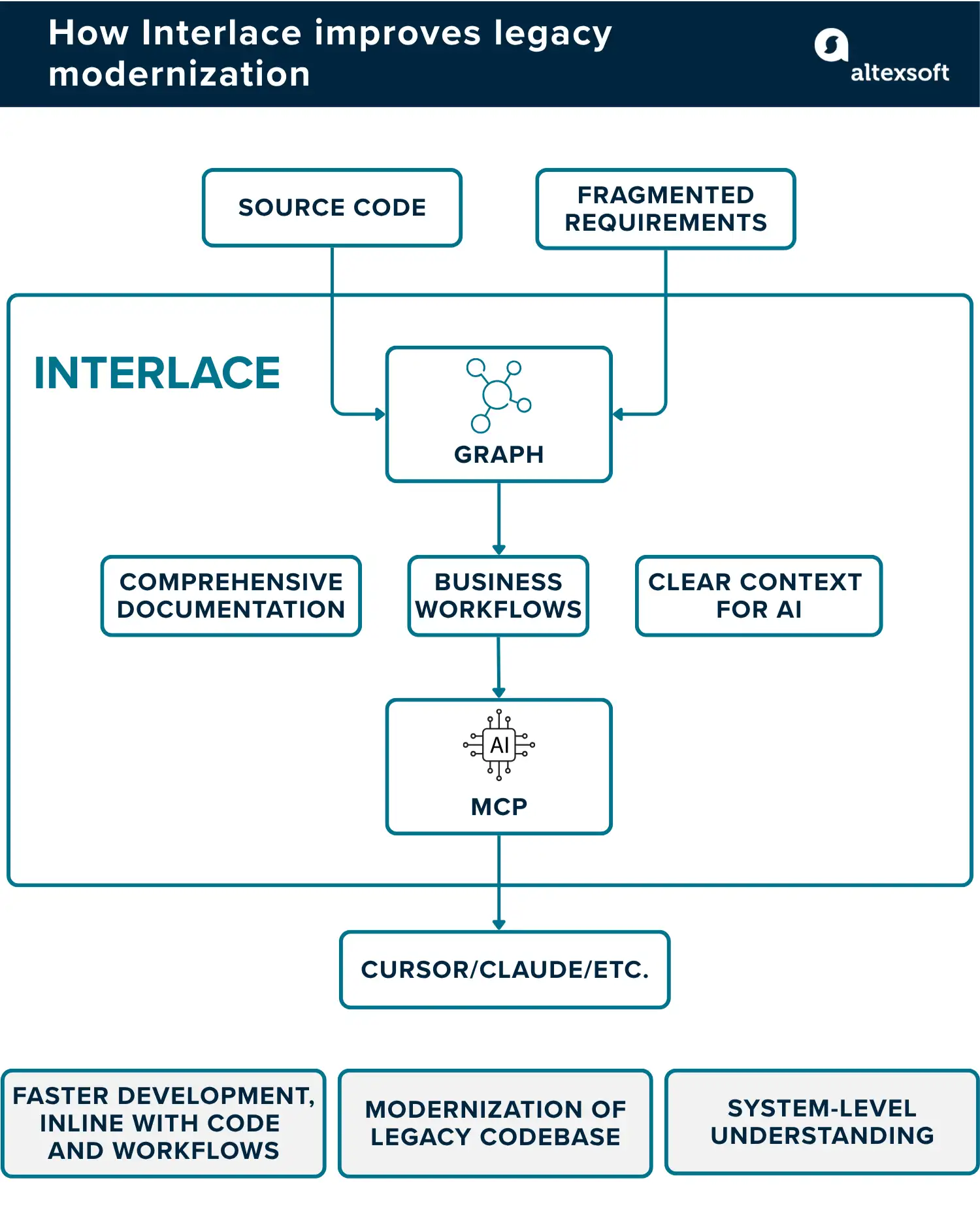

Before AI coding tools like Cursor and Claude Code can be truly useful on a complex legacy codebase, they need to understand it, not just file by file, but as a living, interconnected system. That's the problem Interlace, a code knowledge graph platform developed by AltexSoft, was built to solve.

Interlace performs automated reverse engineering of software systems and stores the discovered knowledge as a persistent, queryable knowledge graph in FalkorDB, which AI agents can access via an MCP server. Where tools like Cursor operate within a single session's context window and reset when that window closes, Interlace builds a durable, system-wide understanding of a codebase that persists across sessions, teams, and tools.

The platform runs a structured nine-phase analysis pipeline, powered by the Anthropic API, that takes a codebase from raw source files all the way to business-level understanding: structural discovery, endpoint and entity extraction, dependency mapping, service grouping, user flow reconstruction, and auto-generated documentation. Everything ends up in a graph where every function is placed in context, showing which user flow it belongs to, what services it depends on, and what might break if it changes.

Why an MCP server and not a GUI?

A major question when building Interlace was what the interaction layer should be. The go-to answer for most software products is a visual interface, typically a dashboard, where engineers can explore nodes, filter relationships, and trace dependencies.

We took a different direction and exposed the graph strictly through an MCP server. Ismail Aslan, a Machine Learning Engineer who was involved in the development, explains why: “In a standard dashboard, there is no reasoning or LLM capability. You have filters, and you click through elements, but you can’t query the system and get a contextual response.”

A dashboard surfaces information and leaves the interpretation to the human. On the other hand, an MCP connection puts the graph behind a model that can reason over what it finds, which creates a fundamentally different experience.

“The LLM reasoning plus the connection with the graph database means the agent can retrieve structural information about the codebase and reason over it in context. That kind of capability is distinct from a standard dashboard,” Ismail says.

Beyond the reasoning capability, we also chose an MCP server because of where developer workflows actually live. Engineers working with Interlace are already inside coding tools like Claude Code and Cursor. Sending them to a separate interface to look something up and bring the answer back manually would have introduced exactly the kind of friction Interlace is designed to remove. With the graph connected directly to an LLM, developers never have to leave their environment.

The MCP server approach is also a solid bet as far as future-proofing and long-term capability are concerned. MCP is a protocol rather than a custom integration, so Interlace’s MCP server works with any client that supports it. As new AI coding tools emerge, the server is compatible with them by default. A bespoke dashboard would need to be updated and maintained independently every time the ecosystem shifted.

What we learned building Interlace's MCP server

Building Interlace’s MCP server taught us several lessons, partly because MCP is still a relatively new approach. Here are the most important ones.

The real user is the LLM, so design for it

The most important thing to get right before writing a single line of code is understanding who your MCP tools are actually built for. Some developers instinctively build for themselves, but as Ismail puts it, “With MCP, the primary user is the LLM or agent, not the human.”

In practice, the workflow looks like this:

- The developer asks a question inside an MCP client.

- The LLM formats the question and calls the relevant MCP tool.

- The tool queries the Interlace knowledge graph and returns the result.

- The LLM reasons over that result and delivers a response in context.
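To make the middle two steps concrete, here is a minimal sketch of what happens on the server side when the model calls a tool. It is not Interlace's implementation: the `find_callers` tool, the in-memory `GRAPH` dictionary standing in for the knowledge graph, and the `handle_tool_call` dispatcher are all hypothetical names chosen for illustration.

```python
from typing import Any, Callable

# Hypothetical stand-in for the knowledge graph:
# each key is a function, each value lists the functions that call it.
GRAPH: dict[str, list[str]] = {
    "charge_card": ["checkout", "retry_payment"],
    "checkout": ["place_order"],
}


def find_callers(function_name: str) -> dict[str, Any]:
    """Hypothetical tool: return the direct callers of a function."""
    return {"function": function_name, "callers": GRAPH.get(function_name, [])}


# Registry of tool names the model can choose from.
TOOLS: dict[str, Callable[..., dict[str, Any]]] = {"find_callers": find_callers}


def handle_tool_call(name: str, arguments: dict[str, Any]) -> dict[str, Any]:
    """Dispatch a tool call issued by the model and return a structured result."""
    if name not in TOOLS:
        # Return a readable error so the model can recover, rather than raising.
        return {"error": f"unknown tool: {name}", "available": sorted(TOOLS)}
    return TOOLS[name](**arguments)


# The model decided that "what calls charge_card?" maps to this tool call.
result = handle_tool_call("find_callers", {"function_name": "charge_card"})
print(result)
```

The structured result goes back to the model, which then writes the answer the developer actually reads.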

Knowing that LLMs are the “true” audience of MCP servers changes what you have to consider. One area this affects is how many tools you expose. The model has to decide on its own which tool fits the task, and the more options you give it, the more room there is for it to pick the wrong one or burn through context unnecessarily.

“When you start giving a lot of different tools to your model, it starts getting confused,” Ismail explains. “You have to be careful what tools you are giving it, because context size is one of the main problems here.” A few well-scoped tools built on top of your APIs will serve you better than a large, loosely defined set.

Part of the reason for this is that every tool you define comes with a description, and every description takes up space in the context window. So the problem compounds: More tools mean more descriptions, which means less room for the actual work the agent is trying to do.

A model working through a complex codebase question that also has to hold fifty tool descriptions in context is not going to perform as well as one that only has to reason over the ten tools it will realistically need. The practical answer is to design tools around what the agent is trying to accomplish rather than mirroring your internal API structure directly.

Tool descriptions are closer to prompts than documentation

One of the things you will have to do when defining the tools your MCP server exposes is write descriptions for each of them. The tendency is to treat this the way you would a code comment, a quick line explaining what the function does, written for another developer who might read it later. That instinct is understandable, but it is the wrong one here.

The description field, just like the tool itself, is not for the developer. “Tool descriptions are what models read when they decide whether to use a tool and how to use it, and if the descriptions are vague or overlap with another tool’s, the model will make the wrong call,” says Ismail. Write each description the way you would write a detailed prompt.

It also helps to be specific about edge cases. If a tool should not be used for a certain type of query, say so.
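For illustration, here is how a prompt-style description might differ from a comment-style one. MCP tool definitions carry a `name`, a `description`, and an `inputSchema` expressed as JSON Schema; the specific tool, wording, and parameters below are hypothetical and are not taken from Interlace.

```python
# Comment-style description: fine for a developer, too vague for a model.
VAGUE_DESCRIPTION = "Gets callers."

# Hypothetical prompt-style tool definition. It states what the tool does,
# when to use it, when NOT to use it, and what comes back.
FIND_CALLERS_TOOL = {
    "name": "find_callers",
    "description": (
        "Return the functions that directly call the given function. "
        "Use this when the user asks what depends on a function or what "
        "might break if it changes. "
        "Do not use this for service-level dependencies or for full-text "
        "search over source code. "
        "Returns at most `limit` callers per call."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "function_name": {
                "type": "string",
                "description": "Exact name of the function to look up.",
            },
            "limit": {"type": "integer", "minimum": 1, "default": 20},
        },
        "required": ["function_name"],
    },
}

print(FIND_CALLERS_TOOL["description"])
```

The explicit "Do not use this for" sentence is where the edge cases go, which helps the model separate this tool from neighbors with similar names.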

Context window management is a structural concern, not an afterthought

Knowledge graphs can return a lot of data, which became an issue early in the build. The MCP server’s results were often large enough to saturate a model's context window.

We solved this by limiting what is returned by default, applying pagination so the model can request more when needed, and summarizing results instead of dumping all the raw data.
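A minimal sketch of that pattern, assuming a cursor-based scheme: the tool returns a short summary, one page of items, and a cursor the model can pass back to get the next page. The `paginate` helper, the default of 20 items, and the field names are illustrative assumptions, not Interlace's actual response format.

```python
from typing import Any

DEFAULT_LIMIT = 20  # assumed default page size


def paginate(items: list[str], cursor: int = 0, limit: int = DEFAULT_LIMIT) -> dict[str, Any]:
    """Return one page of results plus a summary and a cursor for the next page."""
    cursor = max(cursor, 0)
    page = items[cursor:cursor + limit]
    end = cursor + len(page)
    return {
        # The summary lets the model decide whether it needs more at all.
        "summary": f"{len(items)} matches; showing {len(page)} starting at {cursor}",
        "items": page,
        # None signals that there is nothing left to fetch.
        "next_cursor": end if end < len(items) else None,
    }


matches = [f"fn_{i}" for i in range(45)]
first_page = paginate(matches)
last_page = paginate(matches, cursor=40)
print(first_page["summary"])
print(last_page["next_cursor"])
```

Mentioning `next_cursor` in the tool description tells the model that more results exist and how to ask for them.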

This is worth thinking about before you ship. Claude Code, for instance, shows a warning when any MCP tool output crosses 10,000 tokens, with a default limit of 25,000 tokens, past which tool calls fail. If your tools can return unbounded results, you will eventually hit that limit in production, and you may not know until a real user runs into it.
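One way to catch this before a user does is a size check in your own test suite. The sketch below uses the two thresholds mentioned above and a rough four-characters-per-token estimate. That ratio is an assumption for illustration, not how any client actually counts tokens, and the function names are hypothetical.

```python
WARN_TOKENS = 10_000   # warning threshold mentioned above
MAX_TOKENS = 25_000    # limit mentioned above
CHARS_PER_TOKEN = 4    # rough heuristic (assumption), not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN + 1


def classify_output(text: str) -> str:
    """Classify a tool output as 'ok', 'warn', or 'too_large'."""
    tokens = estimate_tokens(text)
    if tokens > MAX_TOKENS:
        return "too_large"
    if tokens > WARN_TOKENS:
        return "warn"
    return "ok"


print(classify_output("x" * 100))
print(classify_output("x" * 50_000))
print(classify_output("x" * 120_000))
```

Running a check like this against the largest realistic query for each tool shows which ones need a tighter default limit.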

Test with the actual MCP clients that your users work with

Claude Code and Cursor do not behave the same way as MCP clients. A server that works fine in one environment can break in the other, because of how each client handles things like tool call sequencing, error responses, and configuration.

Cursor, for example, will ask for parameter values when a prompt needs them. Claude Code, on the other hand, expects you to have written the command in exactly the right format from the start, and if you haven't, it either fails silently or does nothing useful.

You will not find these things by testing against a generic MCP setup. You have to test against the actual tools your users are working with.

With a software engineering background, Nefe demystifies technology-specific topics—such as web development, cloud computing, and data science—for readers of all levels.
