Many organizations reach a point where their software becomes difficult to fully understand. It’s not just about age. More often, it stems from accumulated changes, developers moving on, outdated or missing documentation, and evolving requirements that gradually obscure the original design.

To navigate this complexity, IT teams map their codebases—creating structured models that show how a system is organized and how its parts relate. In this article, we explore the main approaches to codebase mapping, how the practice has evolved, and how agentic AI solutions such as Interlace—AltexSoft’s code knowledge graph platform—are expanding what’s possible.

Read our article on AI agents to learn more about them and how they work in detail.

What is codebase mapping?

Codebase mapping is the process of building a structured, queryable model of how a software system is organized—its components, dependencies, data flows, and the logic that connects them.

While code documentation is helpful, mapping goes further. It gives you a standalone artifact that contains the context needed to understand how the system fits together as a whole, not just what individual parts do in isolation.

A useful map answers questions that the code itself cannot answer quickly, such as:

- Which services depend on each other, and how tightly?

- Which database tables are touched by specific API endpoints, and how?

- How does a user journey like checkout actually flow through multiple services?

- Which parts of a monolith are safe to extract as microservices, and in what order?

Code on its own is a poor record of the reasoning behind it. Mapping bridges the gap between raw source and human understanding. It becomes a source of truth you can rely on to make grounded decisions—whether you’re making updates, onboarding new team members, or modernizing the system.

Types of codebase maps

Code maps come in several forms, each suited to different software systems and scales of complexity.

Hierarchical maps

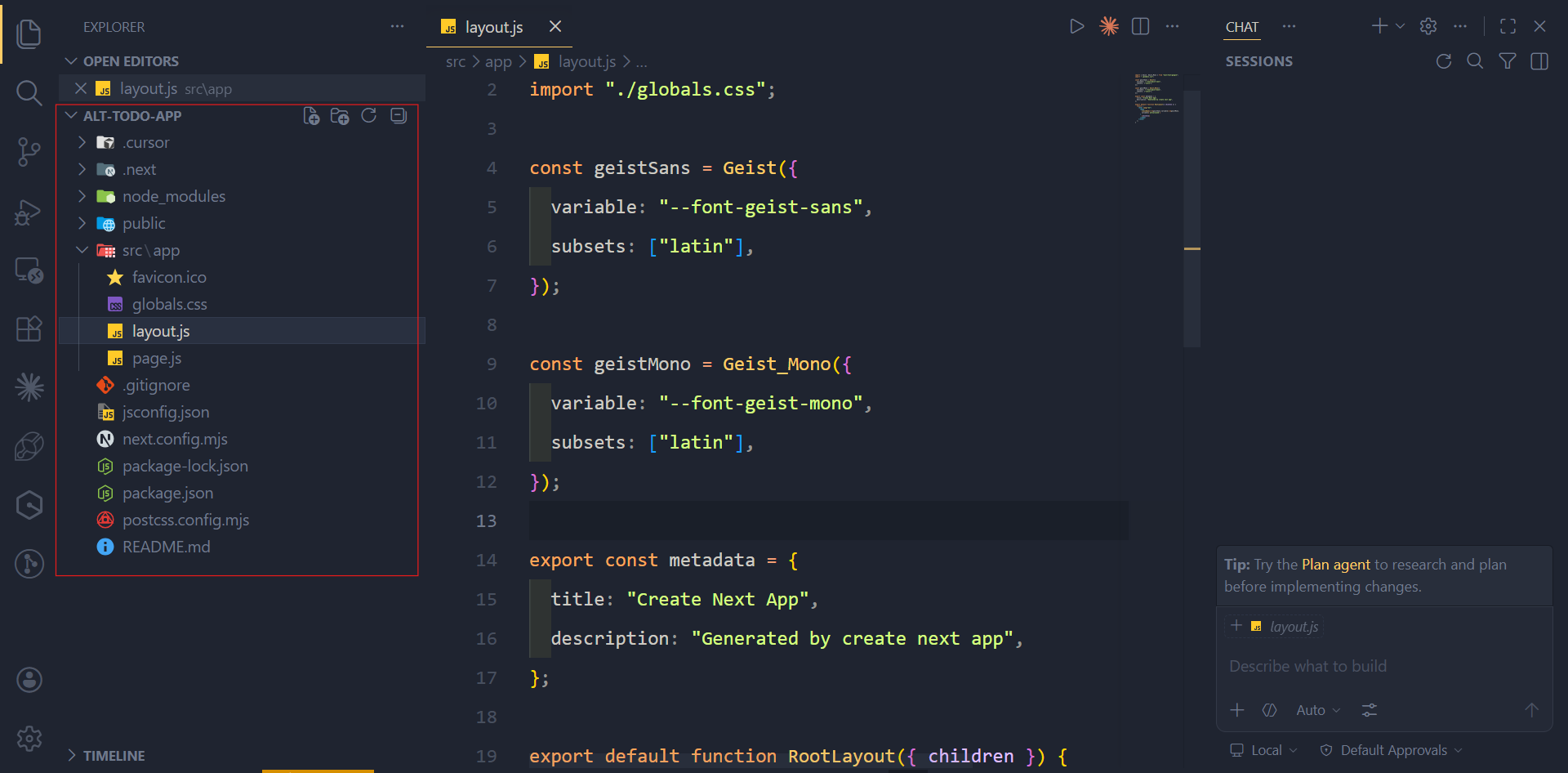

Hierarchical maps organize a codebase by its folder and module structure. They are the starting point most engineers default to when approaching an unfamiliar system, and for good reason. Before you can ask meaningful questions about how a system works, you need a basic orientation, such as which languages are in use, how the team has grouped files, and roughly where different concerns live.

You can build this view directly from the directory tree. Most IDEs and code editors render it automatically, and tools like the tree command on Linux or the file explorer in VS Code and Cursor provide the same structure.
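As a rough illustration of how little is needed for this view, the sketch below renders a directory tree with Python's standard library. The function name `render_tree` and the "directories first" ordering are choices made for this example, not the behavior of any particular editor or of the `tree` command.

```python
from pathlib import Path


def render_tree(root: Path, prefix: str = "") -> list[str]:
    """Return the lines of an indented listing of root's contents."""
    lines = []
    # Directories first, then files, each group sorted by name.
    entries = sorted(root.iterdir(), key=lambda p: (p.is_file(), p.name))
    for index, entry in enumerate(entries):
        last = index == len(entries) - 1
        lines.append(f"{prefix}{'└── ' if last else '├── '}{entry.name}")
        if entry.is_dir():
            # Continue the vertical guide only if more siblings follow.
            lines.extend(render_tree(entry, prefix + ("    " if last else "│   ")))
    return lines
```

Calling `print("\n".join(render_tree(Path("."))))` from a repository root prints the hierarchy. Nothing in the output says which files actually depend on each other.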

Use case. Getting an initial foothold in a completely unfamiliar codebase before moving to deeper analysis.

Pros. No additional tooling or processing required. It gives new engineers a quick, low-effort overview before diving into the code itself.

Cons. It reveals little about how parts of the code interact, which is usually what matters most. Files in the same folder may be unrelated, while files in different directories can be tightly coupled.

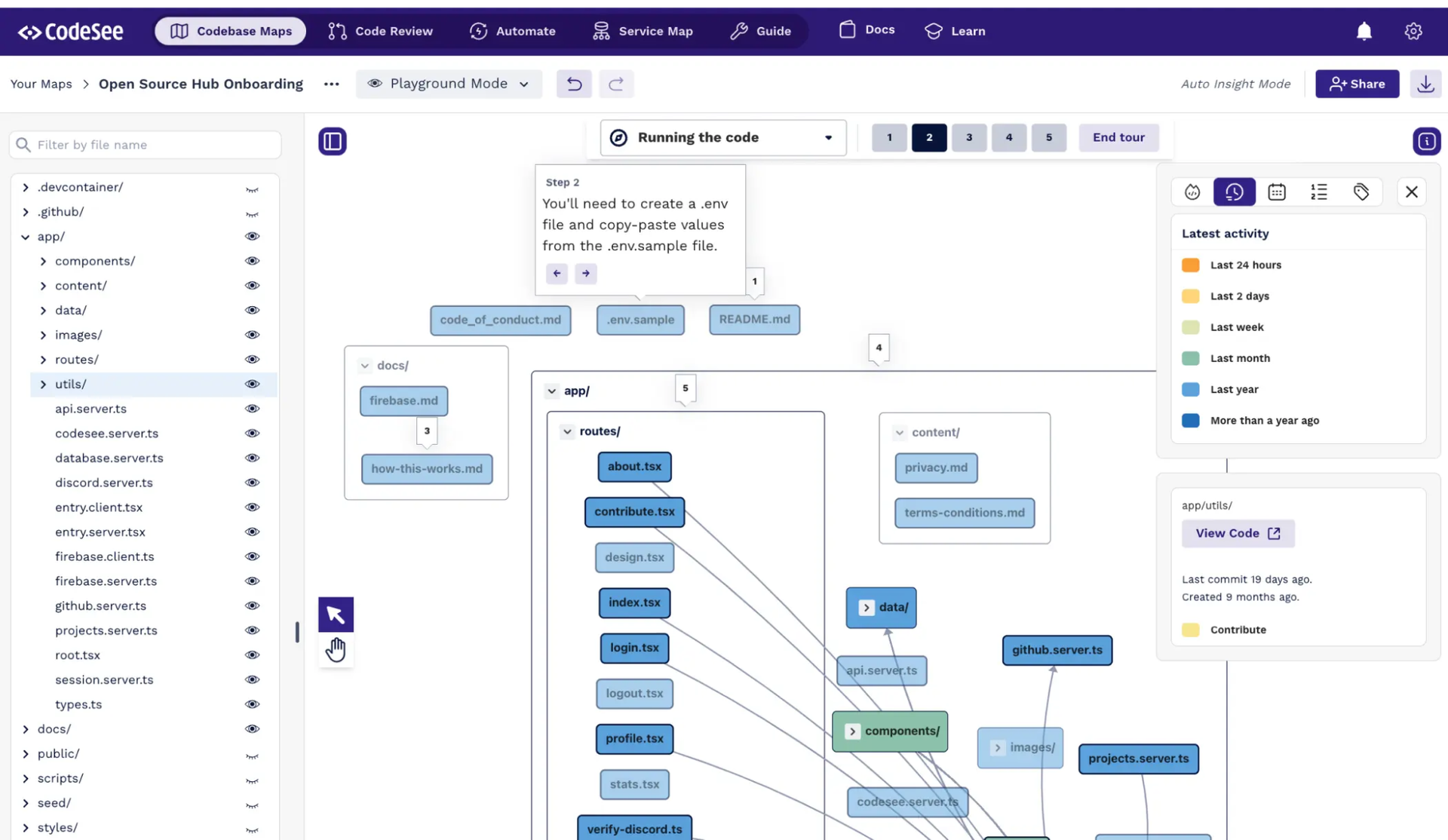

Dependency maps

Dependency maps trace connections between components such as modules, packages, services, and libraries. They’re typically generated using code analysis tools that examine the codebase—analyzing import statements, function calls, and interface boundaries—to produce a visual graph of how components depend on one another. While most rely on static analysis, some incorporate runtime data for more accurate insights.

Most major programming languages have mature tooling for generating dependency graphs—for example, Madge for JavaScript/TypeScript and PyDeps for Python.
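To make the idea of import-based analysis concrete, here is a minimal sketch that uses Python's built-in `ast` module to extract import edges between modules of one project. It is far simpler than dedicated tools such as Madge or PyDeps. The module names in `EXAMPLE_PROJECT` are hypothetical, and relative imports are deliberately ignored.

```python
import ast


def imported_modules(source: str) -> set[str]:
    """Return the top-level module names imported by a Python source string."""
    modules = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped.
            modules.add(node.module.split(".")[0])
    return modules


def dependency_edges(files: dict[str, str]) -> set[tuple[str, str]]:
    """Map {module_name: source} to (importer, imported) edges inside the project."""
    return {
        (name, dep)
        for name, source in files.items()
        for dep in imported_modules(source)
        if dep in files and dep != name  # drop third-party and stdlib imports
    }


# Hypothetical three-module project used only for illustration.
EXAMPLE_PROJECT = {
    "orders": "import payments\nfrom inventory import reserve\nimport json\n",
    "payments": "import inventory\n",
    "inventory": "",
}
import_edges = dependency_edges(EXAMPLE_PROJECT)
```

Here `import_edges` holds three edges: `orders` to `payments`, `orders` to `inventory`, and `payments` to `inventory`. The `json` import is dropped because it is not part of the project.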

Use case. Planning changes to shared components, identifying which parts of a system are safe to modify independently, and understanding how tightly coupled different areas of the codebase are before a migration.

Pros. With static analysis, the output is deterministic: Given the same tool and configuration, the same codebase will always produce the same map.

Cons. Dependency maps show structure, not behavior. Knowing that Service A calls Service B doesn’t reveal what data gets passed, under what conditions, or why that relationship exists in the first place.

Flow-based maps

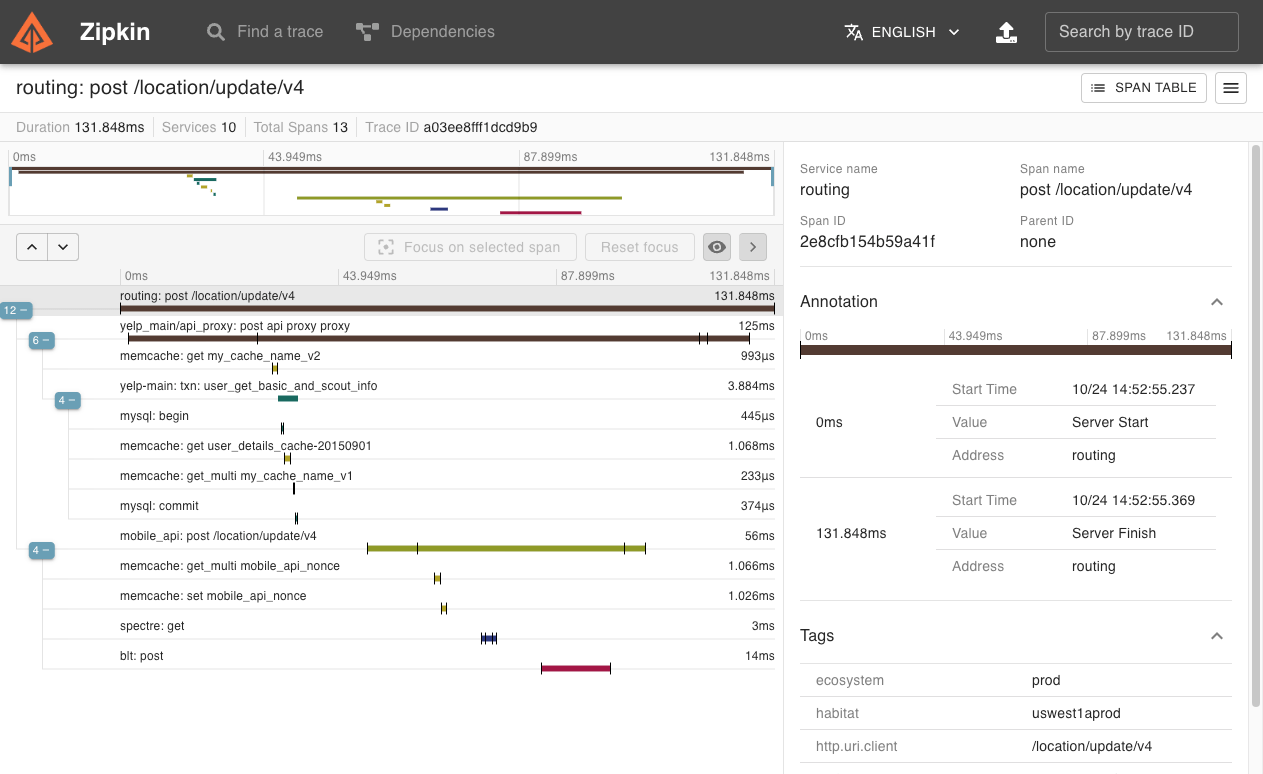

Flow-based maps trace how data actually moves through a system during execution. While a system may include dozens of services, functions, and integrations, only certain sequences are activated at runtime depending on user actions or triggered events. For example, when a user places an order, the request may flow through authentication and payment services, while reading from an inventory table and writing to an orders table.

Flow-based maps capture these execution paths, recording the order in which operations occur and how long each step takes. They reflect how a system behaves in practice—not just how it was designed.

You can build flow-based maps in several ways.

Runtime instrumentation. Tools like Zipkin or Datadog APM attach to a running system and record request traces as they happen. This is the most accurate way to capture real execution paths.

Log and event analysis. By parsing application logs and event streams, you can reconstruct the sequence and timing of operations in a running—or recently executed—system. This approach is useful when tracing is not fully implemented.

Manual reconstruction. You can also infer flows by reading request handlers, middleware chains, and event-processing logic. While less precise and harder to scale, this method helps fill gaps when runtime data is incomplete or unavailable.
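The log-analysis approach above can be sketched in a few lines. The example assumes a made-up log format (`<ISO timestamp> trace=<id> service=<name>`) and that every line carries a trace ID. Real logs are rarely this tidy, so treat the parsing as a placeholder.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical log lines, deliberately out of order.
LOG_LINES = [
    "2024-05-01T12:00:00.250 trace=t1 service=payment",
    "2024-05-01T12:00:00.000 trace=t1 service=auth",
    "2024-05-01T12:00:00.900 trace=t1 service=orders",
    "2024-05-01T12:00:01.000 trace=t2 service=auth",
]


def reconstruct_flows(lines):
    """Group log lines by trace ID and order each group by timestamp."""
    flows = defaultdict(list)
    for line in lines:
        stamp, trace_field, service_field = line.split()
        trace_id = trace_field.split("=", 1)[1]
        service = service_field.split("=", 1)[1]
        flows[trace_id].append((datetime.fromisoformat(stamp), service))
    return {trace_id: sorted(steps) for trace_id, steps in flows.items()}


def step_durations(steps):
    """Return (from_service, to_service, milliseconds) for consecutive steps."""
    return [
        (a_name, b_name, round((b_time - a_time).total_seconds() * 1000))
        for (a_time, a_name), (b_time, b_name) in zip(steps, steps[1:])
    ]


log_flows = reconstruct_flows(LOG_LINES)
```

For trace `t1`, the reconstructed order is auth, payment, orders, and `step_durations(log_flows["t1"])` reports 250 ms and 650 ms between the steps.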

A trace showing a location update request flowing through 10 services. Source: Zipkin

Use case. Debugging production issues where the symptoms appear in one service but the cause is several steps upstream. It's also helpful for compliance work where you need to document what happens to sensitive data as it moves through a system.

Pros. Flow-based maps show you what actually happens at runtime, allowing you to uncover insights that you'd miss if you were only reading the code, such as unexpected service interactions, timing bottlenecks, or paths that get triggered in production but were never formally documented.

Cons. Flow-based maps capture only what runs during the observation window, so rarely triggered paths and edge-case flows may never appear, leaving the picture incomplete. Clean, reliable traces also require instrumentation to be properly configured in every service, which takes effort, especially in a large or legacy codebase.

Architecture maps

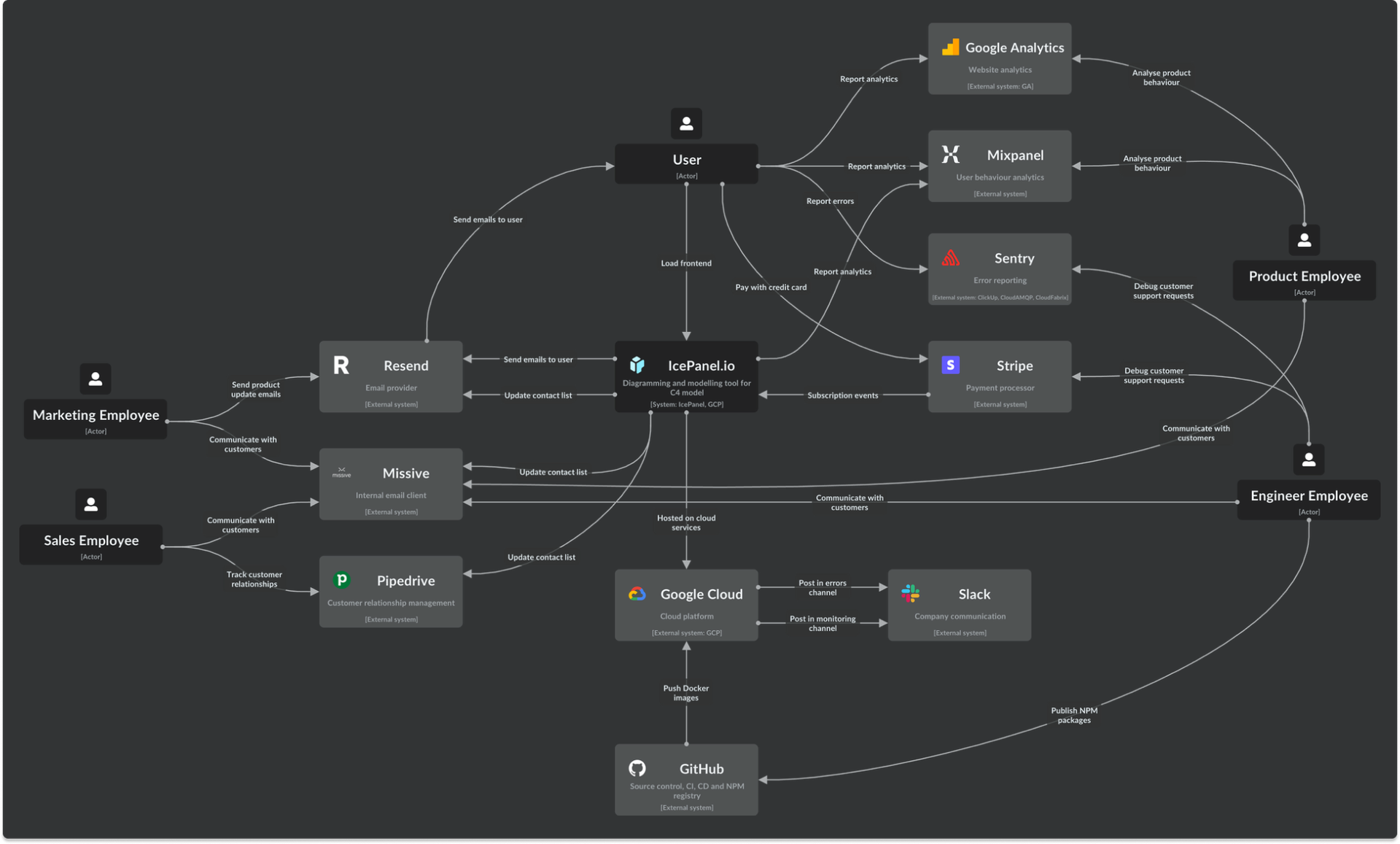

Architecture maps abstract away individual files and data flows, grouping components into higher-level domains—such as payments, user management, notifications, or catalog—and showing how those domains relate to each other.

They are typically created through a combination of workshops, interviews with engineers familiar with the system, and manual review of the codebase. AI-assisted tools can help generate an initial draft of these groupings directly from the code, but human judgment is still essential to validate and refine the result.

A system context map, showing how the product connects to external services. Source: IcePanel

Use case. Planning how to break up a monolith and guiding large-scale migrations.

Pros. Architecture maps effectively communicate how a complex system is organized. By focusing on domains and responsibilities rather than files and functions, they make the structure understandable for both engineers and non-technical stakeholders.

Cons. They always require manual input. Even when AI tools generate an initial draft, human judgment is needed to validate and refine it. Architecture maps also depend on ongoing maintenance—without it, they can quickly become outdated as the system evolves.

Graph-based maps

Graph-based maps represent a codebase as a network of nodes and edges. To build one, you parse the codebase to extract components and relationships, then store them in a graph database such as Neo4j or FalkorDB.

What sets this approach apart is that a single graph can unify multiple types of relationships within one model. Instead of switching between separate maps to understand dependencies, flows, or domains, you can query across all of them at once. For example, you can ask which endpoints write to the payments table, which services depend on those endpoints, and which user flows pass through those services.

This also makes graph-based maps particularly well-suited for AI agents. By traversing relationships in the graph, an agent can answer complex, multi-step questions about the system, rather than relying solely on raw code. This grounded, structured context helps reduce the risk of incorrect assumptions that can arise when reasoning over large codebases.
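The chained question above (endpoints that write to the payments table, then the services that depend on them, then the user flows through those services) can be illustrated without a database. The sketch below keeps typed edges in a plain Python list. All node names and relationship labels are hypothetical, and in a graph database such as Neo4j or FalkorDB the same traversal would typically be written as a single graph query.

```python
# Hypothetical typed edges: (source, relationship, target).
TYPED_EDGES = [
    ("POST /charge", "WRITES_TO", "payments"),
    ("POST /refund", "WRITES_TO", "payments"),
    ("GET /catalog", "READS_FROM", "products"),
    ("checkout-service", "CALLS", "POST /charge"),
    ("support-service", "CALLS", "POST /refund"),
    ("catalog-service", "CALLS", "GET /catalog"),
    ("Checkout flow", "PASSES_THROUGH", "checkout-service"),
    ("Browse flow", "PASSES_THROUGH", "catalog-service"),
]


def sources(relationship, targets):
    """Return every node with an edge of the given type into any of the targets."""
    return {s for s, rel, t in TYPED_EDGES if rel == relationship and t in targets}


# Each step feeds the result of the previous one into the next hop.
writing_endpoints = sources("WRITES_TO", {"payments"})
dependent_services = sources("CALLS", writing_endpoints)
affected_flows = sources("PASSES_THROUGH", dependent_services)
```

Because dependencies, data access, and user flows live in one edge list, each hop is the same operation with a different relationship type. That uniformity is what makes this kind of multi-step traversal practical for an agent.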

Depiction of a graph database

Use case. Legacy modernization programs, architecture reconstruction in poorly documented systems, and any scenarios where AI agents assist with development or analysis.

Pros. A single graph can unify what dependency, flow-based, and architecture maps capture separately. This makes it a powerful foundation for both human analysis and AI-assisted workflows.

Cons. Graph-based maps can be expensive and complex to build. They require substantial upfront analysis, and if the underlying data is incomplete or inaccurate, the resulting graph may provide limited value.

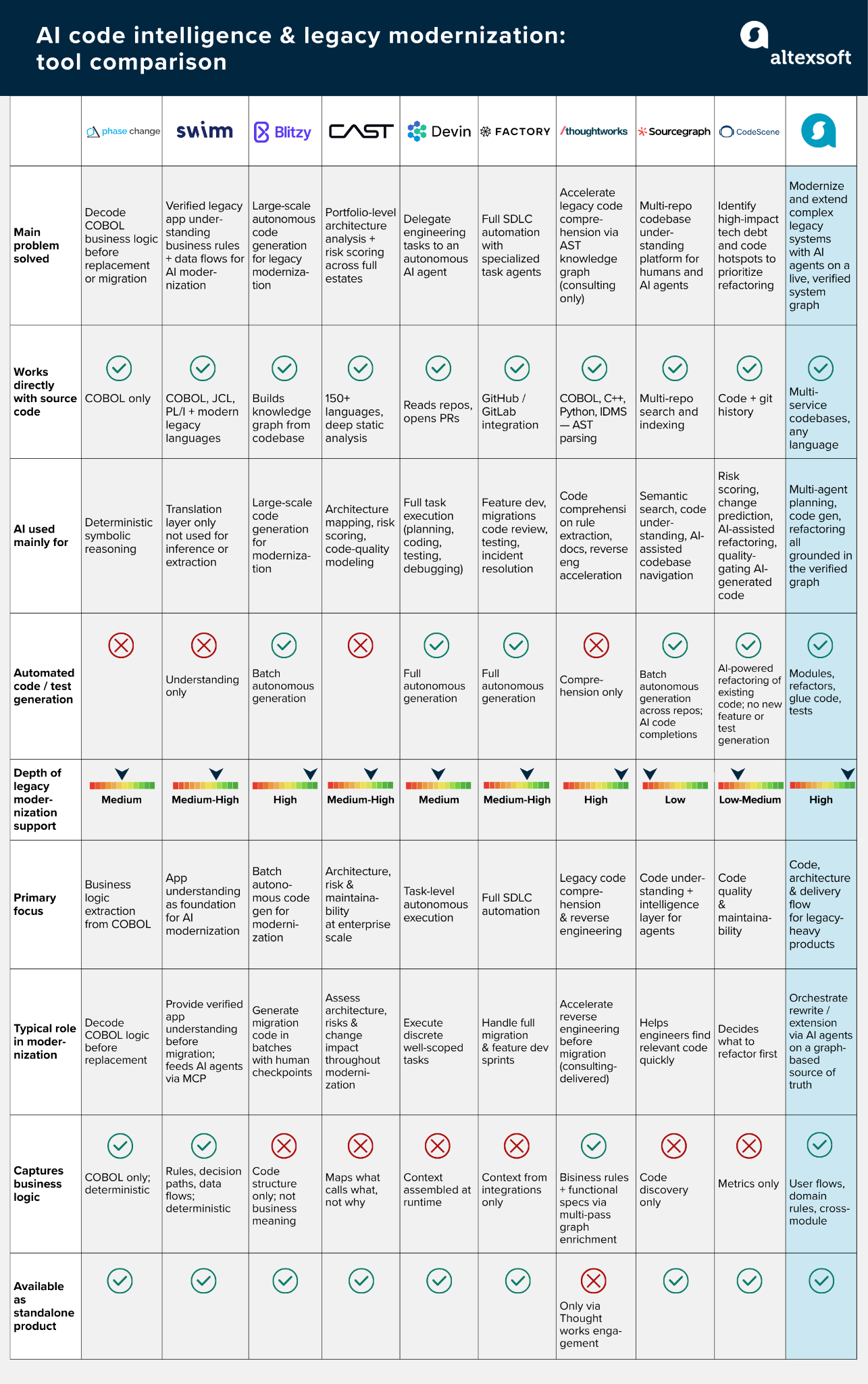

Agentic codebase mapping tools

The market for agentic codebase mapping is still emerging, and most tools approach the problem from adjacent angles rather than providing a unified map of the system.

Explore our datasheet for a thorough overview of codebase mapping and legacy modernization tools.

Some focus on code navigation and search. Platforms like Sourcegraph and Augment Code index large codebases and make them queryable. Under the hood, they build deep semantic representations of dependencies, API contracts, and code history. However, this structure is not exposed as a navigable map. Instead, it is used internally by AI agents to power context-aware search, chat, and automated refactoring.

Others help reconstruct logic in legacy systems. Phase Change Software analyzes legacy codebases, including COBOL, to extract business rules and map them to understandable functions. Thoughtworks’ CodeConcise follows a similar approach, parsing code into an abstract syntax tree (AST) and loading those relationships into a structured knowledge graph. Both tools allow systems to be explored programmatically and accelerate reverse engineering ahead of modernization.

Some solutions operate at the documentation and understanding layer. Swimm began as a platform for walkthrough documentation, linking code directly to continuously updated explanations. More recently, it has expanded into system understanding, using a combination of deterministic analysis and AI to extract business logic, track data flows, and surface cross-system dependencies. This brings it closer to structural mapping, though its core strength remains in maintaining context and documentation over time.

Taken together, existing tools reflect a fragmented landscape. Some help you navigate code, others reconstruct parts of it, and others document it—but few provide a single, unified model that captures structure, behavior, and architecture in one place. That “full-system map” remains an open problem—and an active area of innovation.

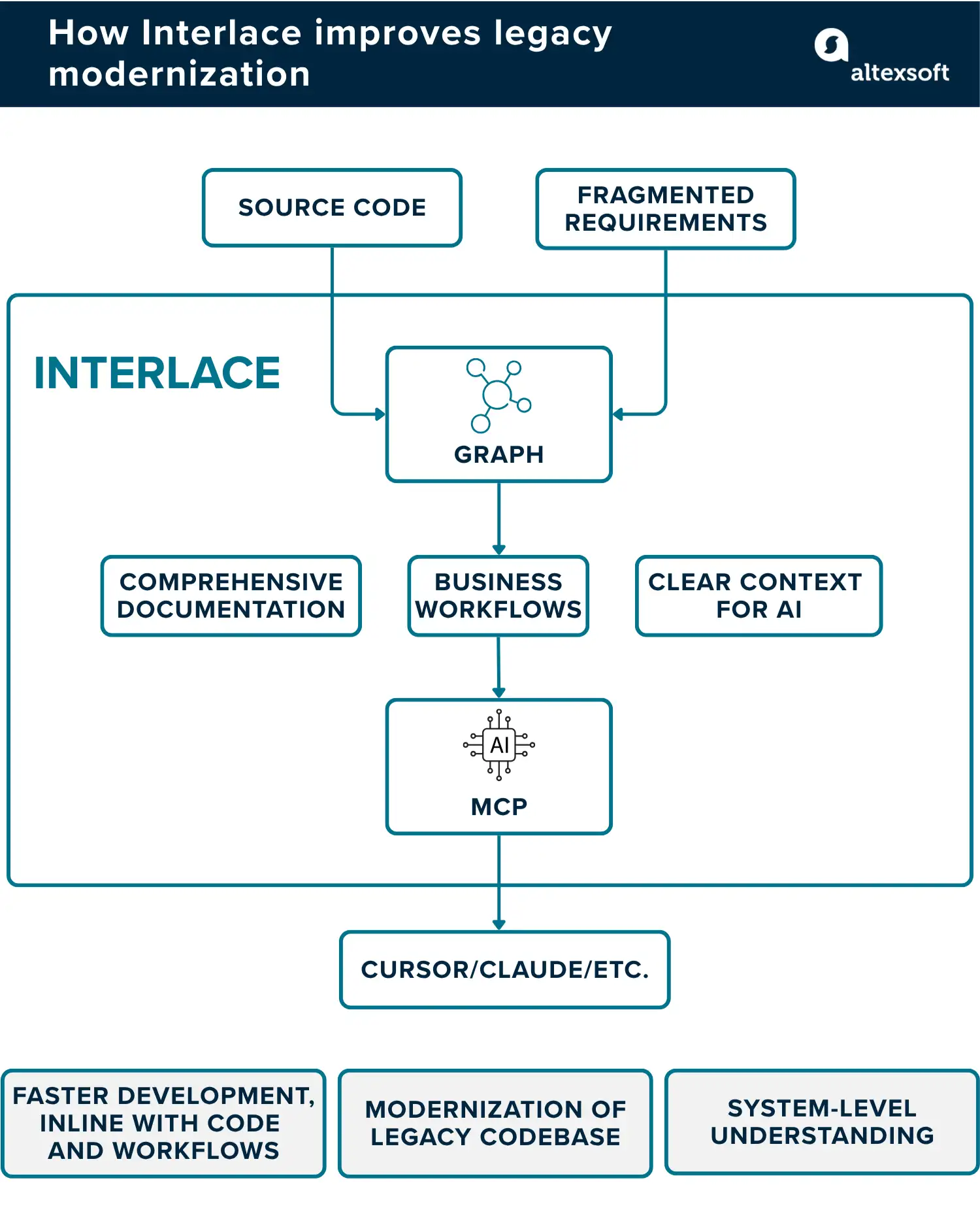

How we use graph-based codebase mapping

Interlace is AltexSoft's take on graph-based codebase mapping. The platform is built specifically for large, complex, and legacy-heavy systems, with the idea that a durable, persistent model of the codebase—one that captures how components relate to each other across sessions—is essential for reliable AI-assisted development and modernization.

Building that model happens in six steps: structural discovery, smart file sampling, technology and endpoint detection, entity and dependency mapping, user flow reconstruction with human-in-the-loop validation, and documentation generation. Each step builds on the last, moving from basic facts about how the codebase is organized to a complete picture of how the system behaves and what it does for users.

The output is stored in FalkorDB as a queryable knowledge graph and exposed via the Model Context Protocol, making it directly accessible to AI agents during active development.

Once the graph is built, engineering teams have a fundamentally different starting point for working with a complex codebase. Here’s what that looks like in practice across the key areas.

Codebase exploration

The graph renders as an interactive map where every service, endpoint, entity, component, and user flow appears as a node. Nodes are color-coded by type so you can tell at a glance what you are looking at. The biggest nodes are the ones with the most connections to other parts of the system, so the components that everything else depends on stand out immediately.
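One simple way to get "bigger means more connected" is to size each node by its number of edges. The sketch below assumes exactly that. The source does not state how Interlace computes node size, and the edge list, `base`, and `step` values are invented for illustration.

```python
from collections import Counter

# Hypothetical undirected connections between graph nodes.
NODE_LINKS = [
    ("checkout", "payments"),
    ("checkout", "inventory"),
    ("billing", "payments"),
    ("support", "payments"),
]


def connection_counts(links):
    """Count how many edges touch each node."""
    counts = Counter()
    for a, b in links:
        counts[a] += 1
        counts[b] += 1
    return counts


def node_sizes(links, base=10, step=5):
    """Map each node to a display size that grows with its connection count."""
    return {node: base + step * n for node, n in connection_counts(links).items()}


sizes = node_sizes(NODE_LINKS)
```

With this data, `payments` has three connections and gets the largest size, which is the visual cue described above.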

Search works semantically, not just by name. Every node has a description that is indexed for meaning, so you can search “payment processing logic” and find the relevant parts of the system even if the code uses completely different variable names. In legacy systems where function names like processData() or handleRequest() tell you nothing, this matters a lot.

Dependency mapping

Dependency mapping is split into three layers, each answering a different question.

The first layer maps endpoints to data. Which endpoints read from which tables? Which ones write? This goes beyond ORM calls to trace raw SQL queries and stored procedure calls as well, because legacy systems rarely use an ORM consistently. The same database table might be accessed six different ways across a service, some of them obvious and some buried several function calls deep.

The second layer maps services to services. Which services call each other, either through direct HTTP calls or through shared data? Two teams might spend months building what they believe are independent modules, only for this layer to reveal that they have both been writing to the same database table in conflicting ways.

The third layer maps the frontend to the backend. Which UI components call which endpoints? This gives you an end-to-end trace from what a user clicks all the way to what hits the database.

All three layers are queryable. You can ask things like “show me every endpoint that writes to the User table” before touching a schema, or “which services have nothing depending on them” to find safe candidates to extract first.
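The two example questions above reduce to simple filters once the layers exist as data. The sketch below uses plain Python collections with hypothetical endpoints, tables, and services. It is not Interlace's query interface, only an illustration of what the questions compute.

```python
# Hypothetical layer 1: (endpoint, access type, table).
ENDPOINT_DATA = [
    ("POST /users", "write", "User"),
    ("GET /users/{id}", "read", "User"),
    ("PATCH /profile", "write", "User"),
    ("POST /orders", "write", "Order"),
]

# Hypothetical layer 2: (caller service, called service).
SERVICE_CALLS = [
    ("orders", "users"),
    ("orders", "notifications"),
    ("reports", "orders"),
]
SERVICES = {"orders", "users", "notifications", "reports"}


def endpoints_writing_to(table):
    """Every endpoint that writes to the given table."""
    return sorted(e for e, access, t in ENDPOINT_DATA if access == "write" and t == table)


def services_without_dependents():
    """Services that no other service calls."""
    depended_on = {callee for _, callee in SERVICE_CALLS}
    return sorted(SERVICES - depended_on)
```

With this sample data, `endpoints_writing_to("User")` returns the two writing endpoints, and `services_without_dependents()` returns only `reports`, since every other service is called by something.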

Data flow tracing

Data flow tracing is where the gap between what a team thinks the system does and how it actually works tends to be most evident. On one project, a five-year-old Node.js monolith with 847 files, the analysis picked 67 files as the ones most likely to reveal how the system was actually organized.

From those 67 files, Interlace found 143 endpoints and 31 distinct entities, several of which the current team had no idea were still active.

On that same project, the client's team planned to extract a payments service that they thought touched five data entities. Interlace's analysis uncovered 11, three of which were shared with the user management and notification services, creating dependencies that would have caused serious problems mid-extraction.
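The check behind a finding like this is an intersection: which entities used by the service you want to extract are also used elsewhere? The sketch below shows the idea with invented service and entity names. They are not the project's actual data, and the counts do not match the figures above.

```python
# Hypothetical mapping of service -> entities it reads or writes.
ENTITY_USAGE = {
    "payments": {"Invoice", "Charge", "Refund", "Customer", "NotificationLog"},
    "user-management": {"Customer", "Session"},
    "notifications": {"NotificationLog", "Template"},
}


def shared_entities(target, usage):
    """Return {entity: set of other services that also touch it}."""
    shared = {}
    for service, entities in usage.items():
        if service == target:
            continue
        for entity in usage[target] & entities:
            shared.setdefault(entity, set()).add(service)
    return shared


overlap = shared_entities("payments", ENTITY_USAGE)
```

Any entity that appears in `overlap` is a dependency that has to be resolved before, or as part of, the extraction.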

Architecture reconstruction

In most legacy systems, the original architecture diagrams are outdated, and the folder structure reflects decisions made years ago rather than how things actually work today.

Interlace reconstructs the architecture by analyzing which endpoints share data and which functions have related concerns, grouping them into logical services based on actual behavior rather than how the code is physically organized.

On the same Node.js monolith project, this analysis revealed something the team had never seen before: FinancialAccount, a database entity that handled platform-wide core financial data, was the most connected entity in the entire system, with three separate domains depending on it. The team had planned to extract payments first, but doing so mid-migration would have caused cascading failures across everything tied to FinancialAccount.

Based on the dependency data, the recommended sequence was to extract FinancialAccount as a shared internal service first, then payments. This new direction, which was driven by the actual connections revealed by the analysis, reduced the risk of disruptions during the migration.
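Deriving an extraction order from dependency data is a topological sort: anything that others depend on comes out first. The sketch below uses `graphlib` from Python's standard library. Apart from FinancialAccount and payments, the component names and all of the edges are hypothetical, and a real plan would weigh more than graph order.

```python
from graphlib import TopologicalSorter

# Hypothetical dependencies: each key depends on the components in its set.
DEPENDS_ON = {
    "FinancialAccount": set(),
    "payments": {"FinancialAccount"},
    "billing": {"FinancialAccount"},
    "reporting": {"FinancialAccount", "payments"},
}

# static_order() yields each component only after everything it depends on.
extraction_order = list(TopologicalSorter(DEPENDS_ON).static_order())
```

In this example, FinancialAccount is always first in `extraction_order`, and payments always precedes reporting. `TopologicalSorter` raises `CycleError` if the dependencies are circular, which is itself a useful signal that two components cannot be cleanly separated.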

Security findings in context

Interlace integrates Semgrep and SonarQube as part of the analysis pipeline, which means that security findings and architecture discoveries end up in the same place. If an endpoint gets flagged for a potential SQL injection vulnerability, that flag is attached directly to the endpoint entry in the graph, alongside everything else already known about it.

So instead of running a security scan, getting a separate report, and then manually figuring out which parts of your architecture those findings relate to, you can ask, “Show me high-risk endpoints that also touch the payments data.”
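Once findings are attached to graph nodes, a question like that becomes a filter over one data structure. The sketch below assumes a hypothetical `EndpointNode` shape and finding fields. It does not reproduce the output schema of Semgrep or SonarQube, or Interlace's internal model.

```python
from dataclasses import dataclass, field


@dataclass
class EndpointNode:
    """Hypothetical graph node for one endpoint."""
    path: str
    tables: set
    findings: list = field(default_factory=list)


# Illustrative data only; the finding fields are assumptions.
ENDPOINT_NODES = [
    EndpointNode("POST /charge", {"payments"}, [{"rule": "sql-injection", "severity": "high"}]),
    EndpointNode("GET /invoices", {"payments"}, [{"rule": "verbose-error", "severity": "low"}]),
    EndpointNode("POST /login", {"users"}, [{"rule": "sql-injection", "severity": "high"}]),
]


def high_risk_endpoints(nodes, table):
    """Return paths of endpoints that touch `table` and carry a high-severity finding."""
    return [
        node.path
        for node in nodes
        if table in node.tables
        and any(finding["severity"] == "high" for finding in node.findings)
    ]
```

With this sample data, `high_risk_endpoints(ENDPOINT_NODES, "payments")` returns only `POST /charge`: the login endpoint is high risk but does not touch payments data, and the invoices endpoint touches it but has no high-severity finding.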

Maintaining your codebase map

Maintaining your codebase map is just as important as creating it. Maps go stale for the same reason documentation does: The codebase keeps evolving while the map stays static.

Here are a few practices to keep your map accurate and useful over time.

Hook updates into your existing workflow. The best place to capture changes is wherever code is already reviewed—pull requests, deployment pipelines, or sprint reviews. If updating the map requires a separate process, it won’t happen consistently.

Automate what you can. Static analysis and dependency extraction can run on a schedule or trigger on new commits. Let tools handle routine updates and reserve human input for decisions that require judgment—such as redefining domain boundaries or reclassifying components whose roles have changed.

Rerun analysis before major decisions. Before a migration or large refactor, regenerate your map. Even a few months of active development can introduce enough new dependencies to change where you should start.
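As one possible way to automate the routine part, a pipeline step could compare the dependency edges extracted from the latest commit against the last stored snapshot and report what changed. The sketch below shows only the comparison. The snapshots are hypothetical, and how edges are extracted and stored is left open.

```python
def diff_edges(previous, current):
    """Compare two sets of (source, target) dependency edges."""
    return {
        "added": sorted(current - previous),
        "removed": sorted(previous - current),
    }


# Hypothetical snapshots: the stored map and the one from the latest commit.
LAST_MAP = {("orders", "payments"), ("orders", "inventory")}
NEW_MAP = {("orders", "payments"), ("orders", "notifications")}

changes = diff_edges(LAST_MAP, NEW_MAP)
```

An empty result means the map is still current. A non-empty one can be posted on the pull request, so that reviewers see new dependencies at the point where the code is already being reviewed.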

With a software engineering background, Nefe demystifies technology-specific topics—such as web development, cloud computing, and data science—for readers of all levels.
