If your company is still running software that was built 10, 15, or 20 years ago, you're not alone. Many organizations, commonly those in banking and healthcare, depend on systems that were cutting-edge at the time but have, over time, become slow, expensive to maintain, and difficult to integrate with modern tools.

To combat this, more companies are modernizing their legacy systems. However, this isn't a simple “rip and replace” job. It requires thorough planning and a strategy that fits your business.

This guide walks you through the different legacy modernization approaches available, how to execute each one step by step, and how AI can improve the modernization journey.

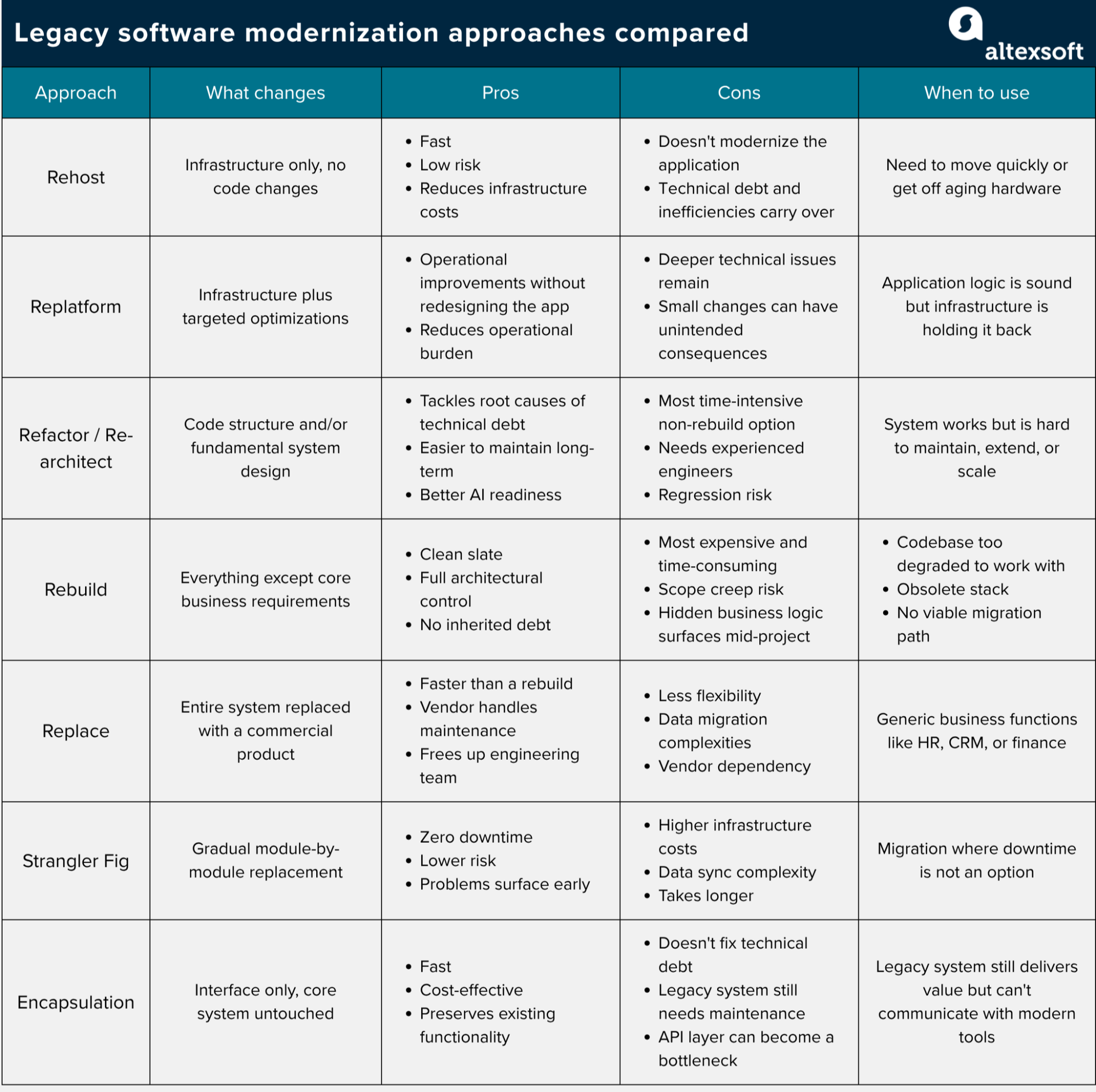

Main legacy software modernization approaches

No single modernization approach can meet the needs of all businesses, because factors like system complexity, budget, risk tolerance, and how fast results are needed differ from one organization to the next.

There are various modernization approaches, with Gartner's “5 Rs” framework being one of the most widely used. Let’s explore it along with other methods.

1. Rehost (lift and shift)

You move the application to a new infrastructure, usually cloud, without changing the code or architecture. Everything stays the same except where it runs. Think of it like moving to a new house—the furniture and other essentials remain the same, but you're now in a different building.

Below are the steps to follow when implementing the rehosting approach.

- Assess your applications because not everything is a good candidate for a straight lift. For example, an application with dozens of hardcoded dependencies and integrations will likely cause problems if moved without any changes, while rehosting internal tools with minimal integrations and traffic is more straightforward.

- Choose your target infrastructure where the application will run after the move. Cloud providers are the common choice for most organizations. However, depending on your security or regulatory constraints, a private cloud or a different on-premises data center may be the more appropriate destination.

- Back everything up, including code, databases, configuration files, and environment variables, so you can recreate the system if something goes wrong.

- Set up the target infrastructure by provisioning virtual machines, configuring networking, and setting up security policies and role-based access controls.

- Migrate workloads using automated migration tools where available. If you're moving to a public cloud provider, tools like AWS Migration Hub can speed up the transfer and reduce errors. If you're moving to a private cloud or a different on-premises data center, the approach will depend on your setup.

- Test thoroughly, covering APIs, user workflows, database queries, authentication flows, and data outputs, to confirm that the application behaves the same way it did before the move.
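
The final testing step can be partly automated with a parity check that sends the same inputs to both environments and reports any differences. Below is a minimal sketch, assuming each environment can be called through a function you supply. The `legacy_lookup` and `rehosted_lookup` functions are hypothetical stand-ins for real HTTP or database calls.

```python
from typing import Any, Callable


def parity_report(
    cases: list[dict[str, Any]],
    legacy_call: Callable[[dict[str, Any]], Any],
    rehosted_call: Callable[[dict[str, Any]], Any],
) -> list[dict[str, Any]]:
    """Run every case against both environments and collect mismatches."""
    mismatches = []
    for case in cases:
        expected = legacy_call(case)
        actual = rehosted_call(case)
        if expected != actual:
            mismatches.append({"case": case, "legacy": expected, "rehosted": actual})
    return mismatches


# Hypothetical stand-ins for calls to the old and new environments.
def legacy_lookup(case: dict[str, Any]) -> dict[str, Any]:
    return {"status": 200, "balance": case["account_id"] * 10}


def rehosted_lookup(case: dict[str, Any]) -> dict[str, Any]:
    return {"status": 200, "balance": case["account_id"] * 10}


cases = [{"account_id": n} for n in (1, 2, 3)]
print(parity_report(cases, legacy_lookup, rehosted_lookup))  # an empty list means no differences
```

In practice, you would build the case list from real traffic samples or existing test scripts, so the check covers the workflows users actually rely on.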

Pros

- Fast to execute with minimal disruption to the application and the teams that depend on it

- Low risk since no code changes are involved

- Reduces infrastructure costs and hardware dependency relatively quickly

Cons

- Doesn't modernize the application itself, so technical debt, performance inefficiencies, and underlying problems all carry over to the new infrastructure.

When to use. Rehosting works well as a low-risk first step before committing to a deeper transformation, particularly when you need to move quickly or get off aging hardware without disrupting the application.

2. Replatform (lift, tinker, and shift)

Replatforming is similar to rehosting in that you still move the application to a new infrastructure; that’s the “lift and shift” part. The difference is the “tinker”: you use the move to make minimal but critical adjustments that let you make the most of the new platform, all without redesigning the application’s core logic.

These are the steps to follow when replatforming.

- Identify exactly what you're changing before writing a single line of code, whether that's the database, runtime, or middleware, and keep everything else fixed.

- Decide how much tinkering makes sense given your timeline and goals. If you're under time pressure, keep the changes minimal and optimize later. If you have more flexibility, use the migration as an opportunity to make more meaningful improvements that will reduce costs or improve performance down the line.

- Set up the target infrastructure by building the new platform configuration alongside the existing one before migrating anything.

- Execute the migration—it's always recommended to do so in stages rather than moving everything at once.

- Perform integration and regression tests after you move each component before proceeding to the next one.

- Decommission the old components once the new setup is stable and validated.
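
The staged approach described above can be expressed as a small runner that migrates one component, runs its checks, and refuses to continue if they fail. This is only a sketch: the `Stage` class, the component names, and the lambda-based migrations are hypothetical placeholders for your own migration scripts and test suites.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Stage:
    name: str
    migrate: Callable[[], None]        # performs the move for one component
    passes_checks: Callable[[], bool]  # integration and regression checks


def migrate_in_stages(stages: list[Stage]) -> tuple[list[str], Optional[str]]:
    """Migrate components in order; stop at the first stage whose checks fail.

    Returns the names of completed stages and the name of the failed stage (or None).
    """
    completed: list[str] = []
    for stage in stages:
        stage.migrate()
        if not stage.passes_checks():
            return completed, stage.name
        completed.append(stage.name)
    return completed, None


# Hypothetical example: move the database and cache to managed services.
state = {"database": "self-managed", "cache": "self-managed"}
stages = [
    Stage("database", lambda: state.update(database="managed"), lambda: state["database"] == "managed"),
    Stage("cache", lambda: state.update(cache="managed"), lambda: state["cache"] == "managed"),
]
print(migrate_in_stages(stages))  # (['database', 'cache'], None)
```

Returning the failed stage instead of pressing on keeps problems isolated to one component, which is the main reason for migrating in stages.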

Pros

- Delivers operational improvements without the cost and risk of redesigning the application.

- Faster than refactoring while still offering more value than a straight rehost.

- Managed services reduce the operational burden on your team.

Cons

- The core application logic remains unchanged, so deeper technical issues aren't addressed.

- Requires careful scoping since changes that seem small can have unintended consequences if boundaries aren't clearly defined.

When to use. Replatforming works well when the application logic is sound, but the current infrastructure is expensive or affecting performance, and a full refactor is more than your timeline or budget can support right now.

3. Refactor (Re-architect)

Refactoring and re-architecting are sometimes treated as separate strategies and sometimes grouped together depending on who you ask. What they have in common is that both go deeper than a rehost or replatform.

Refactoring involves improving the internal structure of an existing system without changing its behavior. It involves tasks like cleaning up messy, hard-to-read code, removing duplicate logic, and breaking large functions into smaller, more manageable ones.

Re-architecting goes further and changes the fundamental design of the application. This could mean breaking a monolith into microservices or moving from a request-based system to an event-driven architecture.

Follow these steps to implement refactoring and/or re-architecting.

- Map the existing architecture before touching anything, so you can understand what the system does, how it's structured, and where the dependencies lie.

- Build a test suite before you touch anything because if the legacy system has no automated tests, you have no way of knowing whether your changes have broken something.

- Refactor incrementally rather than trying to change everything at once, because large sweeping changes are harder to test or reverse.

- If you're also re-architecting, update your CI/CD pipeline because the new system structure will likely require a different deployment process.

- Test after every change because if untested changes accumulate, it becomes hard to trace the root cause if something breaks.
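
To make the "tests first, then refactor" steps concrete, here is a small sketch. The shipping-fee function and its rules are hypothetical, invented purely for illustration. The test records what the legacy code does today and checks that the cleaned-up version returns the same results for every input combination.

```python
def legacy_shipping_fee(weight_kg, express, member):
    # Hypothetical legacy code: nested branches and duplicated logic.
    if express:
        if member:
            fee = 5 + weight_kg * 2
            fee = fee - fee * 0.1
        else:
            fee = 5 + weight_kg * 2
    else:
        if member:
            fee = 5 + weight_kg * 1
            fee = fee - fee * 0.1
        else:
            fee = 5 + weight_kg * 1
    return round(fee, 2)


def shipping_fee(weight_kg, express, member):
    # Refactored version: same behavior, duplication removed.
    rate_per_kg = 2 if express else 1
    fee = 5 + weight_kg * rate_per_kg
    if member:
        fee -= fee * 0.1
    return round(fee, 2)


def test_refactor_preserves_behavior():
    # Characterization test: the legacy output is treated as the expected result.
    for weight in (0, 1, 2.5, 40):
        for express in (True, False):
            for member in (True, False):
                assert shipping_fee(weight, express, member) == legacy_shipping_fee(weight, express, member)


test_refactor_preserves_behavior()
```

Note that the test doesn't judge whether the legacy behavior is right. It only pins it down, so any change in behavior during refactoring is deliberate rather than accidental.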

Pros

- Addresses the root causes of technical debt rather than working around them.

- Makes the system significantly easier to maintain and extend over time.

- Positions the application well for future modernization and AI readiness.

Cons

- The most time-intensive and expensive of the non-rebuild options.

- Requires experienced engineers who understand both the old system and the new architecture.

- High risk of regressions if the test suite is inadequate going in.

When to use. Refactoring is the right choice when the system works but has become difficult to maintain or extend, and is commonly used to address technical debt. Layer on re-architecting when your current structure can no longer support the scale, speed, or new capabilities the business needs.

4. Rebuild

With the rebuild method, you write the application from scratch using modern technologies while keeping the same business functionality. It's the most expensive and time-consuming approach because it's a fresh start where you don’t salvage or reuse any existing code.

These are the steps to take when rebuilding software.

- Document the existing system's behavior before writing a single line of new code.

- If you plan to use this opportunity to make some upgrades, agree on what the new system needs to do before the build starts in order to avoid scope creep.

- Choose a future-proof technology stack and infrastructure that match your business needs and long-term plans.

- Plan for parallel running since even with a full rebuild, you'll likely need both systems live for a period. One practical approach is to have the new system handle all new activity while the old one manages existing records.

- Build and release incrementally rather than waiting until the entire system is built before releasing anything. Deliver in chunks, validate each piece against the original system's behavior, and adjust as you go.

- Treat data migration as a separate workstream because it is almost always harder than expected. Plan for data cleaning, transformation, and validation independently from the build itself.

- Decommission the legacy system once the new system has been running stably in production and all data has been migrated.
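
The data migration workstream mentioned above usually comes down to three jobs: clean, transform, and validate. The sketch below shows one way to structure them. The legacy column names (`CUST_NO`, `EMAIL`, `SIGNUP`) and the date format are assumptions made for the example, not a real schema.

```python
from datetime import datetime


def transform(row: dict) -> dict:
    """Clean one legacy row into the new schema (hypothetical field names)."""
    return {
        "customer_id": int(row["CUST_NO"]),
        "email": row["EMAIL"].strip().lower(),
        "signup_date": datetime.strptime(row["SIGNUP"], "%d/%m/%Y").date().isoformat(),
    }


def migrate(rows: list[dict]) -> tuple[list[dict], list[tuple[dict, str]]]:
    """Transform every row; keep rejected rows with the reason instead of dropping them."""
    migrated, rejected = [], []
    for row in rows:
        try:
            migrated.append(transform(row))
        except (KeyError, ValueError) as exc:
            rejected.append((row, str(exc)))
    return migrated, rejected


legacy_rows = [
    {"CUST_NO": "0042", "EMAIL": " Ada@Example.com ", "SIGNUP": "03/11/2009"},
    {"CUST_NO": "43", "EMAIL": "bob@example.com", "SIGNUP": "2009-11-03"},  # unexpected date format
]
migrated, rejected = migrate(legacy_rows)

# Validation: every source row must be accounted for, either migrated or rejected.
assert len(migrated) + len(rejected) == len(legacy_rows)
print(migrated)       # [{'customer_id': 42, 'email': 'ada@example.com', 'signup_date': '2009-11-03'}]
print(len(rejected))  # 1
```

Keeping the rejected rows and their error messages gives the team a concrete cleanup list, which is easier to plan around than discovering bad records after go-live.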

Pros

- Clean slate with no inherited technical debt.

- Full control over the architecture, technology stack, and design decisions.

- Opportunity to incorporate modern practices and capabilities from the ground up.

Cons

- The most expensive and time-consuming approach.

- High risk of scope creep if requirements aren't locked down early.

- Undocumented business logic in the old system frequently surfaces mid-project and causes delays.

- Requires running both systems in parallel for a period, which adds complexity and cost.

When to use. Consider rebuilding when the codebase is so degraded that engineers spend more time working around it than building on it, vendor support has ended, the existing technology stack is obsolete with no viable migration path, or the original developers are gone, and the current team can’t get a clear picture of how the system works.

5. Replace

Sometimes the best move is to sunset the legacy system entirely and adopt a commercial off-the-shelf (COTS) or SaaS product instead of modernizing what you already have.

Here are the steps to take when following this approach.

- Research your options by evaluating SaaS and COTS products that meet your needs. Consider factors like features, how well they integrate with your other systems, compliance, and cost.

- Understand your data before anything else, because moving years of data into a new system is challenging, and the mapping between old and new data structures is rarely straightforward.

- List every system that connects to the one you're replacing because each of those connections will need to be rebuilt or reconfigured for the new product.

- Run a pilot with a small group of users before rolling it out to everyone, so you can catch integration gaps and usability problems before they become bigger issues.

- Migrate the data by extracting, cleaning, and loading it into the new system. Test it properly before going live because data issues that slip through will cause headaches later.

- Give people time to adjust because getting teams to change how they work day to day is just as hard as the technical side. Budget for training and expect a settling-in period after launch.

- Keep the old system accessible for a while after go-live before fully switching it off, in case anyone needs to reference historical data or something unexpected comes up.
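
One way to test the migrated data before going live is a reconciliation check that compares what you extracted with what landed in the new product. The sketch below assumes both sides have already been exported into the same record shape. The `id` and `name` fields are hypothetical.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    """Stable hash of a record, independent of key order."""
    payload = json.dumps(record, sort_keys=True, default=str)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def reconcile(source: list[dict], target: list[dict], key: str) -> dict:
    """Report records that are missing, unexpected, or changed after the load."""
    src = {record[key]: fingerprint(record) for record in source}
    tgt = {record[key]: fingerprint(record) for record in target}
    return {
        "missing_in_target": sorted(src.keys() - tgt.keys()),
        "unexpected_in_target": sorted(tgt.keys() - src.keys()),
        "changed": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }


source = [{"id": 1, "name": "Ada"}, {"id": 2, "name": "Bob"}, {"id": 3, "name": "Cy"}]
target = [{"id": 1, "name": "Ada"}, {"id": 2, "name": "Bobby"}]
print(reconcile(source, target, "id"))
# {'missing_in_target': [3], 'unexpected_in_target': [], 'changed': [2]}
```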

Pros

- Faster to implement than a rebuild since the product already exists.

- Vendor handles maintenance, updates, and security patches.

- Frees up your engineering team to focus on systems that are unique to your business.

Cons

- Less flexibility since you're working within the constraints of someone else's product.

- Data migration is almost always harder than expected.

- Vendor dependency means you're subject to pricing changes, product decisions, and the vendor's roadmap.

When to use. Replacement works best for standard business functions like HR, finance, or CRM, where a commercial product can do the job just as well, and maintaining a custom solution isn't worth the investment.

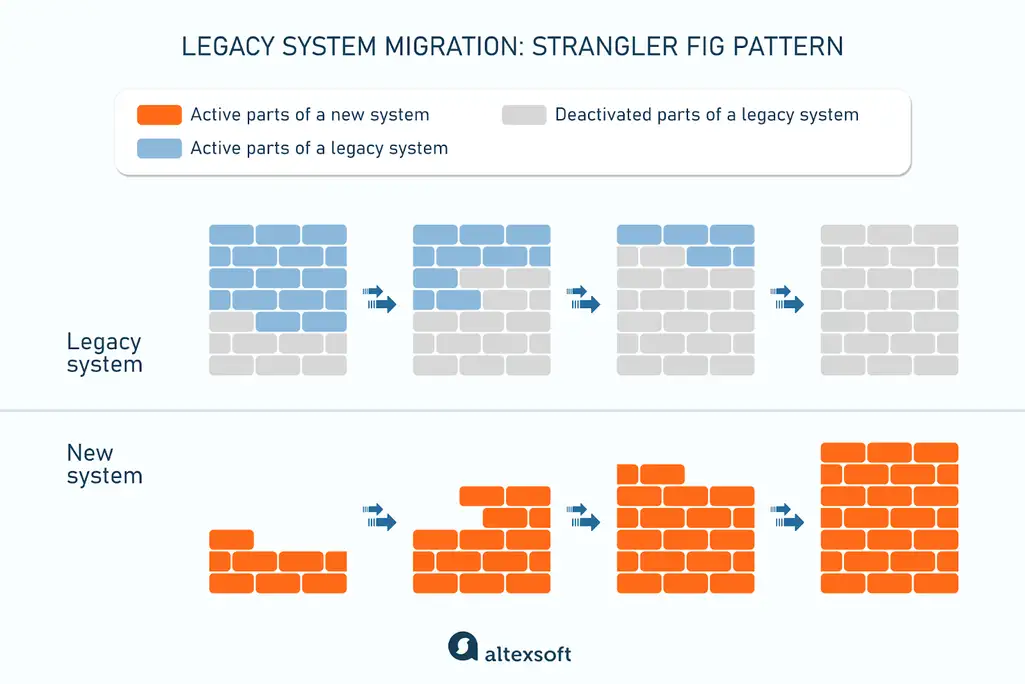

6. The strangler fig pattern

The strangler fig pattern involves building a new system alongside the old one and gradually routing more traffic to the new system until the legacy version can be retired. The name comes from the strangler fig tree, which grows around a host tree and eventually takes its place.

A simpler way to picture it: you're building a new house next to your old one and gradually moving your belongings across, room by room. You only abandon the old house once the new one is fully ready.

Below are the steps to implement the strangler fig pattern.

- Set up the routing mechanism that controls which system handles incoming requests from day one, even if all traffic still goes to the legacy system initially.

- Pick the first module to migrate by looking for something with clear boundaries, relatively low risk, and enough business visibility to show progress. Avoid starting with the most complex and depended-upon piece.

- Build the replacement module in the new system, while leaving the original intact.

- Route traffic progressively by starting with a small percentage of requests going to the new module. While doing so, watch closely for errors, performance issues, and edge cases before increasing the percentage.

- Sort out data synchronization early, as the systems must be in sync while they are both live. You can sync bidirectionally using a shared database or via an event stream between systems.

- Repeat the process for each module by working through the system component by component and validating each one before moving on.

- Decommission the legacy system once all modules have been migrated and validated, remove the routing mechanism, and shut down the old system.
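
The routing mechanism at the heart of this pattern can be quite small. Below is a minimal sketch of percentage-based routing. The module names and the `ROLLOUT_PERCENT` table are hypothetical, and in a real setup this logic would typically live in a proxy, API gateway, or load balancer rather than in application code.

```python
import hashlib

# Hypothetical rollout table: share of traffic (0-100) sent to the new system per module.
ROLLOUT_PERCENT = {"invoices": 10, "reports": 0}


def bucket(user_id: str) -> int:
    """Map a user to a stable bucket from 0 to 99 so they always land on the same system."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def route(module: str, user_id: str) -> str:
    """Return which system should handle this request: 'new' or 'legacy'."""
    percent = ROLLOUT_PERCENT.get(module, 0)  # modules not listed stay on legacy
    return "new" if bucket(user_id) < percent else "legacy"


share = sum(route("invoices", f"user-{i}") == "new" for i in range(10_000)) / 10_000
print(f"{share:.1%} of simulated users reach the new invoices module")
```

Hashing the user ID instead of picking a random number per request means each user consistently sees one system, which makes errors easier to reproduce. Raising a module's percentage only moves additional users to the new system, and setting it back to 0 acts as an immediate rollback.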

Pros

- Zero downtime since the legacy system stays live throughout the migration.

- Lower risk because each new module is validated with real traffic before routing more to it.

- Problems surface early and in isolation rather than all at once during a big cutover.

Cons

- Running two systems in parallel increases infrastructure costs and coordination overhead.

- Data synchronization between the two systems is complex and needs careful planning.

- Takes longer than a full cutover.

When to use. The strangler fig pattern is the right choice when you can't afford downtime during migration and need a way to gradually migrate to a new system without disrupting users.

7. Encapsulation

Encapsulation wraps the existing legacy system in a modern API layer without changing the underlying code. Instead of touching the internals, you expose the system's core functions through interfaces that other applications and services can talk to. The legacy system keeps doing what it does, but it can now communicate with modern tools without requiring direct integration.

Think of it like putting a modern storefront on an old building. The structure behind it hasn't changed, but the entrance is now clean, accessible, and easy for people to use.

The steps to executing encapsulation include

- Identifying which functions and data the legacy system exposes that other systems need access to, because not everything needs to be wrapped, only the parts that other systems depend on.

- Designing the API layer that will sit on top of the legacy system and deciding how external systems will interact with it, including what data is passed in and out.

- Building the API wrapper without touching the legacy code itself, so the internal logic remains unchanged while the interface becomes modern and accessible.

- Testing the integration points by confirming that the systems communicating through the new API are getting the right data and behaving as expected.

- Building new modules around the encapsulated system over time, gradually reducing dependence on the legacy system as the new functionality grows.
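
Here is a minimal sketch of what such a wrapper can look like. The legacy routine, its pipe-delimited record format, and the field names are all hypothetical. The point is that `legacy_get_account` is left exactly as it is, while callers only ever deal with the clean interface in front of it.

```python
from typing import Optional


def legacy_get_account(acct_no: str) -> str:
    """Hypothetical legacy routine: returns a pipe-delimited record. Left untouched."""
    records = {"000123": "000123|SMITH, JANE|0001050075|A"}
    return records.get(acct_no, "")


class AccountApi:
    """Modern-facing wrapper; callers never see the legacy record format."""

    STATUS = {"A": "active", "C": "closed"}

    def get_account(self, account_id: int) -> Optional[dict]:
        raw = legacy_get_account(f"{account_id:06d}")  # legacy expects zero-padded IDs
        if not raw:
            return None
        acct_no, name, balance_cents, status = raw.split("|")
        last, first = [part.strip() for part in name.split(",")]
        return {
            "id": int(acct_no),
            "name": f"{first} {last}".title(),
            "balance": int(balance_cents) / 100,
            "status": self.STATUS.get(status, "unknown"),
        }


print(AccountApi().get_account(123))
# {'id': 123, 'name': 'Jane Smith', 'balance': 10500.75, 'status': 'active'}
```

In a real project, the wrapper class would usually sit behind an HTTP or messaging interface, but the translation work it does stays the same.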

Pros

- Fast and cost-effective compared to more invasive approaches.

- The legacy system continues to operate with minimal disruption.

- Preserves existing functionality and data while enabling integration with modern tools.

Cons

- Doesn't address any underlying technical debt or maintenance problems.

- The legacy system still needs to be maintained, which means ongoing costs.

- The API wrapper can become a bottleneck if the legacy system degrades further.

When to use. Encapsulation works best when the legacy system still delivers real value, the underlying code is in decent shape, and the main problem is that modern systems can't easily communicate with it.

The role of AI in legacy software modernization

AI coding tools provide real value to engineering teams at specific stages of modernization work. Below are some use cases where AI excels.

Understanding legacy codebases. AI tools can scan codebases and surface patterns, hidden dependencies, dead code paths, and performance bottlenecks faster than an engineer could by hand.

Generating documentation. By analyzing the code directly, AI can produce up-to-date, readable descriptions of existing modules and services, API endpoints and their behavior, and relationships between entities.

Code migration and translation. AI tools like Cursor and Claude Code can help translate code from one language or framework to another.

Generating test cases. AI can analyze code paths and generate a baseline test suite for legacy code that has none, which is one of the most common pain points in modernization work.

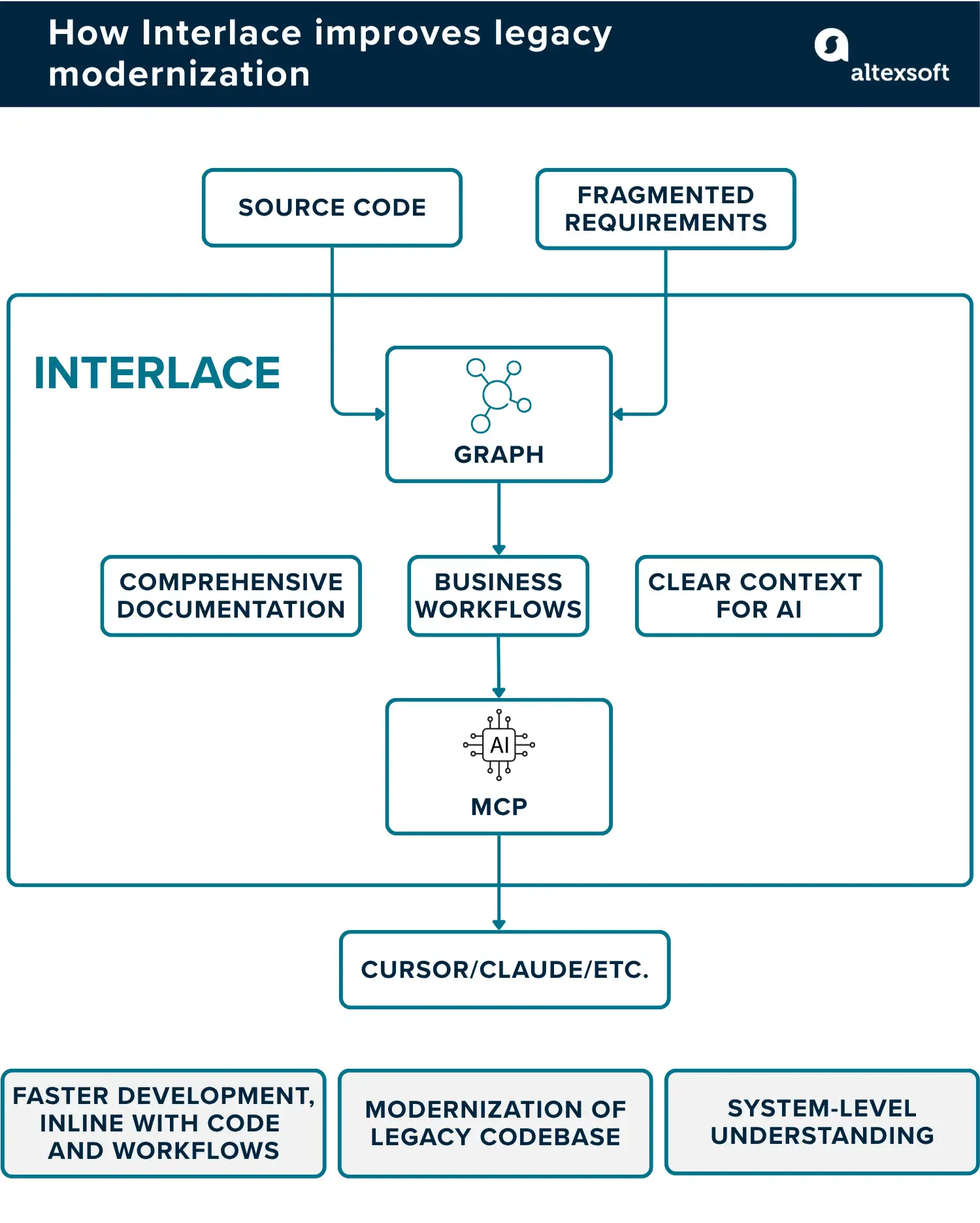

While effective, AI coding tools hit one roadblock when modernizing legacy codebases: they can’t give you a complete, system-wide picture of what you're working with.

Most AI coding tools are session-scoped, meaning when a session ends, everything the model learned about the codebase during that session is gone. The next session starts from zero. On top of that, even the largest context windows aren't big enough to hold an entire enterprise legacy codebase at once, so the model is always reasoning from fragments rather than the full system.

One way to address these issues is by giving AI tools a richer, more persistent view of the codebase to work from, rather than relying on them to infer relationships from raw files on demand. That’s why we built Interlace, a graph-based AI engineering platform that unifies fragmented code, documentation, and product artifacts into a live system map.

By giving AI agents a persistent, queryable map of the entire system rather than fragments of it, Interlace makes it possible to get accurate, system-wide answers that standard AI coding tools simply can't provide.

Interlace helps us and our clients:

- Trace exactly how components connect and what depends on what across the entire codebase, without spending days manually mapping it out.

- Reduce hallucination rates on modernization tasks by 40 to 50 percent compared to running the same tasks without it.

- Onboard new engineers faster by giving them a working mental model of the codebase through the entity catalog, rather than leaving them to read through the code directly.

- Generate a microservices extraction sequence that is grounded in how the system actually works, not assumptions.

Interlace works by processing the codebase through a structured pipeline, mapping file structure, dependencies, user flows, and API endpoints, then exporting everything into a knowledge graph that AI agents can query directly rather than piecing things together from raw files.
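
To illustrate the general idea of querying a dependency graph rather than raw files, here is a deliberately simplified sketch. It is not Interlace's implementation or API. The module names and the `DEPENDS_ON` structure are hypothetical, and a real knowledge graph would carry far more than dependency edges.

```python
from collections import deque

# Hypothetical edges: each module maps to the modules it depends on.
DEPENDS_ON = {
    "billing_ui": ["billing_service"],
    "billing_service": ["customer_db", "tax_rules"],
    "reports": ["customer_db"],
    "tax_rules": [],
    "customer_db": [],
}


def impacted_by(target: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every module that directly or transitively depends on `target`."""
    # Invert the edges so we can walk from a module to the modules that use it.
    dependents: dict[str, set[str]] = {}
    for module, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(module)

    seen: set[str] = set()
    queue = deque([target])
    while queue:
        for parent in dependents.get(queue.popleft(), ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen


print(sorted(impacted_by("customer_db", DEPENDS_ON)))
# ['billing_service', 'billing_ui', 'reports']
```

A query like this answers "what breaks if we change this?" in one step, which is the kind of system-wide question that is hard to answer from file fragments alone.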

Legacy software modernization best practices

Legacy modernization is a large-scale initiative and requires proper planning. Here are some best practices to follow to help you achieve the best results.

Audit your systems before making any decisions. Document every system in your portfolio, map dependencies, and assess code quality. Also account for potential downtime, such as maintenance windows, and for the staff training time needed to bring people up to speed on new tools and processes.

Prioritize based on business impact. Score each system on two dimensions: how critical it is to operations, and how poor its current technical condition is. Systems that score high on both are your starting point. Also, look for quick wins that can show stakeholders early progress and keep them confident.
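
The two-dimension scoring can be as simple as a sorted list. In the sketch below, the 1-to-5 scales, the system names, and the choice to multiply the two scores are assumptions for illustration. Multiplying rewards systems that rate high on both dimensions, but any scoring rule your stakeholders agree on will work.

```python
from dataclasses import dataclass


@dataclass
class System:
    name: str
    criticality: int     # 1 (nice to have) to 5 (essential to operations)
    tech_condition: int  # 1 (healthy) to 5 (very poor)


def prioritize(systems: list[System]) -> list[System]:
    """Sort so that systems scoring high on both dimensions come first."""
    return sorted(
        systems,
        key=lambda s: (s.criticality * s.tech_condition, s.criticality),
        reverse=True,
    )


systems = [
    System("payroll", criticality=5, tech_condition=2),
    System("claims", criticality=5, tech_condition=5),
    System("intranet wiki", criticality=1, tech_condition=4),
]
for system in prioritize(systems):
    print(system.name, system.criticality * system.tech_condition)
# claims 25
# payroll 10
# intranet wiki 4
```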

Choose the right strategy for each system. A customer-facing application that drives revenue needs a different approach than an internal reporting tool. Avoid applying the same modernization approach across the board, and combine approaches when needed.

Build a team that understands both the old system and the new architecture. You need solution architects, senior engineers familiar with the legacy codebase, product owners, and change management leads. The engineers who know the old system are critical to the project’s success, even if they're not writing the new code.

Build your testing foundation early. Legacy systems often have no automated tests. Before touching anything, write tests that establish how the system currently behaves. Then, as you proceed, add other relevant tests, such as unit, integration, regression, smoke, and performance tests.

Keep human judgment in the loop when using AI tools. AI can tell you what code does, but not why it was built that way, which service boundaries make sense for your team, or whether a particular migration risk is worth taking. Those calls, along with things like what AI model is best for different tasks, belong to experienced engineers and should remain with them. Use AI to move faster, but maintain human-in-the-loop systems and other relevant guardrails along the way.

With a software engineering background, Nefe demystifies technology-specific topics—such as web development, cloud computing, and data science—for readers of all levels.
