Airport operations—from baggage movement and boarding flows to gate coordination, staffing decisions, and managing connecting flights—generate large volumes of data every day. But raw data alone doesn't improve operations. Turning that information into something useful requires the right questions, the right metrics, and teams that understand how all these processes actually work on the ground.

In this article, we take a closer look at how data analytics is applied to airport operations, drawing insights from Scott R. Smith, Director of Global Airport Operations Performance & Execution at United Airlines.

Key use cases of data analytics in aviation operations

Not every operational area responds to analytics in the same way. In some cases, data directly drives decisions; in others, it plays a supporting role alongside frontline judgment and process discipline. Below are areas where analytics can improve day-to-day airline operations—and, just as importantly, the customer experience that ultimately defines an airline’s success.

Baggage handling

Scott describes baggage handling, one of an airline's most complicated operations, as “a ten-headed monster.” Analytics is essential to understand what’s happening at every step and to find the root causes of delays or errors: “There are just so many things that interact with a bag throughout its journey, making it incredibly complex. Data really helps us develop the right fixes.”

Baggage generates an enormous number of data signals, so the challenge is tracking the right ones and turning them into actionable insights. “There are about 6 to 8 million data points per day for baggage in United Airlines and, based on that data, we're looking for optimization opportunities to provide a better customer experience. We want customers' bags to arrive on time while also properly communicating the baggage’s location.”

This requires going beyond surface-level statistics, such as bag weight, to get data-driven answers to questions like these:

- Was a missed connection caused by scheduling constraints, weather disruption, or human error?

- Are passengers receiving clear, timely information that reduces unnecessary stress?

- Is the aircraft assigned to the route capable of handling high carry-on volumes?

- Are baggage handling resources deployed efficiently during tight turnarounds?

Baggage handlers don’t intend to lose passengers’ bags, but despite their best efforts, disruptions happen. When they do, the impression left on passengers can be hard to recover from. How an airline responds and communicates in that moment matters as much as the operational fix itself.

Scott shares his experience on this: “My bag had a tight connection through Denver and didn’t make the flight. I received an automated message saying it was put on a subsequent flight that was only 20 minutes behind. I was given two options: Wait and grab a snack in the airport and be compensated for that or head to my final location, where the bag will be delivered later that day. It was a perfect example of how much data we have to consider—not just where the bag is, but how to communicate that effectively so passengers aren’t stressed unnecessarily.”

The goal, ultimately, is for a passenger to never feel like they've lost control of their journey, even when the bags don't arrive with them.

Matching aircraft to route demands

But the data conversation around baggage doesn't stop at tracking and communication. It extends upstream to network planning: specifically, whether the right aircraft is being deployed on the right route. Some routes consistently generate heavy overhead baggage loads, which create gate-checking bottlenecks that slow down boarding and put more bags at risk of delay.

For Scott, this points to a broader scheduling conversation: “Do we have the right plane on the right route? Some routes are notoriously very heavy with overhead baggage. United is retrofitting all of our planes to include one overhead bin for every passenger with the new extended bins, but those aren't complete yet. So network planning and scheduling probably has to look at it and say, if I have a route that is frequently gate-checking 30 to 40 bags, I should probably put a bigger bin aircraft on that route to speed up the boarding process.”
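To make the scheduling question concrete, the sketch below flags routes where a large share of departures gate-check at least 30 bags. It is a minimal illustration, not United's tooling: the `flag_routes` function, the `(route, gate_checked_bags)` input format, the route labels, and the 50% cutoff used to interpret "frequently" are all assumptions chosen for the example.

```python
from collections import defaultdict

# Hypothetical cutoffs: 30 bags is the low end of the range Scott mentions;
# treating "frequently" as "on at least half of departures" is an assumption.
BAG_THRESHOLD = 30
MIN_SHARE = 0.5


def flag_routes(flights, bag_threshold=BAG_THRESHOLD, min_share=MIN_SHARE):
    """Return routes that gate-check at least `bag_threshold` bags on at least
    `min_share` of their departures.

    `flights` is an iterable of (route, gate_checked_bags) tuples, one per departure.
    """
    heavy = defaultdict(int)  # route -> departures at or above the threshold
    total = defaultdict(int)  # route -> all departures
    for route, bags in flights:
        total[route] += 1
        if bags >= bag_threshold:
            heavy[route] += 1
    return sorted(r for r in total if heavy[r] / total[r] >= min_share)


# Hypothetical departure log (route labels are illustrative only).
flights = [
    ("EWR-SFO", 38), ("EWR-SFO", 41), ("EWR-SFO", 22),
    ("ORD-DEN", 12), ("ORD-DEN", 18), ("ORD-DEN", 31),
]
print(flag_routes(flights))  # ['EWR-SFO']
```

A flagged route is only a candidate for a larger-bin aircraft; the swap itself remains a network planning decision that the data informs rather than makes.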

Passenger flow management

Passenger flow management covers everything from how quickly passengers move through the terminal to how efficiently they board the aircraft. Small delays at any point in that journey compound quickly and can ripple across the network.

Analytics helps pinpoint where the friction is and design a targeted fix. For example, notifying passengers in advance about queues and wait times can help them make better decisions.

Airport staffing

Staffing is another area where data analytics can help, even if its impact may be smaller here than in other areas. Scott describes it as a domain where “gut feeling” and institutional knowledge still play the dominant role: “Analytics can confirm approaches and beliefs, but really, the airport workers understand the heartbeat of their location.” Experience and intuition still guide decisions on workforce allocation and shift coverage.

Measuring success: Which metrics matter?

These diverse analytics use cases have one thing in common: They only work as well as the metrics behind them. You can build smarter dashboards, alerts, and prediction models, but if you measure the wrong thing (or rely on a KPI that no longer reflects today’s travel reality), the insights will still miss the mark. That’s why the next question is less about what analytics can do and more about how success should be measured: Which metrics actually matter?

Why some metrics don’t work

Some metrics airlines rely on today were created in a completely different travel world—and have simply persisted over time. “You start looking at those data points and ask what they were originally designed to address,” Scott says. “Chances are, they no longer mirror the problem you're trying to solve.”

One metric airlines and airports often lean on is time to gate—the simple question a passenger is really asking at check-in: “If I drop my bag now, when do I reach the gate?” The catch is that time to gate is usually a bundle of several inputs: check-in processing, walking time, and—crucially—security delay, often expressed as time through TSA (Transportation Security Administration, the US federal agency responsible for airport security screening).

“Time to gate is an industry standard. But think about it before 2001, when there was no TSA. There was no ‘take your shoes off.’ It was a different travel world,” Scott explains. And that’s the problem: once TSA became a major part of the airport journey, the metric drifted from its original purpose. A measure that once reflected general “terminal pace” became heavily influenced by a security system airlines don’t control, and by procedures that have changed dramatically over time.

“Time Through TSA was originally designed to support TSA staffing, which is completely different from a customer and their experience of going through an airport,” Scott adds. Yet the composite metric is still used as if it were an accurate proxy for passenger experience.
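One way to see the distortion is to keep the components of time to gate separate instead of reporting only their sum. In the hypothetical sketch below, the `TerminalJourney` class and all minute values are invented for illustration: two trips with identical check-in and walking times produce very different time-to-gate numbers purely because of the security wait.

```python
from dataclasses import dataclass


@dataclass
class TerminalJourney:
    """Hypothetical breakdown of one passenger's path from bag drop to gate."""
    check_in_min: float  # check-in / bag-drop processing
    walk_min: float      # walking time through the terminal
    security_min: float  # time through the security checkpoint

    @property
    def time_to_gate(self) -> float:
        # The composite metric: everything bundled into one number.
        return self.check_in_min + self.walk_min + self.security_min

    @property
    def excluding_security(self) -> float:
        # The part of the journey that does not depend on the checkpoint queue.
        return self.check_in_min + self.walk_min


quiet_day = TerminalJourney(check_in_min=8, walk_min=10, security_min=12)
busy_day = TerminalJourney(check_in_min=8, walk_min=10, security_min=35)

print(quiet_day.time_to_gate, busy_day.time_to_gate)              # 30 53
print(quiet_day.excluding_security, busy_day.excluding_security)  # 18 18
```

The composite jumps by 23 minutes while nothing in the non-security part of the journey changed, which is exactly what a single time-to-gate figure hides.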

What still works: Airlines can’t control the TSA, but they can use live security data to prepare passengers and nudge better decisions before someone commits to the wrong line. As Scott puts it, “Imagine you’re standing in a long line, and someone tells you there’s another checkpoint with no wait. Most people won’t move, not after they’ve already invested five minutes in the line. But the one person who does? They’re through in three minutes instead of thirty. That’s the gap United tries to close: not by changing TSA staffing, but by supporting passengers early with timely, actionable guidance.”
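The nudge Scott describes can be sketched as a simple comparison: suggest another checkpoint only when its live wait plus the walk to reach it beats the passenger's remaining wait by a meaningful margin. Everything here is hypothetical: the `Checkpoint` type, the checkpoint names, the wait figures, and the 10-minute minimum saving are illustrative assumptions, not a description of United's system.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Checkpoint:
    name: str
    wait_minutes: float  # live wait-time estimate (assumed to be available)
    walk_minutes: float  # walk from the passenger's current position


def recommend_checkpoint(
    remaining_wait: float,
    alternatives: Sequence[Checkpoint],
    min_saving_minutes: float = 10,  # hypothetical threshold to avoid noisy nudges
) -> Optional[Checkpoint]:
    """Return a better checkpoint, or None if staying put is reasonable."""
    if not alternatives:
        return None
    best = min(alternatives, key=lambda c: c.wait_minutes + c.walk_minutes)
    saving = remaining_wait - (best.wait_minutes + best.walk_minutes)
    return best if saving >= min_saving_minutes else None


# A passenger with about 30 minutes left in line; one nearby checkpoint has no wait.
options = [Checkpoint("North", 0, 3), Checkpoint("South", 15, 2)]
suggestion = recommend_checkpoint(30, options)
print(suggestion.name if suggestion else "stay")  # North
```

The threshold matters: since most people won't switch lines, a nudge is only worth sending when the time saved clearly justifies the walk.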

Aggregated metrics hide details

Popular normalized measures like NPS (Net Promoter Score) and CSAT (Customer Satisfaction Score) are designed to standardize feedback into an easy-to-track number that fits neatly on dashboards and makes trends comparable across routes, airports, teams, or time periods.

But that same simplicity can flatten nuance: Different passenger experiences, pain points, and service failures can end up producing a similar score, making it harder to see what’s actually driving the result—or what’s deteriorating under the surface.

“When a single number that paints things in a positive light is built from, say, 17 or more metrics, the underlying context that makes it meaningful disappears,” Scott says.

The alternative isn't abandoning these metrics altogether, but supplementing them with more granular, operation-specific indicators that restore the missing context. A mix of Customer Effort Score, first contact resolution rates, real-time baggage tracking accuracy, gate capacity utilization, queue and wait-time monitoring, and sentiment analysis can build a fuller picture of where the passenger experience is actually breaking down and why.
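The flattening effect is easy to reproduce. In the sketch below, two hypothetical stations get the same equally weighted composite score even though one has two clearly weak areas. The sub-metric names, scores, equal weighting, and the 70-point alert line are assumptions for illustration, not how any specific industry index is computed.

```python
# Hypothetical sub-metric scores (0-100) for two stations.
station_a = {"check_in": 90, "security_info": 90, "boarding": 60, "baggage": 60}
station_b = {"check_in": 75, "security_info": 75, "boarding": 75, "baggage": 75}


def composite(scores: dict) -> float:
    """Equally weighted average: the single dashboard number."""
    return sum(scores.values()) / len(scores)


def below(scores: dict, alert_line: float) -> list:
    """Sub-metrics under the alert line, i.e. the context the composite hides."""
    return [name for name, value in scores.items() if value < alert_line]


for name, scores in [("A", station_a), ("B", station_b)]:
    print(name, composite(scores), below(scores, alert_line=70))
# A 75.0 ['boarding', 'baggage']
# B 75.0 []
```

Reporting the granular view next to the composite keeps the easy-to-track number while showing where a station is actually struggling.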

New data streams: Focusing on social signals

The shift toward customer-centric metrics is supported by entirely new data streams that didn't exist a generation ago. Social media is one of them.

“In 1997, if you had a problem with your flight, you called the airline. Today, you post on social media: ‘United, what’s going on in Newark?’” Scott says. “That’s a data point too. Our social media team can flag a spike in negative sentiment at Newark Airport.”

That shift matters because social media is another data source that can surface problems in real time. When passengers start posting about a delayed baggage carousel or a gate announcement that didn't go out on time, their complaints become an additional operational signal.
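Flagging “a spike in negative sentiment” at an airport can be approximated with a rolling baseline: compare each hour's count of negative mentions with the mean and spread of the preceding hours. This is a minimal sketch under stated assumptions: the hourly counts are invented, sentiment classification is assumed to happen upstream, and the window size, the `k = 3` multiplier, and the `min_std` floor are hypothetical tuning choices.

```python
from statistics import mean, pstdev


def flag_spikes(hourly_negative_mentions, window=6, k=3.0, min_std=1.0):
    """Return indices of hours whose negative-mention count is unusually high.

    Each hour is compared with the mean and standard deviation of the preceding
    `window` hours (a simple rolling z-score). `min_std` keeps perfectly flat
    history from turning tiny changes into alerts.
    """
    spikes = []
    for i in range(window, len(hourly_negative_mentions)):
        history = hourly_negative_mentions[i - window:i]
        baseline = mean(history)
        spread = max(pstdev(history), min_std)
        if hourly_negative_mentions[i] > baseline + k * spread:
            spikes.append(i)
    return spikes


# Hypothetical hourly counts of negative mentions for one airport.
counts = [4, 5, 3, 4, 6, 4, 5, 21, 9]
print(flag_spikes(counts))  # [7]
```

A flag like this only says that something changed; the social media and operations teams still have to work out what happened and respond.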

The response side of this has become just as structured as the monitoring. Many airlines and airports have dedicated social media teams and systems that track mentions around the clock and quickly respond—publicly and privately—to complaints.

Southwest Airlines, for example, has a dedicated social media command center at its Dallas headquarters where a team monitors around 3,100 mentions every day across the social web. What makes it notable is that the command center is directly connected to the airline’s flight operations center, so the team can see delays and disruptions as they happen and reach out to affected passengers before they even think to complain. KLM also has a social media team of more than 130 people handling complaints, comments, and compliments.

Data as a narrative: Telling the entire story

Operational metrics often feel like a scoreboard, but interpreted well, they can tell a richer story. Many teams fall into the trap of focusing only on problems, which shows just one side of the picture.

Scott suggests flipping that perspective: “Take baggage, for example. If you look at a station and say 15 bags missed their connection, that’s a gotcha metric. But when we started building these tools, we chose to show how many bags were saved. We saved 307 bags and missed 15.” The difference is significant. Moving 300 bags across terminals in tight connection windows is, as Scott describes it, “a monumental effort.”

This way, stakeholders can see the scale of effort required to run operations successfully and recognize the hard work of employees who keep the system moving. Scott adds, “No one wakes up in the morning planning to delay 15 customers but seeing that they saved 300 bags gives them satisfaction and a sense of accomplishment.”

This perspective helps humanize metrics: they become a narrative that reflects both wins and losses. Leaders can see where processes succeed, where they fall short, and what adjustments are needed, while giving teams the context they need to feel motivated rather than blamed.

Scott notes that this data-as-a-narrative approach also works in reverse. “If we only saved 100 bags and 20 were missed, that ratio shows a significant performance gap due to staffing issues or management oversight. Either way, the metric tells a complete story and points to what needs attention.”
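The two framings Scott contrasts reduce to the same arithmetic: report bags saved and missed side by side, plus the share of connecting bags that made it. The `connection_success_rate` and `summarize` helpers below are hypothetical names for a minimal sketch; the figures are the ones quoted above.

```python
def connection_success_rate(saved: int, missed: int) -> float:
    """Share of tight-connection bags that made their flight."""
    total = saved + missed
    if total == 0:
        raise ValueError("no connecting bags recorded")
    return saved / total


def summarize(saved: int, missed: int) -> str:
    rate = connection_success_rate(saved, missed)
    return f"Saved {saved}, missed {missed} ({rate:.1%} of connecting bags made it)"


print(summarize(307, 15))  # Saved 307, missed 15 (95.3% of connecting bags made it)
print(summarize(100, 20))  # Saved 100, missed 20 (83.3% of connecting bags made it)
```

The raw miss counts (15 vs. 20) look similar, but the success rate drops by about 12 percentage points, which is the gap the narrative framing makes visible.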

How to approach automation: Take discovery seriously!

When faced with an operational problem, the instinct in many organizations is to build a dashboard. But jumping straight to automation—before clarifying what decision the tool should support, what the final output should look like, or which data points actually matter—is where many analytics efforts go wrong.

Scott flips that order. “I like to start with the manual process and come up with the end product before we develop the tool to get there. I will fight for another month of discovery all day, because this one month will save three months of development.”

The end product is not necessarily a digital tool. It can be a process, such as “having an employee counting something, for example, how many bags go from A to C.” And if the decision is to build an app or a dashboard, that again takes plenty of discovery and feedback: “That extra two weeks or month spent getting the actual UI design into the hands of the folks who are going to use it is invaluable to me.” Adjustments can then be made in the requirements document before development even begins.

The reasoning comes down to how corporate engineering teams operate. “Once they deploy the tool, they move to the next one. They're not sitting around wondering if my team likes what they just built. So to get them to redo it takes another three months, because they have to finish their current project first.”

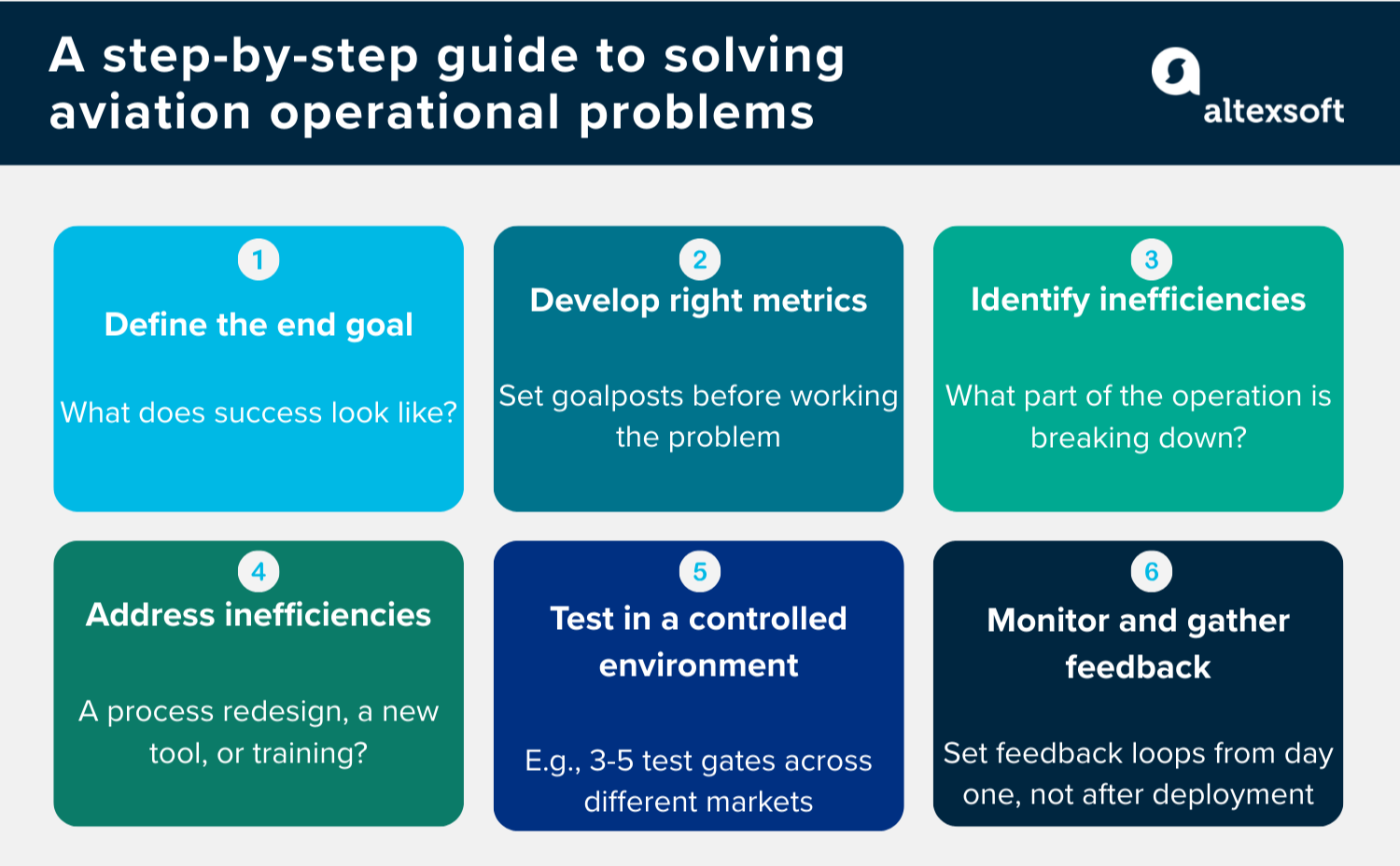

From problem to process: How United Airlines builds a data-driven fix

With all of this in mind, let’s look at how an analytical project can be implemented in practice, based on United Airlines’ experience.

Defining the end goal: What does success look like?

Before automating anything, the first step is defining a clear end goal. In other words, what does success actually look like? As Scott explains: “If we talk about boarding time, we start by naming the problem and the outcome we want. If boarding is taking too long, the goal is straightforward—reduce boarding time, for example, by 20 minutes.”

Developing metrics: How are we going to measure progress?

The next step is to decide how boarding time will be measured. Does it run from the first passenger’s boarding-pass scan to the last scan? Or does it end when the last passenger walks onto the plane?

As Scott puts it, “There has to be a metric by which we drive success. If you don’t develop data points to focus on, it’s just a vague ‘yeah, boarding is better,’ but you don’t really know where performance changed or how.”
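The choice of definition changes the number you report. The sketch below computes boarding time both ways from hypothetical timestamps: first scan to last scan, and first scan to the moment the last passenger is on the aircraft. Using the first scan as the starting point for the second definition is an assumption, as are the function names and times.

```python
from datetime import datetime
from typing import Sequence


def scan_window_minutes(scans: Sequence[datetime]) -> float:
    """Definition 1: first boarding-pass scan to last boarding-pass scan."""
    return (max(scans) - min(scans)).total_seconds() / 60


def until_last_onboard_minutes(scans: Sequence[datetime], last_onboard: datetime) -> float:
    """Definition 2 (assumed start): first scan until the last passenger is on the plane."""
    return (last_onboard - min(scans)).total_seconds() / 60


# Hypothetical timestamps for one departure.
scans = [datetime(2024, 5, 1, 10, 0), datetime(2024, 5, 1, 10, 12), datetime(2024, 5, 1, 10, 27)]
last_onboard = datetime(2024, 5, 1, 10, 34)

print(scan_window_minutes(scans))                       # 27.0
print(until_last_onboard_minutes(scans, last_onboard))  # 34.0
```

The seven-minute gap between the two results covers the walk down the jet bridge and the wait in the aisle, time the scan-only definition never sees.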

Identifying inefficiencies: What can be improved?

Once the metric is set, the next step is working backward to find the inefficiencies. In boarding, that might mean looking at whether the problem occurs at the start, middle, or end of the process, or whether it's tied to a specific passenger group, such as premium or first class. It might also point to process design issues.
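Working backward can start with two simple cuts of the same scan data: how long each third of the boarding process takes, and how quickly scans follow one another within each boarding group. The helpers, group labels, and minute values below are hypothetical; treat this as a sketch of the idea rather than a prescribed analysis.

```python
from collections import defaultdict


def phase_durations(scan_minutes):
    """Split boarding into start/middle/end thirds by passenger count and time each phase."""
    scans = sorted(scan_minutes)
    n = len(scans)
    if n < 3:
        raise ValueError("need at least three scans")
    first_cut, second_cut = scans[n // 3], scans[2 * n // 3]
    return {
        "start": first_cut - scans[0],
        "middle": second_cut - first_cut,
        "end": scans[-1] - second_cut,
    }


def mean_gap_by_group(scans):
    """Average minutes between consecutive scans, attributed to the later scan's group.

    `scans` is a list of (minute_offset, boarding_group) tuples.
    """
    ordered = sorted(scans)
    gaps = defaultdict(list)
    for (prev_minute, _), (minute, group) in zip(ordered, ordered[1:]):
        gaps[group].append(minute - prev_minute)
    return {group: sum(v) / len(v) for group, v in gaps.items()}


print(phase_durations([0, 2, 4, 6, 8, 10, 14, 20, 30]))
# {'start': 6, 'middle': 8, 'end': 16}
print(mean_gap_by_group([(0, "premium"), (1, "premium"), (2, "premium"),
                         (5, "group 2"), (9, "group 2"), (10, "group 3"), (11, "group 3")]))
# {'premium': 1.0, 'group 2': 3.5, 'group 3': 1.0}
```

In this made-up example, the end of boarding takes longer than the first two phases combined, and the slowdown is concentrated in group 2, which would be the first place to look.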

Developing the solution: How can we get there?

Sometimes the fix is less about automation and more about people, training, and process design.

“Maybe it’s flight crew training,” Scott notes. “We want to make sure flight attendants are actively reminding passengers to keep the flow moving and get into their seats. We see people taking their time stowing bags, blocking the aisle. So we roll out updated crew training: Boarding time is a priority—keep things moving.”

Testing in controlled environments: What can be refined?

Instead of rolling out a new process throughout the entire network and risking disruption, United tests changes on a smaller scale. For the boarding time project, “We have dedicated gates where we trial new procedures.” By piloting ideas in controlled environments, the airline can identify what to refine—or discard—before applying the change more broadly.

From there, testing expands regionally. “We usually select three to five gates across different parts of the country because markets respond differently,” Scott says. “East Coast, Midwest, West Coast—we choose a few stations and compare results. Maybe it performs well on the West Coast and in the Midwest, but the East Coast struggles. Then we ask why. Was it a gate layout issue? Training gaps? A staffing constraint?” Examining those variables helps determine what needs adjustment before scaling further.

Once the regional results are clear, the initiative moves to a broader rollout. “We develop a deployment plan and introduce the change at a wider set of gates across multiple systems, then measure the impact,” he says. “If we see an average time savings of one minute—with the strongest results on the West Coast—we dig deeper to understand what drove that performance difference.”
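Comparing pilot regions is, at its core, an average of per-flight savings against a pre-pilot baseline, grouped by region. The sketch below uses hypothetical boarding times chosen to mirror the scenario Scott describes (about one minute saved overall, strongest on the West Coast, weakest on the East Coast); the function names and data are assumptions. Note that it averages the regional means, so each region counts equally regardless of how many flights it contributed.

```python
from statistics import fmean


def savings_by_region(results):
    """Average minutes saved per flight, by region.

    `results` maps region -> list of (baseline_minutes, pilot_minutes) pairs.
    """
    return {region: fmean(b - p for b, p in pairs) for region, pairs in results.items()}


def lagging_regions(savings):
    """Regions whose savings fall below the average of the regional results."""
    overall = fmean(savings.values())
    return sorted(region for region, s in savings.items() if s < overall)


# Hypothetical boarding times in minutes (baseline, pilot) for a few flights per region.
results = {
    "West Coast": [(30, 28), (32, 30)],
    "Midwest": [(31, 30), (29, 28)],
    "East Coast": [(33, 33), (35, 35)],
}
savings = savings_by_region(results)
print(savings)                   # {'West Coast': 2.0, 'Midwest': 1.0, 'East Coast': 0.0}
print(fmean(savings.values()))   # 1.0
print(lagging_regions(savings))  # ['East Coast']
```

A region landing below the rest is where the “why” questions start: gate layout, training gaps, or staffing constraints.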

Building feedback loops from day one: Is it working?

Operational improvements are not one-and-done efforts; they require constant attention and refinement.

The final piece is the feedback loop, which Scott stresses has to be built in from the beginning rather than added later. “We set a feedback loop and say: At week one, three, and five, we'll hold office hours for 30 minutes and have people come in and say, ‘Hey, I like this, this works, this doesn't.’” If something is clearly not working, the team pulls back quickly. If the process holds up, the team can scale: “After three months of continuous improvements with no hiccups, we can deploy to a larger set of groups.”

With a software engineering background, Nefe demystifies technology-specific topics—such as web development, cloud computing, and data science—for readers of all levels.
